Live face tracking in the browser using TensorFlow.js. Part 4

In Part 4 (you read part 1, 2, and 3, right?) We return to our goal of creating a Snapchat-style face filter using what we’ve already learned about face tracking and adding 3D visualization with ThreeJS. In this article, we’re going to use facial cue points to virtualize a 3D model over webcam video for a little augmented reality fun.

You can download a demo version of this project. You might need to enable WebGL support in your web browser to get the performance you want. You can also download code and files for this series. It assumes that you are familiar with JavaScript and HTML and have at least a basic understanding of neural networks.

Adding 3D graphics with ThreeJS

This project will build on the code for the face tracking project we created at the beginning of this series. We will add a 3D scene overlay to the original canvas.

ThreeJS makes it relatively easy to work with 3D graphics, so we are going to use this library to render virtual glasses over our faces.

At the top of the page, we need to include two script files to add ThreeJS and a GLTF file loader for the virtual glasses model we will be using:

<script src="https://cdn.jsdelivr.net/npm/three@0.123.0/build/three.min.js"></script>

<script src="https://cdn.jsdelivr.net/npm/three@0.123.0/examples/js/loaders/GLTFLoader.js"></script>To simplify the task and not worry about how to place the texture of the webcam on the stage, we can overlay an additional transparent canvas (canvas) and draw virtual glasses on it. We are using the CSS below above the tag bodyby placing an output canvas in a container and adding an overlay canvas.

<style>

.canvas-container {

position: relative;

width: auto;

height: auto;

}

.canvas-container canvas {

position: absolute;

left: 0;

width: auto;

height: auto;

}

</style>

<body>

<div class="canvas-container">

<canvas id="output"></canvas>

<canvas id="overlay"></canvas>

</div>

...

</body>The 3D scene requires a few variables and we can add a 3D model loading utility function for GLTF files:

<style>

.canvas-container {

position: relative;

width: auto;

height: auto;

}

.canvas-container canvas {

position: absolute;

left: 0;

width: auto;

height: auto;

}

</style>

<body>

<div class="canvas-container">

<canvas id="output"></canvas>

<canvas id="overlay"></canvas>

</div>

...

</body>We can now initialize all the components of our async block, starting with the size of the overlay canvas, as we did with the output canvas:

(async () => {

...

let canvas = document.getElementById( "output" );

canvas.width = video.width;

canvas.height = video.height;

let overlay = document.getElementById( "overlay" );

overlay.width = video.width;

overlay.height = video.height;

...

})();You also need to set the renderer, scene and camera variables. Even if you’re unfamiliar with 3D perspective and camera math, you don’t need to worry. This code simply positions the camera in the scene so that the width and height of the webcam video correspond to the coordinates of 3D space:

(async () => {

...

// Load Face Landmarks Detection

model = await faceLandmarksDetection.load(

faceLandmarksDetection.SupportedPackages.mediapipeFacemesh

);

renderer = new THREE.WebGLRenderer({

canvas: document.getElementById( "overlay" ),

alpha: true

});

camera = new THREE.PerspectiveCamera( 45, 1, 0.1, 2000 );

camera.position.x = videoWidth / 2;

camera.position.y = -videoHeight / 2;

camera.position.z = -( videoHeight / 2 ) / Math.tan( 45 / 2 ); // distance to z should be tan( fov / 2 )

scene = new THREE.Scene();

scene.add( new THREE.AmbientLight( 0xcccccc, 0.4 ) );

camera.add( new THREE.PointLight( 0xffffff, 0.8 ) );

scene.add( camera );

camera.lookAt( { x: videoWidth / 2, y: -videoHeight / 2, z: 0, isVector3: true } );

...

})();We need to add to the function trackFace just one line of code to render the scene on top of the face tracking output:

async function trackFace() {

const video = document.querySelector( "video" );

output.drawImage(

video,

0, 0, video.width, video.height,

0, 0, video.width, video.height

);

renderer.render( scene, camera );

const faces = await model.estimateFaces( {

input: video,

returnTensors: false,

flipHorizontal: false,

});

...

}The last stage of this puzzle before displaying virtual objects on our face is loading a 3D model of virtual glasses. We found a couple Heart Shaped Glasses by Maximkuzlin on SketchFab… You can download and use another object if you want.

Here’s how to load an object and add it to the scene before calling the function trackFace:

Placement of virtual glasses on the tracked face

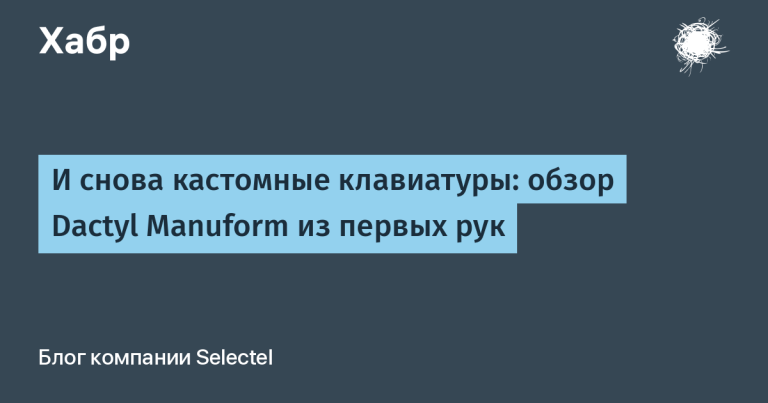

Now the fun begins – put on our virtual glasses.

The annotated annotations provided by the TensorFlow face tracking model include an array of coordinates MidwayBetweenEyeswhere the X and Y coordinates correspond to the screen, and the Z coordinate adds depth to the screen. This makes placing glasses in front of our eyes a fairly straightforward task.

You need to make the Y coordinate negative because the positive Y axis is downward in the 2D screen coordinate system, but upward in the spatial coordinate system. We’ll also subtract the distance or depth of the camera from the Z coordinate to get the correct distances in the scene.

glasses.position.x = face.annotations.midwayBetweenEyes[ 0 ][ 0 ];

glasses.position.y = -face.annotations.midwayBetweenEyes[ 0 ][ 1 ];

glasses.position.z = -camera.position.z + face.annotations.midwayBetweenEyes[ 0 ][ 2 ];Now you need to calculate the orientation and scale of the glasses. This is possible if we determine the direction “up” relative to our face, which points to the top of our head, and the distance between our eyes.

You can estimate the “up” direction using a vector from an array midwayBetweenEyesused for glasses, along with a tracked point for the lower part of the nose. Then we normalize its length as follows:

glasses.up.x = face.annotations.midwayBetweenEyes[ 0 ][ 0 ] - face.annotations.noseBottom[ 0 ][ 0 ];

glasses.up.y = -( face.annotations.midwayBetweenEyes[ 0 ][ 1 ] - face.annotations.noseBottom[ 0 ][ 1 ] );

glasses.up.z = face.annotations.midwayBetweenEyes[ 0 ][ 2 ] - face.annotations.noseBottom[ 0 ][ 2 ];

const length = Math.sqrt( glasses.up.x ** 2 + glasses.up.y ** 2 + glasses.up.z ** 2 );

glasses.up.x /= length;

glasses.up.y /= length;

glasses.up.z /= length;To get the relative size of the head, you can calculate the distance between the eyes:

const eyeDist = Math.sqrt(

( face.annotations.leftEyeUpper1[ 3 ][ 0 ] - face.annotations.rightEyeUpper1[ 3 ][ 0 ] ) ** 2 +

( face.annotations.leftEyeUpper1[ 3 ][ 1 ] - face.annotations.rightEyeUpper1[ 3 ][ 1 ] ) ** 2 +

( face.annotations.leftEyeUpper1[ 3 ][ 2 ] - face.annotations.rightEyeUpper1[ 3 ][ 2 ] ) ** 2

);Finally, we scale the glasses based on the value eyeDist and orient the glasses on the Z axis using the angle between the up vector and the Y axis. And voila!

Execute your code and check the result.

Before moving on to the next part of this series, let’s take a look at the complete code put together:

Bed sheet with code

<html>

<head>

<title>Creating a Snapchat-Style Virtual Glasses Face Filter</title>

<script src="https://cdn.jsdelivr.net/npm/@tensorflow/tfjs@2.4.0/dist/tf.min.js"></script>

<script src="https://cdn.jsdelivr.net/npm/@tensorflow-models/face-landmarks-detection@0.0.1/dist/face-landmarks-detection.js"></script>

<script src="https://cdn.jsdelivr.net/npm/three@0.123.0/build/three.min.js"></script>

<script src="https://cdn.jsdelivr.net/npm/three@0.123.0/examples/js/loaders/GLTFLoader.js"></script>

</head>

<style>

.canvas-container {

position: relative;

width: auto;

height: auto;

}

.canvas-container canvas {

position: absolute;

left: 0;

width: auto;

height: auto;

}

</style>

<body>

<div class="canvas-container">

<canvas id="output"></canvas>

<canvas id="overlay"></canvas>

</div>

<video id="webcam" playsinline style="

visibility: hidden;

width: auto;

height: auto;

">

</video>

<h1 id="status">Loading...</h1>

<script>

function setText( text ) {

document.getElementById( "status" ).innerText = text;

}

function drawLine( ctx, x1, y1, x2, y2 ) {

ctx.beginPath();

ctx.moveTo( x1, y1 );

ctx.lineTo( x2, y2 );

ctx.stroke();

}

async function setupWebcam() {

return new Promise( ( resolve, reject ) => {

const webcamElement = document.getElementById( "webcam" );

const navigatorAny = navigator;

navigator.getUserMedia = navigator.getUserMedia ||

navigatorAny.webkitGetUserMedia || navigatorAny.mozGetUserMedia ||

navigatorAny.msGetUserMedia;

if( navigator.getUserMedia ) {

navigator.getUserMedia( { video: true },

stream => {

webcamElement.srcObject = stream;

webcamElement.addEventListener( "loadeddata", resolve, false );

},

error => reject());

}

else {

reject();

}

});

}

let output = null;

let model = null;

let renderer = null;

let scene = null;

let camera = null;

let glasses = null;

function loadModel( file ) {

return new Promise( ( res, rej ) => {

const loader = new THREE.GLTFLoader();

loader.load( file, function ( gltf ) {

res( gltf.scene );

}, undefined, function ( error ) {

rej( error );

} );

});

}

async function trackFace() {

const video = document.querySelector( "video" );

output.drawImage(

video,

0, 0, video.width, video.height,

0, 0, video.width, video.height

);

renderer.render( scene, camera );

const faces = await model.estimateFaces( {

input: video,

returnTensors: false,

flipHorizontal: false,

});

faces.forEach( face => {

// Draw the bounding box

const x1 = face.boundingBox.topLeft[ 0 ];

const y1 = face.boundingBox.topLeft[ 1 ];

const x2 = face.boundingBox.bottomRight[ 0 ];

const y2 = face.boundingBox.bottomRight[ 1 ];

const bWidth = x2 - x1;

const bHeight = y2 - y1;

drawLine( output, x1, y1, x2, y1 );

drawLine( output, x2, y1, x2, y2 );

drawLine( output, x1, y2, x2, y2 );

drawLine( output, x1, y1, x1, y2 );

glasses.position.x = face.annotations.midwayBetweenEyes[ 0 ][ 0 ];

glasses.position.y = -face.annotations.midwayBetweenEyes[ 0 ][ 1 ];

glasses.position.z = -camera.position.z + face.annotations.midwayBetweenEyes[ 0 ][ 2 ];

// Calculate an Up-Vector using the eyes position and the bottom of the nose

glasses.up.x = face.annotations.midwayBetweenEyes[ 0 ][ 0 ] - face.annotations.noseBottom[ 0 ][ 0 ];

glasses.up.y = -( face.annotations.midwayBetweenEyes[ 0 ][ 1 ] - face.annotations.noseBottom[ 0 ][ 1 ] );

glasses.up.z = face.annotations.midwayBetweenEyes[ 0 ][ 2 ] - face.annotations.noseBottom[ 0 ][ 2 ];

const length = Math.sqrt( glasses.up.x ** 2 + glasses.up.y ** 2 + glasses.up.z ** 2 );

glasses.up.x /= length;

glasses.up.y /= length;

glasses.up.z /= length;

// Scale to the size of the head

const eyeDist = Math.sqrt(

( face.annotations.leftEyeUpper1[ 3 ][ 0 ] - face.annotations.rightEyeUpper1[ 3 ][ 0 ] ) ** 2 +

( face.annotations.leftEyeUpper1[ 3 ][ 1 ] - face.annotations.rightEyeUpper1[ 3 ][ 1 ] ) ** 2 +

( face.annotations.leftEyeUpper1[ 3 ][ 2 ] - face.annotations.rightEyeUpper1[ 3 ][ 2 ] ) ** 2

);

glasses.scale.x = eyeDist / 6;

glasses.scale.y = eyeDist / 6;

glasses.scale.z = eyeDist / 6;

glasses.rotation.y = Math.PI;

glasses.rotation.z = Math.PI / 2 - Math.acos( glasses.up.x );

});

requestAnimationFrame( trackFace );

}

(async () => {

await setupWebcam();

const video = document.getElementById( "webcam" );

video.play();

let videoWidth = video.videoWidth;

let videoHeight = video.videoHeight;

video.width = videoWidth;

video.height = videoHeight;

let canvas = document.getElementById( "output" );

canvas.width = video.width;

canvas.height = video.height;

let overlay = document.getElementById( "overlay" );

overlay.width = video.width;

overlay.height = video.height;

output = canvas.getContext( "2d" );

output.translate( canvas.width, 0 );

output.scale( -1, 1 ); // Mirror cam

output.fillStyle = "#fdffb6";

output.strokeStyle = "#fdffb6";

output.lineWidth = 2;

// Load Face Landmarks Detection

model = await faceLandmarksDetection.load(

faceLandmarksDetection.SupportedPackages.mediapipeFacemesh

);

renderer = new THREE.WebGLRenderer({

canvas: document.getElementById( "overlay" ),

alpha: true

});

camera = new THREE.PerspectiveCamera( 45, 1, 0.1, 2000 );

camera.position.x = videoWidth / 2;

camera.position.y = -videoHeight / 2;

camera.position.z = -( videoHeight / 2 ) / Math.tan( 45 / 2 ); // distance to z should be tan( fov / 2 )

scene = new THREE.Scene();

scene.add( new THREE.AmbientLight( 0xcccccc, 0.4 ) );

camera.add( new THREE.PointLight( 0xffffff, 0.8 ) );

scene.add( camera );

camera.lookAt( { x: videoWidth / 2, y: -videoHeight / 2, z: 0, isVector3: true } );

// Glasses from https://sketchfab.com/3d-models/heart-glasses-ef812c7e7dc14f6b8783ccb516b3495c

glasses = await loadModel( "web/3d/heart_glasses.gltf" );

scene.add( glasses );

setText( "Loaded!" );

trackFace();

})();

</script>

</body>

</html>What’s next? What if we also add facial emotion detection?

Would you believe that all this is possible on one web page? By adding 3D objects to real-time face tracking, we created magic with the camera right in the web browser. You might be thinking, “But heart-shaped glasses exist in real life …” And it’s true! What if we create something truly magical, like a hat … that knows how we feel?

Let’s create a magic hat (like in Hogwarts!) For detecting emotions in the next article and see if we can make the impossible possible by leveraging the TensorFlow.js library even more! See you tomorrow at the same time.

Tracking faces in real time in the browser. Part 1

Tracking faces in real time in the browser. Part 2

Tracking faces in real time in the browser. Part 3

Find out the detailshow to get a Level Up in skills and salary or an in-demand profession from scratch by taking SkillFactory online courses with a 40% discount and a promotional code HABR, which will give another + 10% discount on training.

Other professions and courses

PROFESSION

COURSES