How we use multithreading in the geometric core

This post was written by Tatyana Mitina of C3D Labs, formerly of Intel (Habr readers may know her from the story “I am 57 years old and I am a scrum-master”).

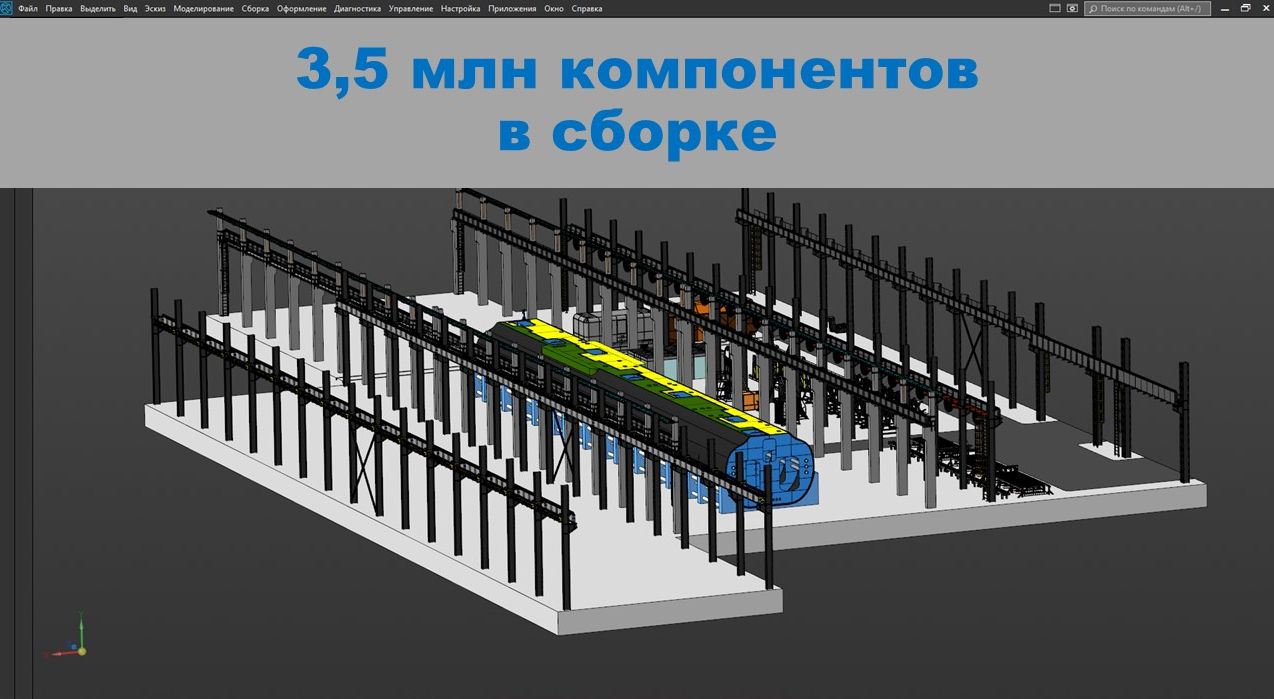

Model of a plant with technological equipment in KOMPAS-3D

OKB LLC (Novosibirsk)

Parallel computing is our future.

And it always will be!

This old programming joke reminds us of the importance of using multithreading in applications and the prospects for the development of parallel computing, and also hints at the complexity of parallel programming.

We started work on organizing multi-threaded data processing in the C3D geometric core several years ago. Multithreading support in our case includes two components:

- The use of multi-threaded computing in the kernel.

- Ensuring kernel thread safety, which, in addition to supporting the kernel's own parallel computations, also supports user multithreading. In other words, it makes kernel interfaces safe to use in parallel computations in a user application.

To organize the internal multithreading of the kernel, we use OpenMP. This is an open standard for parallelizing C, C++ and Fortran programs, which is implemented to one degree or another by most popular compilers.

Using OpenMP to parallelize the kernel code lets us keep the kernel cross-platform and compatible across compilers.

Compilers support OpenMP to varying degrees. For example, at the time of writing, the Intel C++ compiler implements OpenMP 4.5 and a subset of OpenMP 5.0, while the Microsoft C++ compiler supports only OpenMP 2.0.
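Since the supported OpenMP level differs between compilers, it can be useful to check what a given build actually provides. The sketch below relies only on the standard `_OPENMP` macro, which a conforming compiler defines as the release date (yyyymm) of the specification it implements. The function names are illustrative and are not part of C3D.

```cpp
#include <string>

// Returns the OpenMP specification date (yyyymm) reported by the compiler,
// or 0 when the code is built without OpenMP support.
long openmpSpecDate() {
#ifdef _OPENMP
    return _OPENMP;
#else
    return 0;
#endif
}

// Maps the specification dates mentioned in the text to version names.
std::string openmpVersionName(long specDate) {
    switch (specDate) {
        case 0:      return "none";
        case 200203: return "2.0";   // C/C++ specification, March 2002
        case 201511: return "4.5";   // November 2015
        case 201811: return "5.0";   // November 2018
        default:     return "other";
    }
}
```

A build could log `openmpVersionName(openmpSpecDate())` at startup to make it visible which OpenMP features the parallel loops may rely on.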

Parallel computing in a geometric kernel

The main multithreaded operations of the C3D kernel include, among others:

- planar projection,

- polygon mesh calculation,

- calculation of mass-inertia properties,

- converter operations.

Run time of the MassInertiaProperties() function in different kernel multithreading modes, using KOMPAS-3D as an example

The implementation of parallel processing of independent data, as a rule, is relatively simple and quite effective.
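As an illustration of the simple case, here is a minimal sketch of an OpenMP loop over independent data. It is not taken from the kernel: the function name and the data layout are assumptions made for the example. Each iteration reads its own inputs and writes its own output slot, so no synchronization is required.

```cpp
#include <cmath>
#include <vector>

// Computes the length of each 2D vector (xs[i], ys[i]) independently.
// Assumes xs and ys have the same size.
std::vector<double> lengths(const std::vector<double>& xs,
                            const std::vector<double>& ys) {
    const int n = static_cast<int>(xs.size());
    std::vector<double> out(xs.size());
    // A signed loop index keeps the loop valid even for OpenMP 2.0 compilers.
    // Without OpenMP enabled, the pragma is ignored and the loop runs sequentially.
    #pragma omp parallel for
    for (int i = 0; i < n; ++i) {
        out[i] = std::sqrt(xs[i] * xs[i] + ys[i] * ys[i]);
    }
    return out;
}
```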

However, it is much more common for objects processed in different threads to depend on each other (for example, they use the same data), or for the same object to take part in calculations in different threads. Then the task of ensuring thread-safe access to the processed data comes to the fore.

The simplest approach is locking: a thread gains exclusive access to shared data by locking it.

This straightforward method often increases the time threads spend waiting for each other, which can negate the potential benefit of parallel computing and sometimes even slow the calculation down.
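To make the cost of this approach concrete, here is a minimal sketch of a shared cache protected by a single mutex. The class and function names are hypothetical and the "calculation" is a placeholder. The code is correct, but every lookup from every thread goes through the same lock, so the threads effectively take turns.

```cpp
#include <cstddef>
#include <map>
#include <mutex>
#include <thread>
#include <vector>

// A shared cache guarded by one mutex: thread-safe, but all lookups are serialized.
class LockedCache {
public:
    // Returns the cached square of key, computing it on first use.
    long get(int key) {
        std::lock_guard<std::mutex> lock(mutex_);  // exclusive access to values_
        auto it = values_.find(key);
        if (it == values_.end()) {
            it = values_.emplace(key, static_cast<long>(key) * key).first;
        }
        return it->second;
    }
    std::size_t size() {
        std::lock_guard<std::mutex> lock(mutex_);
        return values_.size();
    }
private:
    std::mutex mutex_;
    std::map<int, long> values_;
};

// Runs several threads that all query the same keys through the shared cache
// and returns the sum of everything they read.
long sumWithLockedCache(int threadCount, int keyCount) {
    LockedCache cache;
    std::vector<long> partial(threadCount, 0);
    std::vector<std::thread> threads;
    for (int t = 0; t < threadCount; ++t) {
        threads.emplace_back([&cache, &partial, t, keyCount] {
            for (int k = 1; k <= keyCount; ++k) partial[t] += cache.get(k);
        });
    }
    for (auto& th : threads) th.join();
    long total = 0;
    for (long p : partial) total += p;
    return total;
}
```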

In the C3D kernel, both the efficiency of parallel computing and the thread safety of objects are ensured by a special mechanism: multi-threaded caches.

Thread safe access to kernel objects

Data caching is one way to optimize calculations. It is based on the assumption that the parameter values for which calculations are carried out are not arbitrary, but follow some (predetermined or statistically predictable) regularity, which makes it possible to reuse previously calculated results.

Caching is usually effective in sequential computations, but in parallel execution it can cause “data races”, when multiple threads compete for the shared cached data.

To solve the problem of thread-safe data access, we use multi-threaded caching.

How multi-threaded caches work

The multithreaded cache mechanism provides thread-safe access to the data of kernel objects and makes it possible to efficiently parallelize computations in cases when data is processed simultaneously in several threads.

Each thread works with its own copy of cached data, which prevents competition for data between threads and minimizes the use of locks.

The multi-threaded cache of an object is managed by its cache manager, which is responsible for creating, storing, and handing out the object's cached data for the current thread.

It is important to note that multi-threaded caching works effectively both in sequential execution of calculations and in parallel calculations.

A clear advantage is that switching to multi-threaded caches requires minimal code changes.
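The idea of per-thread cache copies can be sketched as follows. This is not the C3D implementation: `CurveCache`, `CacheManager`, `Curve`, and `evaluateInThreads` are hypothetical names, and keying the copies by `std::thread::id` is an assumption made to keep the example short. The point is that the lock is held only while the manager looks up the calling thread's copy, and the cached data itself is then read and updated without locking.

```cpp
#include <cstddef>
#include <map>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical cached data of one object: the last evaluated parameter and result.
struct CurveCache {
    bool   valid = false;
    double param = 0.0;
    double value = 0.0;
};

// Hypothetical cache manager: hands each thread its own CurveCache copy.
class CacheManager {
public:
    // Returns the cache of the calling thread, creating it on first use.
    CurveCache& forCurrentThread() {
        std::lock_guard<std::mutex> lock(mutex_);  // held only for the map lookup
        return caches_[std::this_thread::get_id()];
    }
    std::size_t copies() {
        std::lock_guard<std::mutex> lock(mutex_);
        return caches_.size();
    }
private:
    std::mutex mutex_;
    std::map<std::thread::id, CurveCache> caches_;  // std::map keeps references stable
};

// An object whose evaluation reuses the previous result for a repeated parameter.
class Curve {
public:
    double evaluate(double t) {
        CurveCache& c = manager_.forCurrentThread();
        if (!c.valid || c.param != t) {  // cache miss: recompute, no lock is held here
            c.param = t;
            c.value = t * t + 1.0;       // placeholder for an expensive calculation
            c.valid = true;
        }
        return c.value;
    }
    std::size_t cacheCopies() { return manager_.copies(); }
private:
    CacheManager manager_;
};

// Evaluates the curve at params[i] on a separate thread and collects the results.
std::vector<double> evaluateInThreads(Curve& curve, const std::vector<double>& params) {
    std::vector<double> results(params.size(), 0.0);
    std::vector<std::thread> threads;
    for (std::size_t i = 0; i < params.size(); ++i) {
        threads.emplace_back([&curve, &params, &results, i] {
            results[i] = curve.evaluate(params[i]);
        });
    }
    for (auto& th : threads) th.join();
    return results;
}
```

In sequential code the same `Curve` simply ends up with a single cache copy, which matches the claim that the mechanism works in both sequential and parallel execution.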

The operation of the multithreaded cache mechanism is controlled by switching the kernel multithreading mode.

Kernel multithreading modes

The kernel multithreading mode controls the thread safety mechanism of kernel objects, and also determines which operations in the kernel will be parallelized.

The C3D core can work in the following modes:

- Mode Off – kernel multithreading is disabled. All kernel operations are performed sequentially. The mechanism that ensures thread safety of kernel objects is disabled.

- Mode Standard – standard multithreading mode. Limited parallelization of kernel operations works (only operations processing independent data are parallelized). The thread safety mechanism of kernel objects is disabled.

- Mode SafeItems – thread safety mode of kernel objects, in which the multi-threaded caching mechanism is turned on, but parallelization of kernel operations is still limited. This mode is designed to support multi-threaded operations in user applications.

- Mode Items – the maximum multithreading mode of the kernel, in which the multi-threaded caching mechanism is turned on and all kernel operations that can be performed in parallel are parallelized.

Developers working with the C3D core have the ability to dynamically change its multithreading mode.
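The relationship between the four modes can be summarized in a short sketch. The enum, the predicates, and the scoped helper below are hypothetical and only mirror the description above. They are not the C3D declarations, which should be taken from the kernel headers.

```cpp
#include <atomic>

// Hypothetical mirror of the four modes, ordered from least to most multithreaded.
enum class MtMode { Off = 0, Standard = 1, SafeItems = 2, Items = 3 };

// Process-wide current mode; the maximum mode is assumed as the default.
inline std::atomic<MtMode>& currentMode() {
    static std::atomic<MtMode> mode{MtMode::Items};
    return mode;
}

// Multi-threaded caches (object thread safety) are on from SafeItems upwards.
bool cachesEnabled(MtMode m) { return m >= MtMode::SafeItems; }

// Operations on independent data are parallelized in every mode except Off.
bool independentLoopsParallel(MtMode m) { return m >= MtMode::Standard; }

// Operations on shared data are parallelized only in the maximum mode.
bool allLoopsParallel(MtMode m) { return m == MtMode::Items; }

// Switches the mode for the lifetime of a scope and restores the previous one.
class ScopedMode {
public:
    explicit ScopedMode(MtMode m) : previous_(currentMode().exchange(m)) {}
    ~ScopedMode() { currentMode().store(previous_); }
    ScopedMode(const ScopedMode&) = delete;
    ScopedMode& operator=(const ScopedMode&) = delete;
private:
    MtMode previous_;
};
```

A scoped helper of this kind is one way to switch the mode around a particular calculation without forgetting to switch it back.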

User multithreading support

The implementation of the geometric kernel is focused on supporting multi-threaded use of kernel interfaces in user applications.

How kernel thread safety is ensured

All geometrical objects of the kernel are thread safe, provided that the mechanism of multithreaded caching is used (multithreading mode is not lower than SafeItems).

Most kernel operations (including those that are not multithreaded) are thread safe, i.e. can be used in multiple threads if the multithreading mode is set to at least SafeItems.

Kernel locks are implemented on top of native synchronization mechanisms (such as WinAPI on Windows and the pthread API on Linux), which makes kernel interfaces safe to use in user applications regardless of the parallelization mechanism they use.

The kernel provides interfaces for notifying it of the start and end of parallel computations that use kernel interfaces.

Important! A user application that calls C3D interfaces from multiple threads should notify the kernel when it enters and exits parallel computing.

Managing kernel multithreading modes

C3D kernel users have the ability to dynamically change the kernel multithreading mode.

Changing the multithreading mode allows you to:

- manage thread safety of kernel objects (enable / disable multithreaded caching)

- determine which kernel operations will be parallelized – all or only those that process independent data.

When can this come in handy? In some cases, the presence of internal parallel kernel loops can affect the efficiency of external parallelization in a user application.

Important! The need to switch the kernel multithreading mode should be analyzed in each case.

Using kernel interfaces in a multi-threaded application

Before using the kernel interfaces in several threads, you need to make sure that the kernel is set to multithreading mode not lower than SafeItems. By default, the kernel runs in maximum multithreading mode.

When using parallel mechanisms other than OpenMP, the user application must notify the kernel about entering and leaving the parallel region.

To do this, we recommend one of the following methods:

- the class ParallelRegionGuard (a scope guard for the parallel region),

- the functions EnterParallelRegion and ExitParallelRegion,

- the macros ENTER_PARALLEL and EXIT_PARALLEL.

Examples of kernel notifications about entering and leaving a parallel region
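The guard-based variant can be sketched as follows. The names `ParallelRegionGuard`, `EnterParallelRegion`, and `ExitParallelRegion` come from the text, but the declarations below are stand-ins inside a `sketch` namespace with assumed no-argument signatures. The real declarations come from the C3D headers and may differ. User threads are created here with `std::thread`, a mechanism other than OpenMP.

```cpp
#include <atomic>
#include <thread>
#include <vector>

namespace sketch {

// Stand-ins for the kernel notification functions (assumed signatures).
// Here they only count how many parallel regions are currently open.
std::atomic<int> regionDepth{0};
void EnterParallelRegion() { ++regionDepth; }
void ExitParallelRegion()  { --regionDepth; }

// Scope guard: notifies on construction and again on destruction,
// so the exit notification is not skipped on early return or exception.
class ParallelRegionGuard {
public:
    ParallelRegionGuard()  { EnterParallelRegion(); }
    ~ParallelRegionGuard() { ExitParallelRegion(); }
    ParallelRegionGuard(const ParallelRegionGuard&) = delete;
    ParallelRegionGuard& operator=(const ParallelRegionGuard&) = delete;
};

// Computes i * i on each of threadCount user threads inside a notified region.
std::vector<int> squaresInRegion(int threadCount) {
    std::vector<int> out(threadCount, 0);
    ParallelRegionGuard guard;               // enter before the first thread starts
    std::vector<std::thread> threads;
    for (int i = 0; i < threadCount; ++i) {
        threads.emplace_back([&out, i] {
            out[i] = i * i;                  // calls to kernel interfaces would go here
        });
    }
    for (auto& t : threads) t.join();        // all workers finish before the guard exits
    return out;
}

}  // namespace sketch
```

The function and macro variants follow the same pattern, with the enter notification placed before the threads start and the exit notification after they have all been joined.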

Conclusion

To summarize: we actively use internal multi-threaded computing. A distinctive feature of the C3D kernel is the ability to select the multithreading mode used for mathematical calculations, and users can change this internal mode dynamically.

The kernel supports user multithreading, providing thread-safe kernel operations and providing thread-safe access to kernel object data. The kernel can be configured for multithreading in a user application.