How we stopped testing everything at once with a single task and sped up the grading of internship test assignments

Nobody likes test assignments: they are long, expensive, and not always indicative. But for an internship you cannot drop them, because of the large flow of applicants with no portfolio or work experience. For several years, we at Kontur tested backend developers with one big task that was designed and graded by a single developer. Then everything broke, and so it had to change. We will tell you how not to build a test assignment and what our internship selection looks like now.

Why internship selection is impossible without a test

We receive about 1,000 internship applications every year. Talking to every candidate is impossible: it takes too much time and effort. In most cases there is no portfolio to look at either, because the internship audience is students. So internship selection cannot do without a test: it saves the time of candidates, HR staff, and the developers who run the interviews.

Since we cannot drop the test, it should be interesting, not take too long to complete, and reflect the company's requirements for developers. We now have six tasks that check the basic knowledge and skills we need. But we are this optimized and sensible only now; it used to be different. First came the mistakes, a resignation, and a drawn-out selection.

One head for all processes

In 2019, the internship test at Kontur was one big task that checked several skills at once: basic programming knowledge, clean code, algorithms, data structures, and whatever else we happened to check along the way. How do you decide that this part of the task is about code cleanliness and that part is about data structures? How do you build a uniform grading scale and grade submissions against it?

To make the suffering complete, one developer both designed the task and graded all the submissions. There were no guides, checklists, or grading criteria, because the task changed every year and the entire context lived in that one developer's head.

Designing the task took him a long time, because he was also doing his regular project work. There were autotests, but manual checking still took a lot of time.

The test was hard. There was a joke (or maybe not a joke) that anyone who solved it could be hired straight away as a junior rather than as an intern.

A setup like that cannot last long. Ours did not.

No heads left

The developer who held all this context quit in the middle of the internship selection (not because of it). The campaign was delayed, but we saw it through to the end.

Before that, we had not touched the test for years, on the principle "if it works, don't touch it". But the resignation was a turning point. Several other problems had piled up as well: a large test is hard to design, hard to build, and hard to start solving. Grading drags on (first the autotests, then the code review…). The bus factor: there is nobody to take over if the author of the test is swamped, sick, or gone. To feel the full pain, look at what the last mega-test looked like…

Everything pointed the same way: the test had to be redone.

Experimental projects

We did not roll out the changes to the internship right away. We began experimenting on smaller projects, starting with the School of Industrial Development (Spur). Its test had almost the same problems as the internship test:

- It is too difficult for second- and third-year students.
- It is hard for a student to even get started on such a large task.
- Motivation is lost: we publish only part of the autotests, so a student submits a solution without suspecting that it fails the hidden ones. One typo or silly mistake and that's it: the autotests fail, the task is not accepted, and the student cannot find the error.

To solve the problem, we brought in Kontur developers who had once been students of these courses. We formulated what we wanted to check, decided to do it with six tasks (one task, one skill), built them, and published them on Ulearn, our platform with programming courses. It has all the tools needed for grading: autotests, plagiarism detection, and code review tools.

We liked the result, but the reaction of the target audience, students who are future interns, mattered more. We invited last year's Spur students to solve the new test (they fit the target audience, since they have just graduated or are still studying) and received good feedback. The results showed up in real use:

- The test was completed faster. Previously most submissions arrived close to the deadline; this time many solutions came in during the first days after publication.
- We received 70 fully solved tests and 138 tests with at least one solved task, compared with 63 solved tests the previous year.

We judged the experiment a success and moved on to changing the internship test.

What and how do we evaluate?

“We decided to test candidates' ability to write clean code, to understand someone else's code, to write code from scratch, their understanding of algorithms, and their ability to search for information in order to understand a problem and solve it. We chose these skills because they come up often in a developer's work, and because they match our idea of a good intern and a good Kontur developer,” says Kostya Volivach, one of the creators of the new test in C#.

Then we worked out the criteria for each skill. The most interesting part is checking code cleanliness. “We tried to formulate the criteria based on the principle that code is read more often than it is written,” says Lesha Pepelev, one of the creators of the test and the author of the grading criteria. Here is what we got:

- Clean code does not lie. Reading it does not turn into a detective story in which someone is trying to mislead you. The intent of clean code is expressed in the names of classes, methods, and variables, and it matches the actual behavior. If a method is called GetNode, it should return the node to the calling code, not store it in a class field. How to check yourself? Remove the bodies of all methods from the code. Can you tell from what remains how the solution works?
- Clean code can be read from any point. If the solution consists of a set of classes, each of them represents something whole. The meaning of a single class is clear without looking into other classes. How to check yourself? Remove all classes from the solution except one. Describe it by answering the question “what is it” rather than “what does it do”, without referring to the removed code. At the level of an individual class, remove all its methods and look at the properties and fields. If you can understand what the class is only from how it is used, it is time to refactor.
- Clean code has explicit dependencies. The reader must clearly understand what a method depends on and what the result of its work is. How to check yourself? Ask whether you could break the code during refactoring by reordering lines or deleting a line that seems unnecessary.
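The first and third criteria can be sketched in a few lines. This is only an illustration, written in Python for brevity (the test itself is in C#); the names `Node`, `MisleadingTree`, `HonestTree`, and `describe` are hypothetical and do not come from the actual test.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    children: list = field(default_factory=list)


class MisleadingTree:
    """The method name promises a lookup, but the body hides a side effect."""

    def __init__(self, nodes):
        self._nodes = {n.name: n for n in nodes}
        self.current = None  # hidden "result slot" the caller must know about

    def get_node(self, name):
        # The name lies: nothing is returned, the node is stored in a field.
        self.current = self._nodes[name]


class HonestTree:
    """The method does exactly what its name says."""

    def __init__(self, nodes):
        self._nodes = {n.name: n for n in nodes}

    def get_node(self, name):
        # Returns the node to the calling code and changes nothing else.
        return self._nodes[name]


def describe(node):
    # Explicit dependency: everything the function needs arrives as a
    # parameter, and its only effect is the returned string.
    return f"{node.name} ({len(node.children)} children)"


tree = HonestTree([Node("root", [Node("leaf")])])
print(describe(tree.get_node("root")))  # prints: root (1 children)
```

With `MisleadingTree`, a call to `get_node` looks like a line that can be deleted because its result is never used, yet deleting it would break whatever code reads `current` later. That is the kind of implicit dependency the third criterion asks you to look for.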

We agreed that the best solution is the one the reviewer needs the least time to understand (provided it meets all the other criteria).

Adding context and wrestling with wording

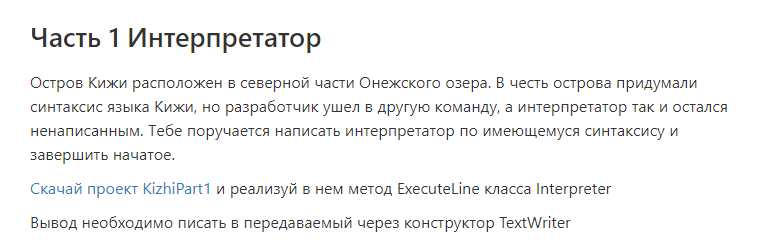

Two developers design each new test, taking three tasks each. So that the tasks do not hang in a vacuum and the statements are easier to take in, we write a background story for each one. For example, the 2020 test has a task about an interpreter:

Kizhi Island appears in it because one of the favorite languages of Kostya, the author of the task, is Kotlin, which is itself named after an island near St. Petersburg.

Another task, on refactoring, was born out of a badly working TV remote control. “I decided to combine a broken remote and a TV set in one task. The plot is this: the remote does not work because the code is badly written and contains errors. The developer was in a hurry, so the code quality is low and the review was skipped. We wrote sample code, then deliberately broke it and tangled it up,” says Kostya Volivach.

Ten developers review the tasks: they check the difficulty, the wording, and the overall concept. The metric is this: if a middle-level developer completes a task within an hour, everything is fine; if it takes longer, the task needs rework.

The hardest part of the test for its authors is the text. “Last year we realized that the interns did not understand the wording of the tasks. Back then we fixed it by hand: a question about the wording comes in, you open the task and edit the text,” recalls Kostya. This year we checked the wording in advance with both programmers and editors, so it should be better.

Ten developers grade the submissions. Each of them is responsible for checking one task across all submissions.

What the metrics show

In 2019, we received 55 submissions for the one big task. In 2020, 63 people solved all six tasks, and another 281 candidates solved at least one. In 2019, grading took more than a month; in 2020, it took two weeks.

After all the changes, things became simpler and candidates' progress became more visible, which means we can react while the test is still being solved.

If few people have solved all six tasks, we ask in chats what is holding the rest back and send out mailings. If we see that many candidates are solving quickly, we look for additional reviewers.

We do not have complete data for 2021 yet, but we can already see that candidates are moving through the test quickly. The autotests appeared only on February 15, and by that date we already had 28 people with all six tasks solved.

Want to check the wording (and see the tasks, of course)? Internship recruitment is open, and the test is on Ulearn… To see the test and start solving, join the group…