Traffic flow counting with YOLOv5 and DeepSORT on DeepStream

We want to save you time and stress when counting traffic at intersections.

Yaroslav and Nikita, our CV engineers, share a solution that in just four steps will help you get to release with minimal loss of time and money.

The article will be useful for novice CV engineers, product managers, IT product owners, marketers and project managers.

The task of counting traffic at an intersection is to determine the number of vehicles passing through it. The results can be used to manage traffic flow, improve road safety and identify problematic sections of the road.

To solve this problem, you can use various methods, for example, install motion sensors or video surveillance cameras.

Of these methods, the preferred solution is to install cameras that transmit video to a device running the appropriate software. This approach makes it possible not only to count vehicles but also to classify them, and a single camera can cover the entire intersection.

Sensors, by contrast, require equipment to be installed on each lane of the intersection, which increases both the cost of the system and the complexity of installation.

Counting traffic at an intersection with CCTV cameras requires computer vision methods. One approach is to combine an object detector with an object tracker.

What are an object detector and an object tracker, and how do they differ?

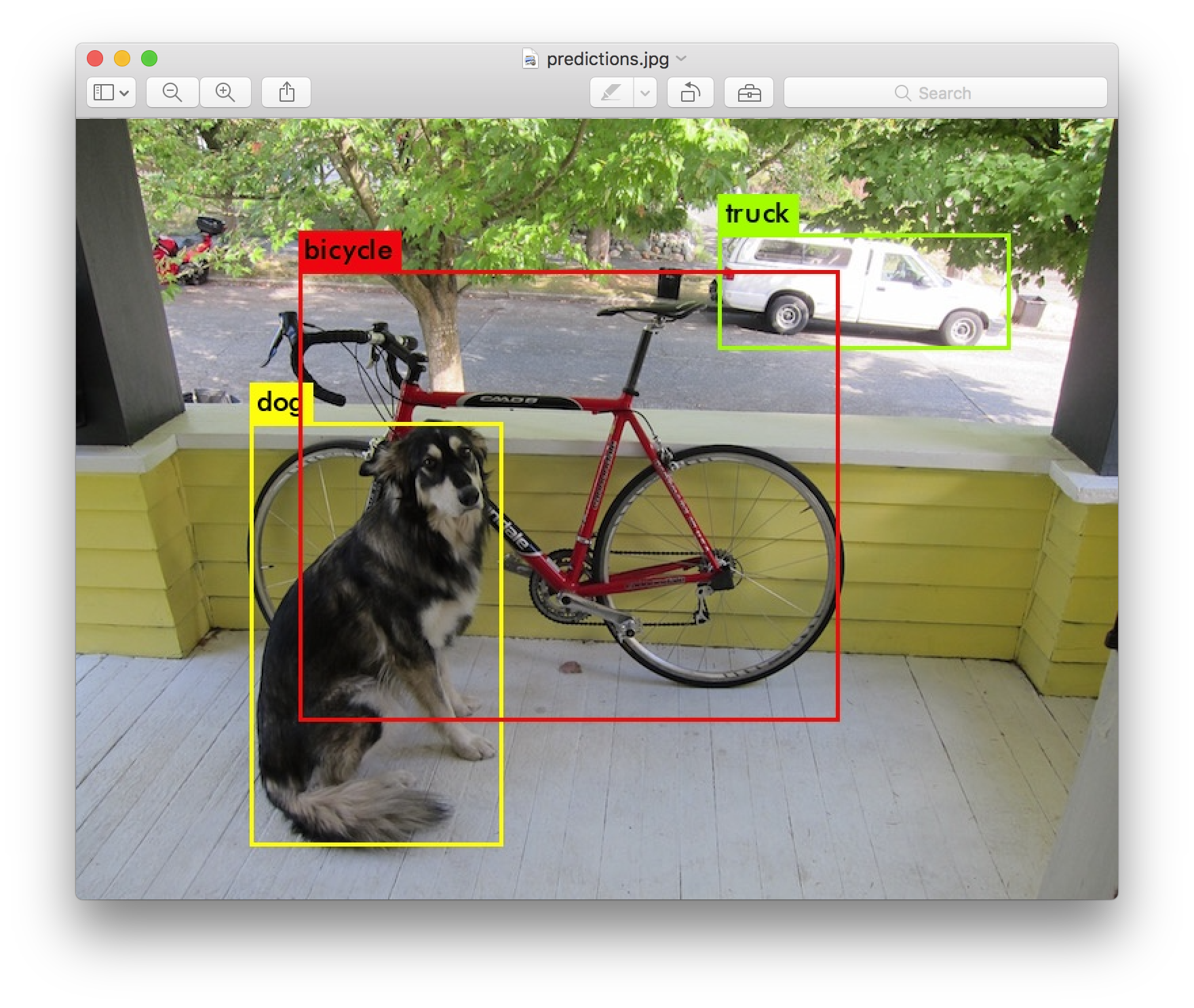

An object detector is an algorithm that allows you to automatically find objects in an image.

For example, vehicles can be detected on a camera image, their position in the frame can be calculated, and they can be classified (car, truck, bus, etc.).

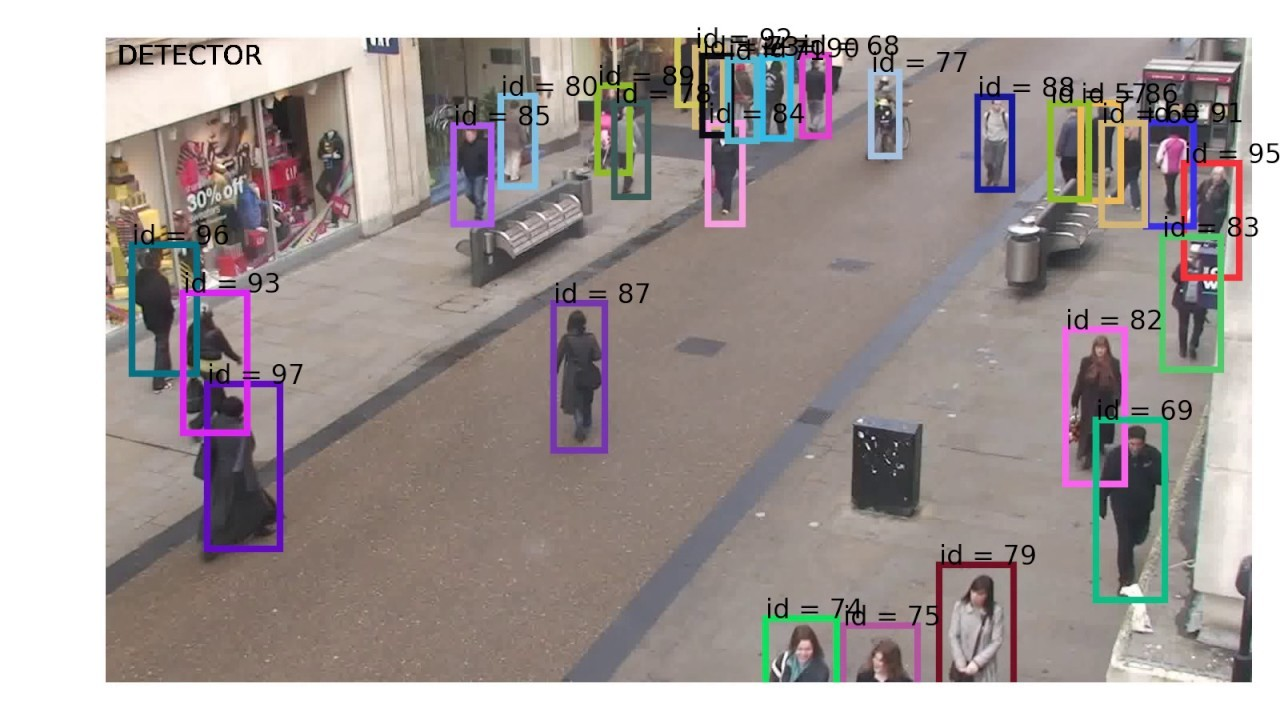

An object tracker is an algorithm that follows the movement of an object across subsequent video frames.

That is, this algorithm allows you to assign an identifier to each object in the frame and indicate where the same object is located on the next frame.
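To make the idea of "assigning an identifier and finding the same object on the next frame" concrete, here is a minimal sketch of a toy tracker that matches boxes between frames by greedy IoU overlap. It is deliberately much simpler than DeepSORT (no motion model, no appearance features), and the class name `GreedyIouTracker` and its threshold are illustrative assumptions, not part of any library.

```python
from itertools import count


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


class GreedyIouTracker:
    """Toy tracker (hypothetical): keeps the last box per ID, matches by best IoU."""

    def __init__(self, iou_threshold=0.3):
        self.iou_threshold = iou_threshold
        self.tracks = {}        # track_id -> last known box
        self._ids = count(1)    # source of fresh identifiers

    def update(self, boxes):
        """Assign an ID to every box of the current frame; returns {id: box}."""
        assigned = {}
        unmatched = dict(self.tracks)
        for box in boxes:
            best_id, best_iou = None, self.iou_threshold
            for track_id, prev_box in unmatched.items():
                score = iou(box, prev_box)
                if score >= best_iou:
                    best_id, best_iou = track_id, score
            if best_id is None:
                best_id = next(self._ids)   # a new object entered the frame
            else:
                del unmatched[best_id]      # each track is matched at most once
            assigned[best_id] = box
        self.tracks = assigned              # tracks without a match are dropped
        return assigned


tracker = GreedyIouTracker()
frame_1 = tracker.update([(0, 0, 10, 10), (50, 50, 60, 60)])
frame_2 = tracker.update([(52, 50, 62, 60), (1, 0, 11, 10)])
print(frame_1)
print(frame_2)
```

Because unmatched tracks are dropped immediately, this toy version loses an object as soon as the detector misses it for one frame, which is exactly the weakness that more elaborate trackers address.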

First, the object detector finds all vehicles in the frame. The object tracker then follows the movement of each detected vehicle. When a vehicle leaves the area of the intersection, it is counted, and the number of vehicles that have left is updated.

Object Detection

Many algorithms can solve this problem. We will not describe them in detail in this article; you can read about them here if you want. Of all the detectors presented there, YOLOv5 is, in our opinion, the most suitable option for the following reasons:

1. It can run in real time, since it is a one-stage detector with low computational cost.
2. It has a large community, which makes it easy to find a solution to almost any problem.
3. It was published in 2020, so a large number of bugs have already been found and fixed.
4. It can be deployed on any laptop, and simple instructions are available.

At the time of writing, YOLOv8 has already been released, but it does not yet have the advantages described in points 2 and 3.

To implement traffic counting, you can use pre-trained YOLOv5 weights. We do not need all 80 classes to count traffic; it is enough to select only the relevant ones (car, bus, truck, motorcycle). If a more detailed vehicle classification is needed, you have to train a custom model. Training instructions are also provided in the YOLOv5 repository. Training a custom model on a large sample of intersections will also improve vehicle detection accuracy.
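Selecting only the vehicle classes can be sketched as a small post-processing filter. The snippet below assumes the pre-trained weights use the standard COCO class indices (car 2, motorcycle 3, bus 5, truck 7) and that each detection is a row `(x1, y1, x2, y2, confidence, class_id)`, which is the column order of YOLOv5's `results.xyxy[0]`. The helper name `keep_vehicles` and the confidence threshold are illustrative assumptions.

```python
# COCO class indices of the vehicle classes we want to keep.
VEHICLE_CLASSES = {2: "car", 3: "motorcycle", 5: "bus", 7: "truck"}


def keep_vehicles(detections, min_confidence=0.4):
    """Keep only vehicle detections above a confidence threshold.

    Each detection is assumed to be (x1, y1, x2, y2, confidence, class_id).
    The numeric class ID is replaced by its readable name in the output.
    """
    return [
        (*det[:5], VEHICLE_CLASSES[int(det[5])])
        for det in detections
        if int(det[5]) in VEHICLE_CLASSES and det[4] >= min_confidence
    ]


detections = [
    (10, 20, 110, 220, 0.9, 2.0),   # car
    (0, 0, 5, 5, 0.8, 0.0),         # person, dropped
    (1, 1, 2, 2, 0.2, 7.0),         # truck below the threshold, dropped
    (3, 3, 9, 9, 0.5, 5.0),         # bus
]
vehicles = keep_vehicles(detections)
print(vehicles)
```

When the model is loaded through `torch.hub`, the same restriction can usually be applied earlier by setting `model.classes = [2, 3, 5, 7]`, so that other classes are discarded during non-maximum suppression.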

Object Tracking

There are many solutions here as well; a good article on the topic is here. We settled on DeepSORT for the following reasons:

1. It can run in real time, since the solution is relatively lightweight.
2. It is easy to get started, as there are implementations in TensorFlow and PyTorch.
3. It has a large community (not as large as YOLOv5's, but still).

To count traffic, you can also use the lighter SORT tracker, provided the detector works stably (the object is never lost while it is in the frame).

However, SORT cannot restore a track if the object was occluded by another object or lost by the detector.

Note also that DeepSORT's default re-identification model is trained on people, although the tracker can be used for other objects as well.

If you want to achieve maximum accuracy, you need to train the re-identification weights on a dataset of vehicles.

Keep in mind, however, that different implementations of the algorithm use different re-identification models.

The model training repository for the original TensorFlow-based implementation is here.

For the equivalent training with the PyTorch-based implementation, see here.

Running the solution

The implementation of the described solution consists of four main steps:

1. Receive the video stream.
2. Pass it through the YOLOv5 detector.
3. Process the resulting detections with DeepSORT.
4. Analyze the tracks produced by DeepSORT (count the vehicles that have passed).
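The data flow between the four steps can be sketched as a small loop. This is only a skeleton under stated assumptions: the function `count_traffic` and the three callables it receives are hypothetical names, and the `fake_*` stand-ins exist purely to make the example runnable without a real detector or tracker.

```python
def count_traffic(frames, detect, track, update_counts):
    """Run the four steps over an iterable of frames and return the final counts.

    detect(frame)          -> list of detections            (step 2)
    track(detections)      -> {track_id: box}               (step 3)
    update_counts(tracks)  -> running statistics, any type  (step 4)
    """
    counts = None
    for frame in frames:                  # step 1: frames from a file or camera
        detections = detect(frame)
        tracks = track(detections)
        counts = update_counts(tracks)
    return counts


# Stand-in components that only illustrate the data flow (all hypothetical).
def fake_detect(frame):
    return frame["boxes"]


def fake_track(detections):
    return {i + 1: box for i, box in enumerate(detections)}


seen_ids = set()


def fake_update_counts(tracks):
    seen_ids.update(tracks)
    return len(seen_ids)


frames = [
    {"boxes": [(0, 0, 10, 10)]},
    {"boxes": [(1, 0, 11, 10), (50, 50, 60, 60)]},
]
total = count_traffic(frames, fake_detect, fake_track, fake_update_counts)
print(total)
```

In a real setup the frames would typically come from a video file or camera stream, and the stand-ins would be replaced by YOLOv5 inference and a DeepSORT update call.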

The first three steps can be implemented from one of the many available examples (like this one) or written from scratch. The traffic counting itself can be done in different ways.

Let’s consider two of them:

1. Defining lines: a vehicle is added to the statistics when it crosses one of them.
2. Splitting the frame into zones: the statistics are updated when a vehicle crosses from one zone to another.
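Both counting approaches can be sketched with basic geometry on the centre point of each tracked box. The code below is a minimal illustration, assuming tracks arrive as `{track_id: (x1, y1, x2, y2)}`; the class names `LineCounter` and `ZoneCounter` are hypothetical, the line test is a standard segment intersection check, and the zone test is ray casting.

```python
def _orientation(p, q, r):
    """Sign of the cross product (q - p) x (r - p): +1, -1, or 0 if collinear."""
    value = (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])
    return (value > 0) - (value < 0)


def crosses_line(prev_point, point, line_start, line_end):
    """True if the step prev_point -> point strictly crosses the counting segment."""
    a = _orientation(line_start, line_end, prev_point)
    b = _orientation(line_start, line_end, point)
    c = _orientation(prev_point, point, line_start)
    d = _orientation(prev_point, point, line_end)
    return a * b < 0 and c * d < 0


def in_zone(point, polygon):
    """Ray-casting point-in-polygon test; polygon is a list of (x, y) vertices."""
    x, y = point
    inside = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y) and x < x1 + (y - y1) * (x2 - x1) / (y2 - y1):
            inside = not inside
    return inside


def _centre(box):
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2)


class LineCounter:
    """Counts each track once, when its centre crosses the given segment."""

    def __init__(self, line_start, line_end):
        self.line = (line_start, line_end)
        self.last_point = {}    # track_id -> previous centre point
        self.counted = set()    # track IDs that were already counted

    def update(self, tracks):
        for track_id, box in tracks.items():
            point = _centre(box)
            prev = self.last_point.get(track_id)
            if (prev is not None and track_id not in self.counted
                    and crosses_line(prev, point, *self.line)):
                self.counted.add(track_id)
            self.last_point[track_id] = point
        return len(self.counted)


class ZoneCounter:
    """Counts transitions of track centres between named polygonal zones."""

    def __init__(self, zones):
        self.zones = zones       # zone name -> polygon
        self.current = {}        # track_id -> zone the track was last seen in
        self.transitions = {}    # (from_zone, to_zone) -> number of vehicles

    def update(self, tracks):
        for track_id, box in tracks.items():
            point = _centre(box)
            zone = next((n for n, p in self.zones.items() if in_zone(point, p)), None)
            if zone is None:
                continue         # outside every zone: keep the last known zone
            prev = self.current.get(track_id)
            if prev is not None and prev != zone:
                key = (prev, zone)
                self.transitions[key] = self.transitions.get(key, 0) + 1
            self.current[track_id] = zone
        return self.transitions


line_counter = LineCounter((0, 50), (100, 50))
zone_counter = ZoneCounter({
    "north": [(0, 0), (100, 0), (100, 50), (0, 50)],
    "south": [(0, 50), (100, 50), (100, 100), (0, 100)],
})
for tracks in ({1: (40, 30, 60, 40)}, {1: (40, 60, 60, 70)}):
    crossed = line_counter.update(tracks)
    moved = zone_counter.update(tracks)
print(crossed, moved)
```

Lines are simpler to set up, while zone transitions also tell you where a vehicle came from and where it went, which is useful for per-direction statistics.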

After completing all four steps, we get a working solution for counting traffic flow. You can run it on any PC; the hardware determines how fast each frame is processed.

For example, on a laptop with an Intel Core i7 10th Gen processor and an Nvidia GeForce GTX 1060 Ti graphics card, this solution runs at 15-20 FPS (using YOLOv5 size S and the PyTorch implementation of DeepSORT).

Thus, a similar project can be implemented on a home laptop (a graphics card is not required).

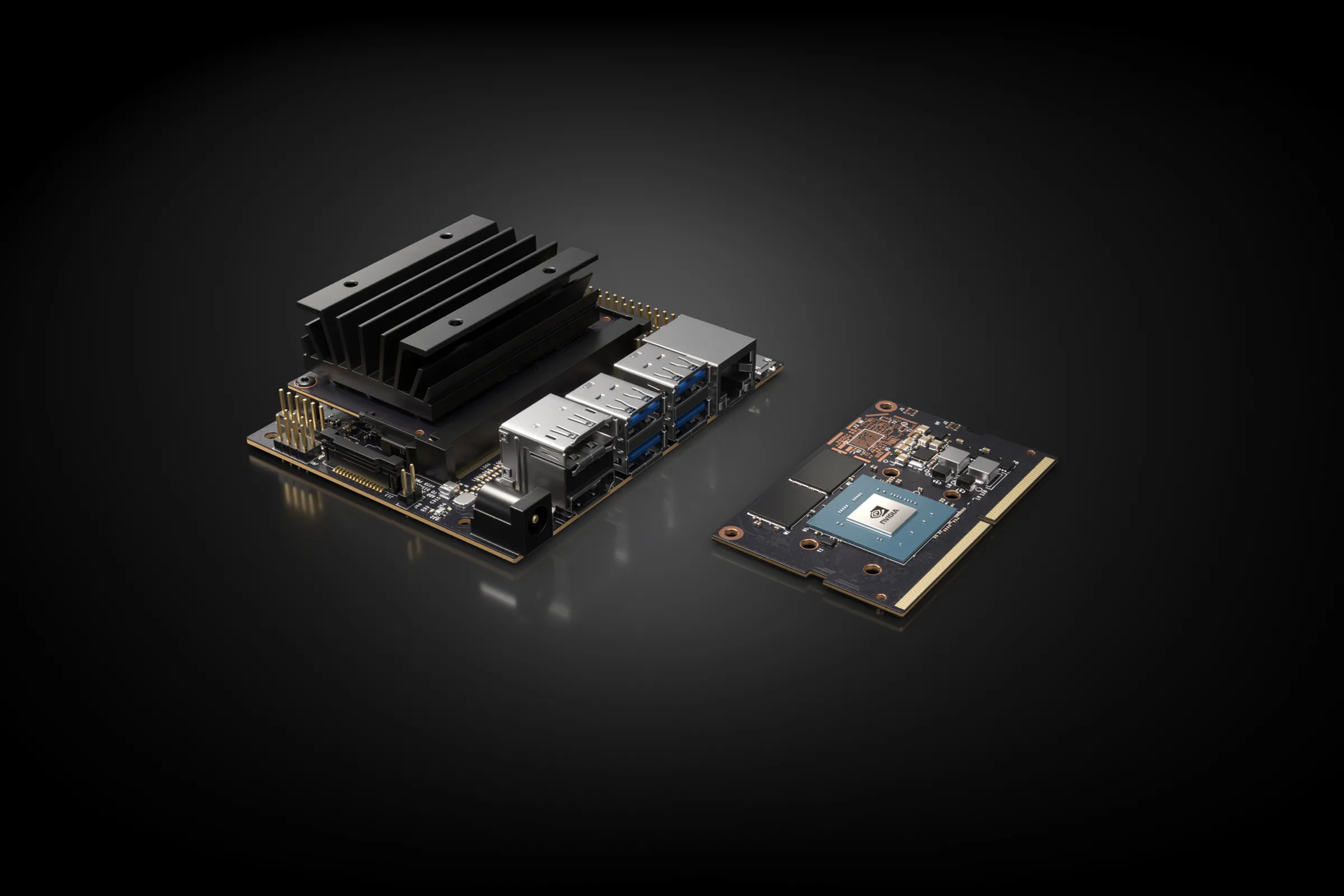

However, if you need to collect statistics at an intersection over a long period of time, dedicating a PC to this task is costly. A large number of edge platforms exist for such cases (we recommend an interesting article on the subject).

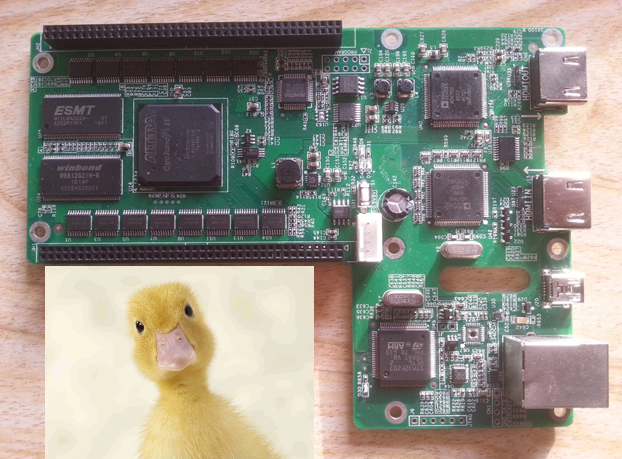

A fairly popular platform is the Nvidia Jetson line. The cheapest of them is the Jetson Nano, and this is the device we chose to test the solution described above. The Jetson Nano requires installing the OS (JetPack) following Nvidia's instructions.

Having chosen a platform and installed all the necessary dependencies and repositories, we run into the following problem at launch: the solution runs at only 1-2 FPS, which is not enough for real-time operation. The reason is that the Jetson Nano has a low-power ARM processor (4 cores at 1.5 GHz) and a GPU with 128 cores.

All of this is noticeably weaker than a typical PC. Fortunately, to speed up our method on Jetson there is DeepStream, which can be installed together with JetPack.

DeepStream

DeepStream is a set of tools written in C++ for processing video streams from a file or a camera (RTSP or USB connection), built on the open-source GStreamer framework.

The DeepStream SDK includes plugins for object detection, object tracking and other related image processing tasks (decoding, preprocessing, visualization, etc.).
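Since DeepStream plugins are GStreamer elements, a processing chain can be written as a `gst-launch` style pipeline description. The sketch below only builds such a description as a string, using the DeepStream elements `nvstreammux`, `nvinfer`, `nvtracker`, `nvvideoconvert` and `nvdsosd`. The function name and all file paths in the example are hypothetical, the pipeline has not been run here, and a real deployment will likely need a different sink and additional properties.

```python
def deepstream_pipeline(uri, infer_config, tracker_lib, tracker_config,
                        width=1280, height=720):
    """Build a gst-launch style description of a single-stream pipeline.

    All arguments are placeholders supplied by the caller; nothing is
    validated and no GStreamer code is executed here.
    """
    return " ! ".join([
        f"uridecodebin uri={uri}",                        # decoding (file or RTSP)
        "mux.sink_0 nvstreammux name=mux batch-size=1 "
        f"width={width} height={height}",                 # batching of frames
        f"nvinfer config-file-path={infer_config}",       # object detection
        f"nvtracker ll-lib-file={tracker_lib} "
        f"ll-config-file={tracker_config}",               # object tracking
        "nvvideoconvert",                                 # format conversion
        "nvdsosd",                                        # drawing boxes and labels
        "fakesink",                                       # discard output (no display)
    ])


description = deepstream_pipeline(
    uri="file:///path/to/video.mp4",             # hypothetical input
    infer_config="config_infer_primary.txt",     # hypothetical detector config
    tracker_lib="/path/to/tracker_library.so",   # hypothetical tracker library
    tracker_config="tracker_config.yml",         # hypothetical tracker config
)
print(description)
```

On a machine with DeepStream installed, a description like this could be handed to `Gst.parse_launch` from the GStreamer Python bindings, or pasted after `gst-launch-1.0` on the command line.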

There is an informative article on the topic.

DeepStream is installed according to the instructions.

Version 6.0 is the latest version available for the Jetson Nano. Installing DeepStream gives us ready-made examples that use various detectors (YOLOv3, YOLOv4, SSD, DetectNet_v2) and trackers (SORT, DeepSORT, NvDCF, IOU).

Running the example with DetectNet_v2 and IOU processes video at 25 FPS on four streams simultaneously on a Jetson Nano. This significantly exceeds the figures described earlier. The reason is that DeepStream uses optimized plugins that move as much computation as possible from the CPU to the GPU.

However, the examples use different solutions from the ones described above. DeepStream lets you configure the tracker plugin to work with DeepSORT, but it has no built-in configuration for YOLOv5. Using this repository, you can adapt the detector to run under DeepStream.

After configuring the necessary plugins to solve the problem with YOLOv5 and DeepSORT, we get 13 FPS. Of course, this is not 25 FPS, but it still allows data to be processed in real time, and it is at least a sixfold performance increase on Jetson compared with the original repositories.
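The "at least six times" figure follows directly from the numbers above: 13 FPS under DeepStream against 1-2 FPS with the original repositories. A short calculation makes the bounds and the per-frame time budget explicit; the helper names are illustrative.

```python
def speedup(new_fps, old_fps):
    """How many times faster the new frame rate is."""
    return new_fps / old_fps


def frame_budget_ms(fps):
    """Time available to process one frame at the given rate, in milliseconds."""
    return 1000.0 / fps


print(speedup(13, 2))                  # 6.5, against the upper bound of 2 FPS
print(speedup(13, 1))                  # 13.0, against the lower bound of 1 FPS
print(round(frame_budget_ms(13), 1))   # 76.9 ms per frame at 13 FPS
print(frame_budget_ms(25))             # 40.0 ms per frame at 25 FPS
```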

DeepStream can also be used on any device with an Nvidia graphics card and Ubuntu 20.04. To simplify working with DeepStream plugins, a repository has been implemented that lets you configure and run plugins using Python.

Similar devices

Of course, Jetson is the leader among its competitors, as it comes with clear documentation and many examples. However, sanctions restrictions affecting the Russian Federation make Nvidia products difficult to purchase.

Unfortunately, there are no Russian analogues comparable in terms of capabilities. But there are several manufacturers from China whose products you can buy. Here are two of them:

Huawei Atlas 500 (other models are available): a device with an ARM-based processor and a neural network processor. It costs several times more, but it should be regarded as a server for processing multiple video streams. The documentation, however, leaves much to be desired. A repository with examples is here.

Rockchip is a company that manufactures microprocessors and integrated circuits. Among their devices is a series with a built-in NPU (a neural network processor that speeds up calculations). One such device is the Rockchip RK3588. The documentation has problems here too, but many people praise the device itself.

If you have examples of edge devices with clear documentation and without sanctions restrictions, we will be glad to read your comments 🙂

Let us know if you found this review interesting, and we will write them more often 🙂