The math behind the rolling shutter phenomenon

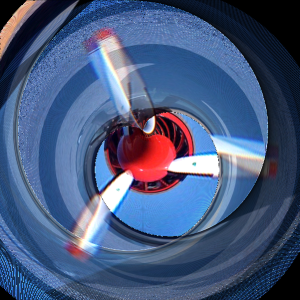

I remember once seeing the photo above on Flickr and racking my brain trying to work out what was wrong with it. The propeller was spinning while the camera's sensor was being read out; in other words, there was movement during the exposure. This is really worth thinking about, so let's think it through together.

Many modern digital cameras use a CMOS sensor, also known as an active pixel sensor, as their light-sensitive device. It works by accumulating electric charge when light falls on it. After a certain time, the exposure time, the charge is transferred row by row back to the camera for further processing. The camera then scans across the image, storing rows of pixels one at a time. The image will be distorted if anything moves during the read-out. To illustrate, imagine photographing a rotating propeller. In the animations below, the red line marks the current read-out position, and the propeller keeps rotating while it is being read. The part under the red line is the resulting image.
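The row-by-row read-out described above can be imitated in a few lines. This is only a minimal sketch, not the code behind the animations: the `propeller` shape, the blade width and the ASCII rendering are all assumptions made for illustration, and the top row is assumed to be read first.

```python
import math

def propeller(x, y, rotation, blades=3, half_width=0.12):
    """True if (x, y) lies on a blade of a unit-radius propeller
    that has been turned by `rotation` radians (hypothetical shape)."""
    r = math.hypot(x, y)
    if r > 1.0:
        return False
    theta = math.atan2(y, x) - rotation
    sector = 2 * math.pi / blades
    # Angular offset to the nearest blade centreline, in [-sector/2, sector/2).
    offset = (theta + sector / 2) % sector - sector / 2
    # Compare arc length to the blade half-width (roughly constant-width blades).
    return abs(offset) * r < half_width

def rolling_shutter_frame(size, revs_per_exposure, blades=3):
    """Render the propeller one row at a time while it keeps rotating."""
    rows = []
    for i in range(size):
        t = i / (size - 1)                      # fraction of the read-out elapsed
        rotation = 2 * math.pi * revs_per_exposure * t
        y = 1.0 - 2.0 * i / (size - 1)          # top row is y = +1
        row = ""
        for j in range(size):
            x = -1.0 + 2.0 * j / (size - 1)
            row += "#" if propeller(x, y, rotation, blades) else "."
        rows.append(row)
    return rows

if __name__ == "__main__":
    for revs in (0.0, 0.1, 1.0):
        print(f"{revs} revolutions per exposure")
        print("\n".join(rolling_shutter_frame(31, revs)))
```

With `revs_per_exposure=0.0` every row sees the same propeller, so the frame is undistorted; larger values bend the blades because each row is sampled at a different rotation angle.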

The first propeller makes 1/10 revolution during the exposure:

The image is a little distorted, but nothing critical. Now the propeller will move 10 times faster, making a full rotation during the exposure:

This already looks like the picture we saw at the beginning. Five rotations per exposure:

This is getting a bit out of hand. Let’s have some fun and see how different objects look at different numbers of rotations per exposure.

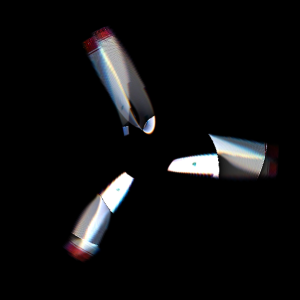

Exactly the same propeller:

Propeller with large blades:

Car wheel:

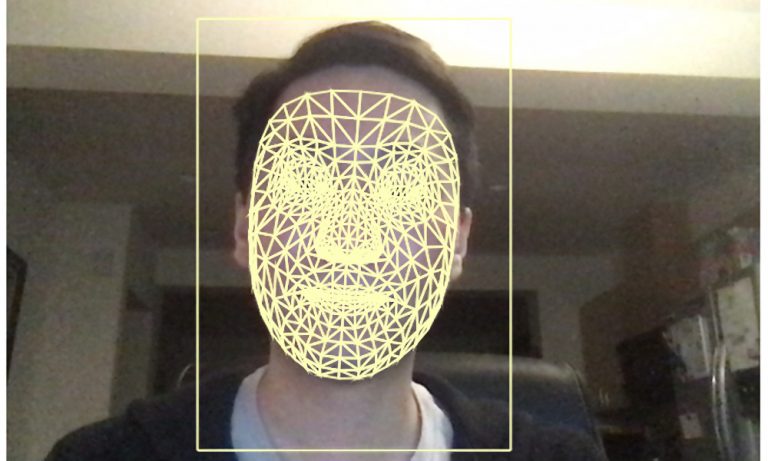

We can think of the rolling shutter effect as a coordinate transformation from the “object space” of the real object into the “image space” of the distorted object. The animation below shows what happens to a Cartesian coordinate grid as the number of rotations per exposure increases. At low speeds the deformation is negligible. As the number approaches one, each side of the grid is wrapped in turn into the right-hand side of the image. The transformation is rather complicated to look at, but simple to understand.

Let the image be I(r, θ) and the real (rotating) object be f(r, θ), where (r, θ) are 2D polar coordinates. Polar coordinates suit this problem because the objects are rotating.

The object rotates with angular frequency ω, and the shutter moves vertically across the image with speed v. At position (r, θ) in the image, the distance the shutter has travelled since the beginning of the exposure is y = r sin θ, so the elapsed time is (r sin θ) / v. During this time, the object has turned by (ω / v) r sin θ radians. So we get

I(r, θ) = f(r, θ + (ω / v) r sin θ),

which is the required transformation. The coefficient ω / v is proportional to the number of rotations per exposure and parameterizes the transformation.
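As a rough sketch of this transformation, the function below maps a point in image space to the object-space point it samples. The names `object_coords` and `k_from_revs` are hypothetical. The conversion from rotations per exposure to ω / v is also an assumption: it supposes the shutter crosses a frame of height 2 (y from −1 to 1) in exactly one exposure, which gives ω / v = πN for N rotations.

```python
import math

def object_coords(x, y, k):
    """Image-space point (x, y) -> object-space point, with k = omega / v.

    Implements I(r, theta) = f(r, theta + k * r * sin(theta)).
    """
    r = math.hypot(x, y)
    theta = math.atan2(y, x)
    theta_obj = theta + k * r * math.sin(theta)   # note r * sin(theta) == y
    return r * math.cos(theta_obj), r * math.sin(theta_obj)

def k_from_revs(revs_per_exposure, frame_height=2.0):
    """Assumed relation: the shutter covers frame_height in one exposure."""
    return 2 * math.pi * revs_per_exposure / frame_height

if __name__ == "__main__":
    k = k_from_revs(0.5)
    for point in [(0.5, 0.0), (0.0, 1.0), (0.0, -1.0)]:
        print(point, "->", object_coords(*point, k))
```

Points on the line y = 0 are unchanged, and every point keeps its radius: only the angle is shifted, by an amount proportional to y.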

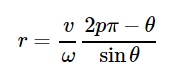

To get a deeper understanding of the apparent shapes of the propellers, consider an object consisting of P propeller blades, where f is nonzero only for

θ = 2π / P, 4π / P, …, 2π, that is, θ = 2pπ / P for 1 ≤ p ≤ P.

The image I is then nonzero for θ + (ω / v) r sin θ = 2pπ / P, or

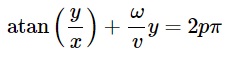

In Cartesian coordinates, substituting y = r sin θ and θ = arctan(y / x) (on the appropriate branch), this becomes

y = (v / ω) (2pπ / P − arctan(y / x)),

which helps explain why the propellers take on an S-shape: it is just an arctangent function in image space. Cool. Below I have plotted this function for a set of five propeller blades with slightly different initial offsets, as you can see in the playback. They look very similar to the shapes in the animations above.
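To trace one of these blade curves numerically, it is convenient to step through the rows y rather than solve for r directly. From the condition θ + (ω / v) r sin θ = 2pπ / P with y = r sin θ, blade p sits at angle θ = 2pπ / P − (ω / v) y on row y, and the point on that row is at x = y cos θ / sin θ. The sketch below assumes idealised, infinitely thin blades of unit length; `blade_curve` is a hypothetical helper, not the code used for the plots.

```python
import math

def blade_curve(p, P, k, samples=200, radius=1.0):
    """Points (x, y) where blade p of P appears in the image, k = omega / v."""
    points = []
    for i in range(samples + 1):
        y = -radius + 2 * radius * i / samples
        theta = 2 * math.pi * p / P - k * y   # blade angle when row y is read
        s = math.sin(theta)
        if abs(s) < 1e-9:
            continue                           # blade is parallel to the row
        r = y / s
        if 0 < r <= radius:                    # keep points on the blade itself
            points.append((r * math.cos(theta), y))
    return points

if __name__ == "__main__":
    for p in range(1, 4):
        curve = blade_curve(p, 3, math.pi)
        print(f"blade {p}: {len(curve)} points, first few {curve[:3]}")
```

Plotting the returned points for each p should give curved blades of the kind described above; how closely they match the figures depends on the assumed value of ω / v.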

Now that we know a little more about the process, can we fix the damaged photos? Taking one of the images above, I can draw a line through it, rotate it back and paste those pixels into a new image. In the animation below, I scan across the image on the left, along the red line, and rotate the pixels on that line into a new image. So we can recover the image of the real object, even if an annoying rolling shutter has ruined your photo.
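The scan-and-rotate-back idea can be sketched as a forward mapping: every pixel on row y is rotated by (ω / v) y about the center and pasted into a new image. This is a simplified illustration under several assumptions: a square binary image stored as strings, the rotation center at the middle of the frame, a known value of k = ω / v, and nearest-pixel rounding (which can leave small holes). Only foreground pixels are pasted, so that background pixels do not overwrite them.

```python
import math

def undo_rolling_shutter(rows, k, foreground="#", background="."):
    """Rotate each row of a square binary image back by k * y radians."""
    n = len(rows)
    restored = [[background] * n for _ in range(n)]
    for i, row in enumerate(rows):
        y = 1.0 - 2.0 * i / (n - 1)             # top row is y = +1
        for j, pixel in enumerate(row):
            if pixel != foreground:
                continue
            x = -1.0 + 2.0 * j / (n - 1)
            r = math.hypot(x, y)
            angle = math.atan2(y, x) + k * y    # object-space angle of this pixel
            xo, yo = r * math.cos(angle), r * math.sin(angle)
            jo = round((xo + 1.0) / 2.0 * (n - 1))
            io = round((1.0 - yo) / 2.0 * (n - 1))
            if 0 <= io < n and 0 <= jo < n:
                restored[io][jo] = foreground
    return ["".join(r) for r in restored]

if __name__ == "__main__":
    distorted = ["..#..", ".....", ".....", ".....", "....."]
    print("\n".join(undo_rolling_shutter(distorted, math.pi / 2)))
```

For a real photo one would more likely loop over the output pixels and interpolate from the input, which avoids holes, but that requires solving the angle equation numerically for each pixel.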

Ah, if I were better at Photoshop, I would have extracted the propeller from the original Flickr photo, corrected it and put it back into the photo. I think I know what I’ll be doing in the future.

If you want to know the actual number of blades in the photo at the beginning of the post, and the rotation speed, you can read the great Tumblr post by Daniel Walsh, in which he gives a mathematical explanation.

He argues that we can work out the number of blades by subtracting the number of “lower” blades from the number of “upper” ones, which gives three blades for that picture. We also know that the propeller rotates approximately twice during the exposure, so if we try to “undo” the rotation at several different speeds, we get something like this:

I needed to work out where the center of the propeller was, so I drew a circle; the center should be somewhere close by. Unfortunately, one blade is missing, but there is still enough information in the image.

I looked for the setting at which everything overlaps the most; at that rotation speed (2.39 revolutions per exposure), here’s what the original image and blades look like: