Scientists create Lamphone: with a photodiode and a telescope, researchers turn light bulbs into eavesdropping “bugs”

A bit about the history of photoacoustic wiretapping

This type of eavesdropping traces back to last-century research by Lev Theremin, an engineer at the closed Tupolev design bureau and a pioneer of electronic music. In the mid-1940s he developed the “Buran” system, which used reflected infrared light to pick up the vibrations of window panes. The same principle later became the basis of laser microphones. The method was not perfect, however: sound-absorbing barriers between the sound source and the window kept the glass from vibrating strongly enough to recover any useful information.

A laser microphone from the late 1980s

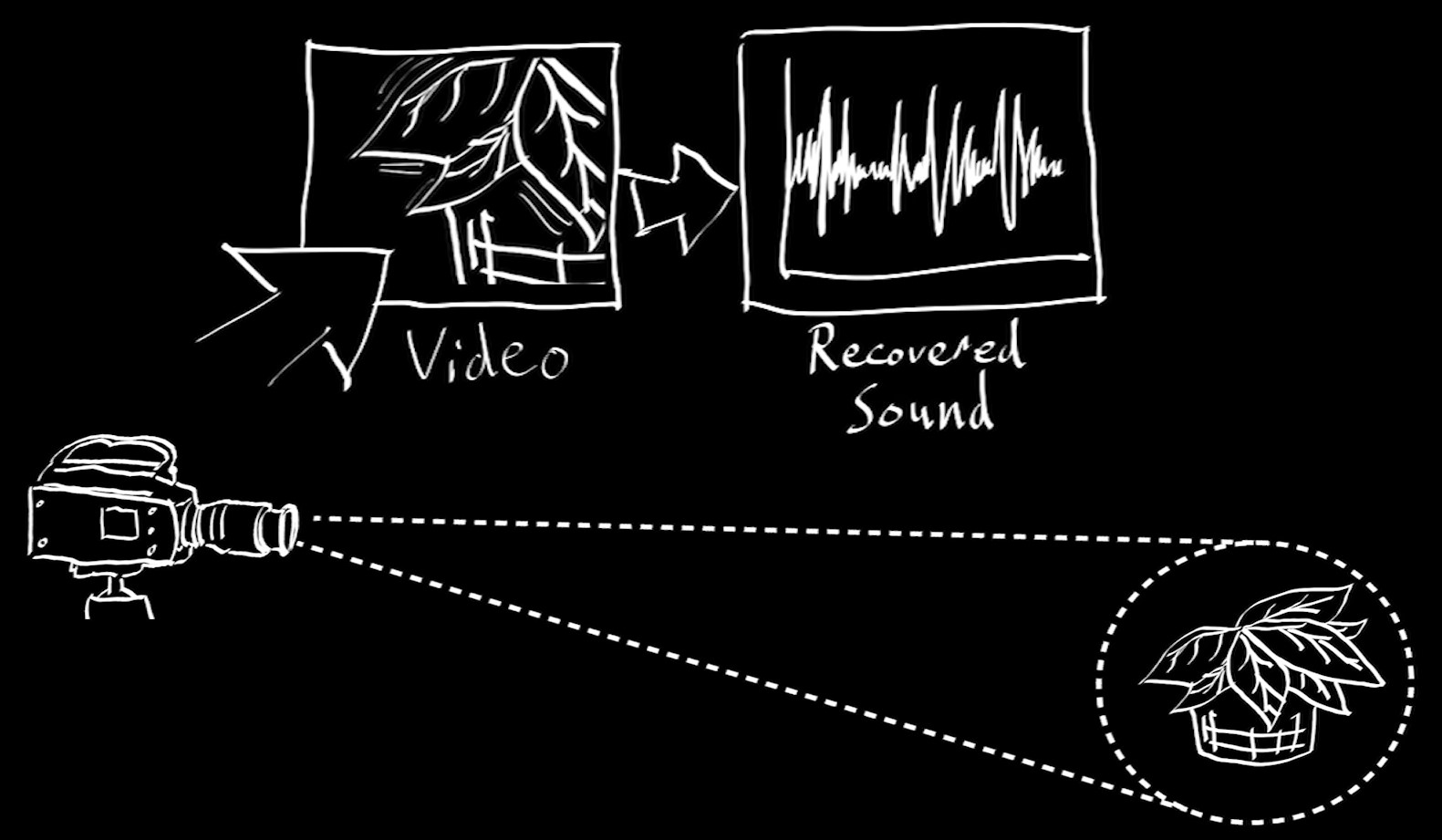

The advent of high-resolution video cameras with high frame rates opened up new possibilities for eavesdropping. Sound waves striking the surface of an object cause vibrations that are imperceptible to the eye.

A high-resolution camera with a frame rate of 60 fps or more can pick them up. Three years ago, a group of researchers from the Massachusetts Institute of Technology converted video shot at 2200 fps into the sound of a melody that was playing in the room during filming. It was later found that the method also works, though less effectively, at a frame rate of just 60 fps.

This method also had limitations. First, cameras with high and ultra-high frame rates are expensive. Second, footage shot at such frame rates is slow to process: the large video files take a long time to analyze, and that time depends directly on the available hardware. This limits the use of the method in real time.

In addition, at current camera resolutions the method is practically unusable at long range: the camera has to be within 5-6 meters of the object.

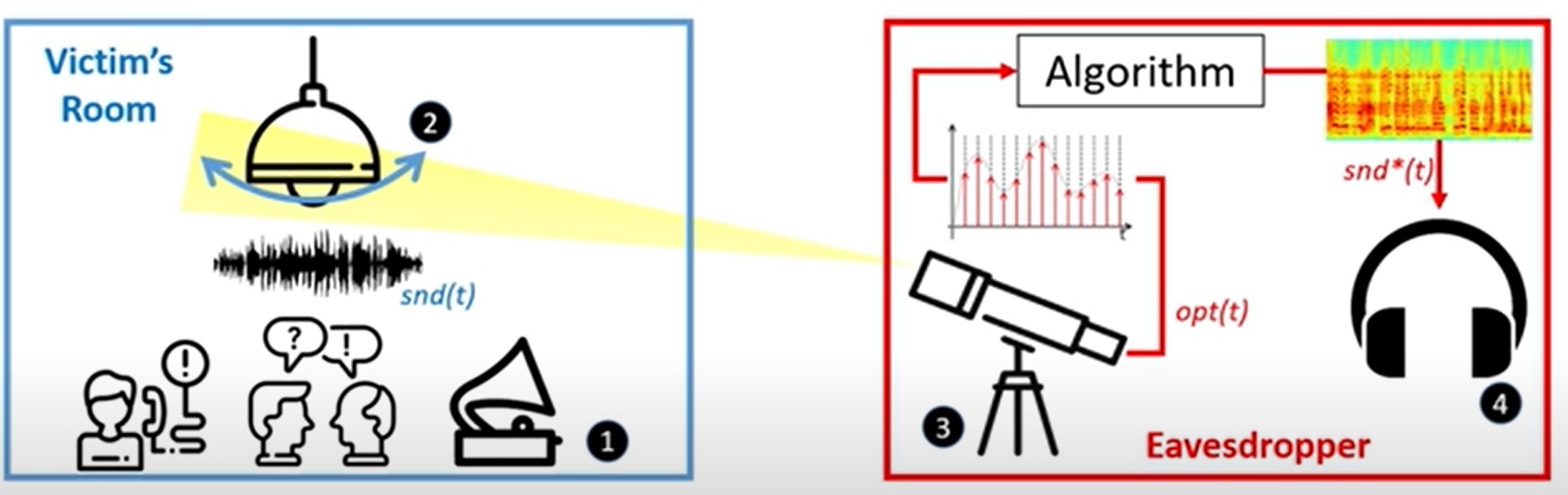

The essence of the new method

Israeli scientists decided to improve on the Americans’ method: they used a telescope to focus on a specific object and replaced the expensive camera with an inexpensive photodiode. Air pressure fluctuations during a conversation cause microvibrations of the bulb, which in turn produce changes in illumination that are invisible to the eye but significant for sensitive equipment. The light is collected by the telescope and converted by the photodiode into an electrical signal. An analog-to-digital converter digitizes the signal, which is recorded as a spectrogram, processed by an algorithm written by the researchers, and then converted into sound.
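To make the “signal to spectrogram” step more concrete, here is a minimal sketch in plain Python. It is not the researchers’ code: the sample rate, frame length, and the synthetic signal (a strong 100 Hz component plus a weaker 300 Hz tone standing in for the digitized photodiode voltage) are all assumptions chosen for illustration, and the function names are hypothetical.

```python
import cmath
import math

def dft_magnitudes(frame):
    """Return normalized DFT magnitudes for bins 0..N/2 of one frame."""
    n = len(frame)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(frame))
        mags.append(abs(acc) / n)
    return mags

def spectrogram(samples, frame_len, hop):
    """Cut the signal into overlapping Hann-windowed frames, one spectrum per frame."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / frame_len)
              for i in range(frame_len)]
    frames = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = [samples[start + i] * window[i] for i in range(frame_len)]
        frames.append(dft_magnitudes(frame))
    return frames

# Synthetic stand-in for the digitized photodiode voltage (assumed values).
SAMPLE_RATE = 2000  # Hz, hypothetical
FRAME_LEN = 200     # samples per frame, i.e. 10 Hz per frequency bin
samples = [
    1.0 * math.sin(2 * math.pi * 100 * t / SAMPLE_RATE)    # lamp flicker
    + 0.2 * math.sin(2 * math.pi * 300 * t / SAMPLE_RATE)  # nearby sound
    for t in range(1000)
]

spec = spectrogram(samples, frame_len=FRAME_LEN, hop=100)
bin_hz = SAMPLE_RATE / FRAME_LEN
strongest = max(range(len(spec[0])), key=lambda k: spec[0][k])
print("frames:", len(spec), "strongest component (Hz):", strongest * bin_hz)
```

Each row of `spec` is one time slice and each column one frequency bin. In this toy signal the flicker component dominates every slice, which is why a real pipeline has to deal with it before looking for speech.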

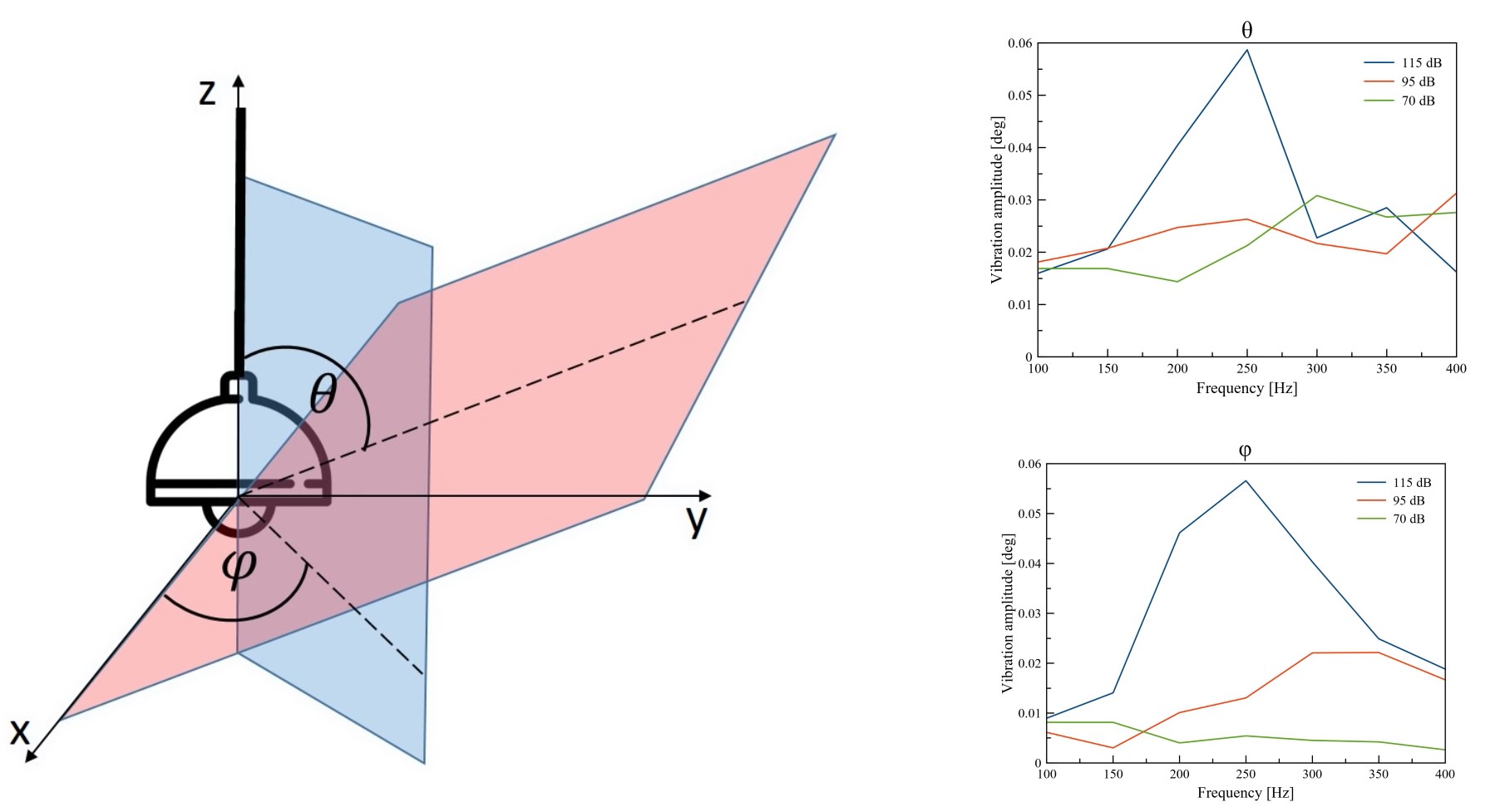

The researchers verified the method in a laboratory experiment: they attached a gyroscope to a light bulb and played sounds at frequencies from 100 to 400 Hz one centimeter from it. The bulb’s oscillations were small, ranging from 0.005 to 0.06 degrees (a displacement of 300 to 950 microns on average in the vertical and horizontal planes), but they differed noticeably depending on the frequency and sound pressure level. In other words, the bulb does vibrate, if only barely, in response to sound waves propagating nearby, and its oscillations depend on the characteristics of those waves.

Measurement and experiment

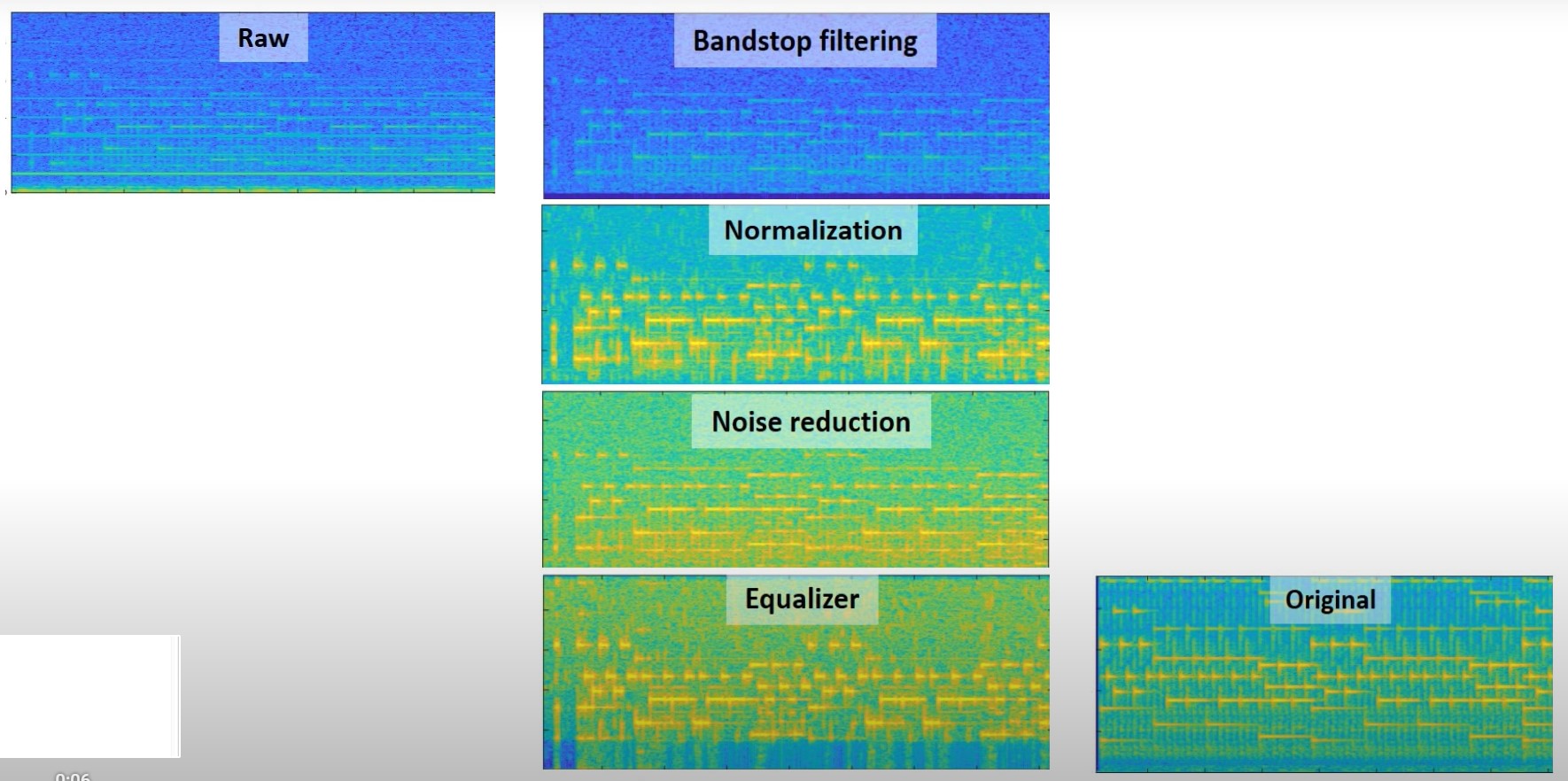

Measurements of the photodiode output showed how the signal changes as the bulb oscillates, at different distances between the bulb and the telescope. With 24-bit analog-to-digital conversion, a 300-micron oscillation of the bulb produces a voltage change of 54 microvolts, which is enough to capture the test spectrum (100–400 Hz) at a considerable distance (several tens of meters) through the telescope’s optics. In addition, when there is no sound, the spectrogram of the optical signal from the bulb shows a peak at 100 Hz, the bulb’s flicker frequency. The algorithm takes this feature into account.
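A quick back-of-the-envelope calculation shows why the bit depth of the converter matters for a 54-microvolt signal. The article does not state the converter’s input range, so the 10 V full-scale span below is purely an assumption, and `adc_step_volts` is a hypothetical helper name.

```python
def adc_step_volts(full_scale_volts, bits):
    """Smallest voltage difference an ideal ADC with the given bit depth can resolve."""
    return full_scale_volts / (2 ** bits)

FULL_SCALE_V = 10.0  # assumed input span; not given in the article
SIGNAL_V = 54e-6     # voltage change reported for a 300-micron oscillation

for bits in (16, 24):
    step = adc_step_volts(FULL_SCALE_V, bits)
    print(f"{bits}-bit: step = {step * 1e6:.2f} uV, "
          f"signal spans {SIGNAL_V / step:.1f} steps")
```

Under this assumption a 16-bit converter has a step of roughly 153 microvolts, larger than the whole signal, while a 24-bit converter has a step of about 0.6 microvolts, so the 54-microvolt change spans roughly 90 quantization levels. With a different input range the numbers shift, but the gap between the two bit depths stays the same factor of 256.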

The algorithm works in stages. First, it filters out frequencies that carry no information, such as the flicker frequency, and then isolates the part of the spectrum that corresponds to speech. Next, it removes the spectral signatures of extraneous noise, much as the standard denoisers in voice recorders and studio recording tools do. The spectrogram processed in this way is then converted into sound by a third-party program.
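The stages described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the researchers’ algorithm: it uses a simple frequency-domain notch, a band limit, and plain spectral subtraction in place of whatever filters and denoiser they actually used, and the band edges, noise floor, sample rate, and test signal are all made up for the example.

```python
import cmath
import math
import random

def dft(x):
    """Plain O(n^2) discrete Fourier transform, adequate for a short demo signal."""
    n = len(x)
    return [sum(x[i] * cmath.exp(-2j * math.pi * k * i / n) for i in range(n))
            for k in range(n)]

def idft(spectrum):
    """Inverse DFT; the real part is returned because the cleaned spectrum stays symmetric."""
    n = len(spectrum)
    return [(sum(spectrum[k] * cmath.exp(2j * math.pi * k * i / n)
                 for k in range(n)) / n).real for i in range(n)]

def clean_spectrum(spectrum, sample_rate, flicker_hz, notch_hz, band, noise_floor):
    """Notch out the flicker, keep only the chosen band, subtract a flat noise floor."""
    n = len(spectrum)
    cleaned = []
    for k, value in enumerate(spectrum):
        freq = min(k, n - k) * sample_rate / n           # upper bins mirror the lower ones
        in_notch = abs(freq - flicker_hz) <= notch_hz    # stage 1: flicker frequency
        in_band = band[0] <= freq <= band[1]             # stage 2: band of interest
        magnitude = max(abs(value) - noise_floor, 0.0)   # stage 3: spectral subtraction
        if in_notch or not in_band or magnitude == 0.0:
            cleaned.append(0j)
        else:
            cleaned.append(value / abs(value) * magnitude)  # keep the original phase
    return cleaned

SAMPLE_RATE = 2000  # Hz, hypothetical
N = 400             # 0.2 s of signal, 5 Hz per bin
rng = random.Random(0)
signal = [
    1.0 * math.sin(2 * math.pi * 100 * i / SAMPLE_RATE)    # lamp flicker
    + 0.5 * math.sin(2 * math.pi * 20 * i / SAMPLE_RATE)   # slow drift outside the band
    + 0.3 * math.sin(2 * math.pi * 300 * i / SAMPLE_RATE)  # stand-in for the sound of interest
    + rng.uniform(-0.01, 0.01)                             # broadband sensor noise
    for i in range(N)
]

cleaned = clean_spectrum(dft(signal), SAMPLE_RATE, flicker_hz=100, notch_hz=5,
                         band=(80, 900), noise_floor=1.0)
restored = idft(cleaned)
peak_bin = max(range(N // 2), key=lambda k: abs(cleaned[k]))
print("dominant frequency after cleaning (Hz):", peak_bin * SAMPLE_RATE / N)
```

In this toy case the flicker and the drift are stronger than the tone of interest before cleaning, and only the 300 Hz tone survives afterwards. Real speech is broadband and overlaps the flicker frequency, so a practical denoiser has to be considerably more careful than this flat threshold.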

In its current version, the Lamphone built by the scientists can recover speech and music in real time from a room located 25 meters from the observation point. This was demonstrated in the following experiment: a setup with an amateur telescope with a 20-cm lens was placed on a bridge, 25 meters from the window of the room containing the lamp. Near the lamp, The Beatles’ “Let It Be” and Coldplay’s “Clocks” were played, along with a recording of a speech by Donald Trump containing the phrase “We will make America great again”.

As a result, the audio recovered from the spectrograms was quite intelligible: the songs were readily identified by Shazam, and the words were recognized by Google’s open speech recognition API.

The bottom line

The device works. Nothing like it had been reported before. It will make the work of intelligence services somewhat easier, and anyone with something to fear should take new precautions. It is not yet clear whether the system can work with anything other than a light source that is free to move. The Israeli researchers plan to continue their work.