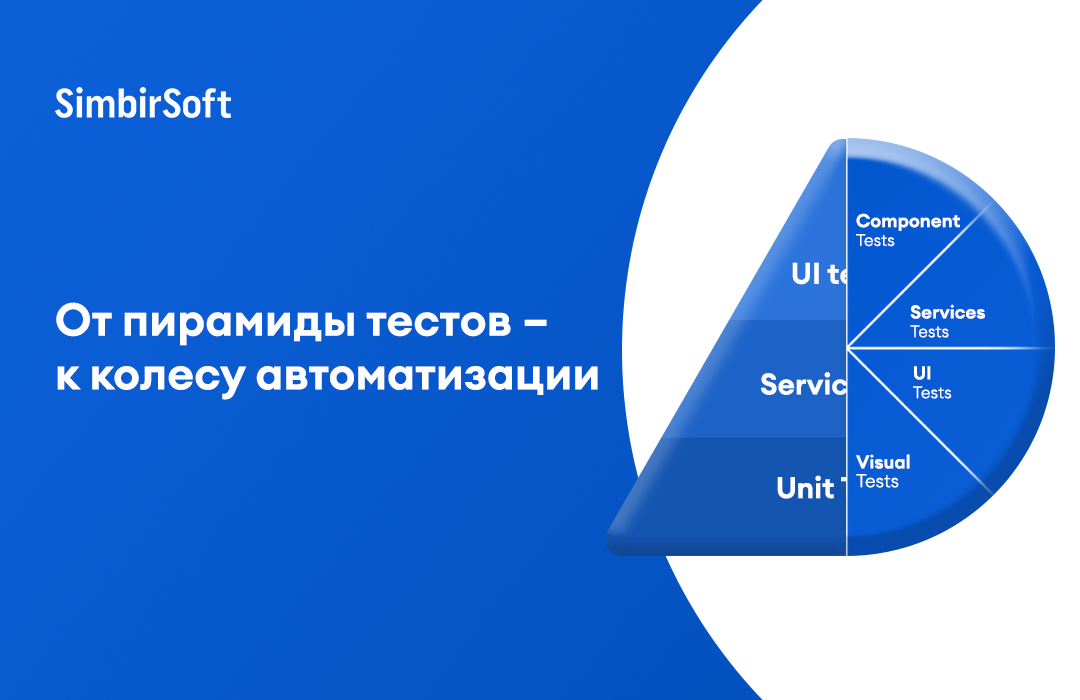

From the test pyramid to the automation wheel: which checks a project needs

Test automation is usually most needed in large applications with many business functions, especially where CI/CD and regular releases are in place. We discussed this in more detail in the article “What Test Automation Gives”.

With the kind permission of Kristin Jackvony, author of the blog Think Like a Tester and of a number of popular materials on testing, we have translated her article “Rethinking the Pyramid: The Automation Test Wheel”. At the end of the article, we look at example checks from the practice of our test automation specialists (SDET).

Everyone who has worked with autotests has heard of the autotest pyramid. It usually has three layers: UI tests, API tests and unit tests. The bottom layer, the widest, is occupied by unit tests, which means there should be more of them than of any other kind. The middle layer is API tests; the idea is that there should be fewer of them than unit tests. Finally, the top layer is UI tests. There should be fewer of these than of the rest, because they take longer to run. In addition, UI tests are the most unstable.

An example of an autotest pyramid on Habr. The approach is described in Mike Cohn's book “Succeeding with Agile: Software Development Using Scrum”.

From my point of view, this pyramid has two problems:

- it does not describe all types of autotests;

- it implies that the number of tests is the best indicator of the quality of test coverage.

I suggest a different approach to automated testing: the Automation Wheel.

Each type of test can be thought of as a spoke in a wheel. No spoke is more important than the others; they are all necessary. The size of a wheel sector does not indicate the number of tests that need to be automated. What matters is that checks exist in every direction in which your project requires quality.

Unit tests

Unit tests are the smallest possible autotests. Each one checks the behavior of a single function or method. For example, for a method that checks whether a number is 0, you could write the following unit tests:

- with argument 0, the method returns True;

- with argument 1, the method returns False;

- with a string argument, the method returns the expected error.
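The three checks above can be sketched with Python's standard `unittest` module. The function `is_zero` and the choice of `TypeError` as the "expected error" are assumptions made for illustration; the article does not name either.

```python
import unittest


def is_zero(number):
    """Return True if number equals 0.

    Hypothetical function under test; raising TypeError for non-numeric
    input is an assumption about what the "expected error" is.
    """
    if isinstance(number, bool) or not isinstance(number, (int, float)):
        raise TypeError("is_zero expects a number")
    return number == 0


class IsZeroTests(unittest.TestCase):
    def test_zero_returns_true(self):
        self.assertTrue(is_zero(0))

    def test_one_returns_false(self):
        self.assertFalse(is_zero(1))

    def test_string_raises_expected_error(self):
        with self.assertRaises(TypeError):
            is_zero("0")


def run_is_zero_tests():
    """Run the suite and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(IsZeroTests)
    result = unittest.TestResult()
    suite.run(result)
    return result
```

Each test touches only the function itself, with no network or database, which is what keeps unit tests fast and independent.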

Because unit tests do not depend on other services and run quickly, they are an effective quality control tool. Most often they are written by the same developer who writes the function or method under test. Every method or function should be covered by at least one unit test.

Component tests

These tests check the various services that the code depends on. For example, if we use the GitHub API, we can write a component test to verify that a request to this API receives the expected response. A component test can also be as simple as pinging a server or checking that the database is available. There should be at least one component test for each service the code uses.
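A database availability check of this kind might look like the sketch below. It assumes an in-memory SQLite database as a stand-in for the real one; the helper names (`database_is_available`, `connect_in_memory`, `connect_unreachable`) are hypothetical.

```python
import sqlite3


def database_is_available(connect):
    """Return True if a trivial query succeeds on a fresh connection.

    `connect` is any zero-argument callable returning a sqlite3 connection.
    """
    try:
        connection = connect()
    except sqlite3.Error:
        return False
    try:
        return connection.execute("SELECT 1").fetchone() == (1,)
    except sqlite3.Error:
        return False
    finally:
        connection.close()


def connect_in_memory():
    # Assumption: an in-memory SQLite database stands in for the real one.
    return sqlite3.connect(":memory:")


def connect_unreachable():
    # Simulates a database that cannot be reached.
    raise sqlite3.OperationalError("unable to open database file")
```

Passing the connection factory in as an argument lets the same check run against a real database in a test environment and against a stand-in locally.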

Note: at SimbirSoft, component tests cover not only the responses of third-party services but also the individual modules of a system, if its architecture consists of such modules.

Service tests

These tests check the availability of the web services that the application accesses. Most often, the application works with them through API requests.

For example, for an API with POST, GET, PUT and DELETE requests, we can write autotests that check each request type. These tests should cover both positive scenarios and negative ones, in which an incorrect request returns the corresponding error code.
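As a rough illustration, the sketch below starts a tiny stand-in API on localhost using only the Python standard library and sends one positive and one negative request of each type. The `/items` resource, the status codes chosen and all helper names are assumptions; a real suite would target the actual service instead.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

ITEMS = {}  # in-memory store of the hypothetical stand-in service


class ItemHandler(BaseHTTPRequestHandler):
    """Tiny stand-in API: POST /items, GET/PUT/DELETE /items/<id>."""

    def _reply(self, status, payload):
        body = json.dumps(payload).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def _item_id(self):
        return self.path.rstrip("/").split("/")[-1]

    def _json_body(self):
        length = int(self.headers.get("Content-Length") or 0)
        return json.loads(self.rfile.read(length) or b"{}")

    def do_POST(self):
        item = self._json_body()
        if "id" not in item:
            return self._reply(400, {"error": "id is required"})
        ITEMS[str(item["id"])] = item
        self._reply(201, item)

    def do_GET(self):
        item = ITEMS.get(self._item_id())
        if item is None:
            return self._reply(404, {"error": "not found"})
        self._reply(200, item)

    def do_PUT(self):
        item_id, item = self._item_id(), self._json_body()
        if item_id not in ITEMS:
            return self._reply(404, {"error": "not found"})
        ITEMS[item_id] = item
        self._reply(200, item)

    def do_DELETE(self):
        if ITEMS.pop(self._item_id(), None) is None:
            return self._reply(404, {"error": "not found"})
        self._reply(200, {"deleted": True})

    def log_message(self, *args):
        pass  # keep test output quiet


def call(base_url, method, path, payload=None):
    """Send one request and return (status code, decoded JSON body)."""
    data = None if payload is None else json.dumps(payload).encode()
    request = urllib.request.Request(base_url + path, data=data, method=method)
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(request, timeout=5) as response:
            return response.status, json.loads(response.read())
    except urllib.error.HTTPError as error:
        return error.code, json.loads(error.read())


def run_service_checks():
    """Return the status code observed for each positive and negative call."""
    ITEMS.clear()
    server = HTTPServer(("127.0.0.1", 0), ItemHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base_url = f"http://127.0.0.1:{server.server_port}"
    try:
        return {
            "post": call(base_url, "POST", "/items", {"id": 1, "name": "a"})[0],
            "get": call(base_url, "GET", "/items/1")[0],
            "put": call(base_url, "PUT", "/items/1", {"id": 1, "name": "b"})[0],
            "delete": call(base_url, "DELETE", "/items/1")[0],
            "post_invalid": call(base_url, "POST", "/items", {"name": "x"})[0],
            "get_missing": call(base_url, "GET", "/items/999")[0],
            "put_missing": call(base_url, "PUT", "/items/999", {"id": 999})[0],
            "delete_missing": call(base_url, "DELETE", "/items/999")[0],
        }
    finally:
        server.shutdown()
        server.server_close()
```

A test then only has to compare the returned status codes with the expected ones, for example 201 for a successful POST and 404 for a GET on a missing item.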

User interface tests (UI tests)

UI tests verify that the system responds correctly to end-user actions. Examples include tests that check the system's response to filling in text fields or pressing buttons.

As a rule, any functionality that can be tested with a unit, component or service test should be tested with that type of test. User interface tests should focus solely on finding errors in the user's interaction with the graphical interface.

Visual tests

Visual tests verify how interface elements are displayed on the screen. They are similar to UI tests, but focus on the appearance of the interface rather than on its functionality. Examples include checking that a button image is displayed correctly or that the product logo appears on the screen.

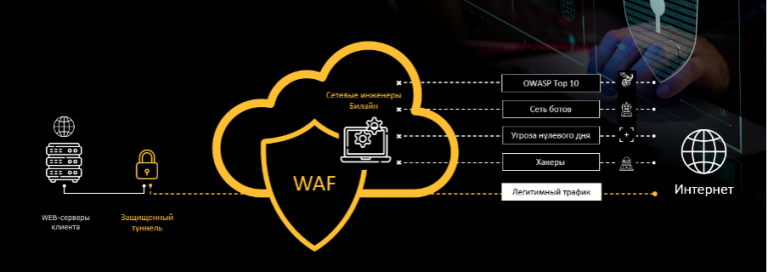

Security tests

These tests verify that security and data protection measures are in place. Mechanically they are similar to service tests, but they should still be considered separately. For example, a security test can verify that no authorization token is created for an invalid combination of login and password. Another example is sending a GET request with the authorization token of a user who does not have access to the requested resource and checking that a response with code 403 is returned.

Note: security tests resemble service tests only in how the services are called, namely through HTTP requests. Their sole purpose is to verify that the system and user data are protected from the actions of an attacker.
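Both security checks mentioned above can be sketched against a small in-process stand-in for an authorization service. Everything here is hypothetical (the users, the resource paths, the functions `issue_token` and `get_resource`); in a real project the same assertions would be made over HTTP against the actual service.

```python
import secrets

USERS = {"alice": "correct-password"}         # hypothetical credentials
PERMISSIONS = {"alice": {"/accounts/alice"}}  # resources each user may read
TOKENS = {}                                   # issued token -> user


def issue_token(login, password):
    """Return a new token for valid credentials, otherwise None."""
    if login not in USERS or USERS[login] != password:
        return None
    token = secrets.token_hex(16)
    TOKENS[token] = login
    return token


def get_resource(path, token):
    """Return an HTTP-like status code for a GET with an authorization token."""
    user = TOKENS.get(token)
    if user is None:
        return 401  # unknown or missing token
    if path not in PERMISSIONS.get(user, set()):
        return 403  # authenticated, but not allowed to see this resource
    return 200


def test_no_token_for_invalid_credentials():
    assert issue_token("alice", "wrong-password") is None
    assert issue_token("mallory", "correct-password") is None


def test_foreign_resource_returns_403():
    token = issue_token("alice", "correct-password")
    assert get_resource("/accounts/alice", token) == 200
    assert get_resource("/accounts/bob", token) == 403
```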

Performance tests

Performance tests verify that a response to a request arrives within an acceptable time. For example, if the system requires that a GET request never take longer than two seconds, the autotest fails when this limit is exceeded. Performance tests can also measure the load time of a web interface.
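A time-limit check like the two-second example can be sketched as follows. The function `fake_get_request` is a hypothetical stand-in that merely sleeps; in practice it would be replaced by a call to the real endpoint, and a single measurement would usually be repeated several times to smooth out noise.

```python
import time


def measure_seconds(operation):
    """Return how long a zero-argument callable takes, in seconds."""
    start = time.perf_counter()
    operation()
    return time.perf_counter() - start


def assert_within_limit(operation, limit_seconds=2.0):
    """Fail with AssertionError if the operation exceeds the time limit."""
    elapsed = measure_seconds(operation)
    if elapsed > limit_seconds:
        raise AssertionError(
            f"took {elapsed:.3f}s, limit is {limit_seconds:.3f}s"
        )
    return elapsed


def fake_get_request():
    # Assumption: a short sleep stands in for a real GET request.
    time.sleep(0.01)
```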

Accessibility tests

Accessibility tests cover a variety of checks. Combined with user interface tests, they can, for example, check that an image has a text description for visually impaired users. Visual tests can also serve accessibility, for example by checking the size of the text on the screen.
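The image description check can be approximated by scanning a page's HTML for `img` tags without a non-empty `alt` attribute. This is a simplified sketch using the standard `html.parser` module; it assumes every image needs a description, whereas real pages may legitimately leave `alt` empty for purely decorative images. The names `MissingAltFinder` and `images_without_alt` are illustrative.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> that lacks a non-empty alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        if not (attributes.get("alt") or "").strip():
            self.missing.append(attributes.get("src"))


def images_without_alt(html):
    """Return the src values of images that have no text description."""
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

An accessibility test would then assert that `images_without_alt(page_source)` returns an empty list for each page under test.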

How to choose verification methods

You may have noticed that the above test descriptions often overlap. For example, security tests can be performed during API testing (service tests), and visual tests can be performed during user interface testing. It is important here that every area be checked carefully, efficiently and accurately.

After publishing the article “Rethinking the Pyramid: The Automation Test Wheel”, the author released a list of factors that help determine the proportion of each test type needed in the automation wheel:

- How many third-party services does your application depend on? If there are many such services, more component tests are needed.

- How complicated is your user interface? If it contains just one or two pages, you will need fewer user interface tests and visual tests.

- How complex is your data structure? If you are dealing with large data objects, you will need more API tests to verify the correct processing of CRUD operations (Create, Read, Update, Delete).

- How secure should your application be? An application that processes banking transactions will need many more security tests than an application that stores photos of kittens.

- How performant does your application need to be? A solitaire game does not have to be as reliable as a cardiac monitor. The share of performance tests depends on this criterion.

The advantage of the Automation Wheel [compared with the test pyramid] is that it can be adapted to any type of application. By examining each spoke in the wheel, we can be confident that we are building reliable automated test coverage. And conversely: just as you cannot rely on a bicycle whose wheel is missing spokes, you cannot rely on automation in CI/CD unless you are sure that all aspects of the system are covered by autotests.

From the practice of SimbirSoft

We believe the automation wheel is a useful way to visualize test types. Of course, we have been using the tests described here for a long time, but the picture helps to analyze the coverage of a system and keep everything in view.

A well-tuned testing process with regularly run autotests minimizes the risk of defects in production. In addition, autotests help to quickly identify problems that arise while the system is in operation. For example, a daily scheduled autotest run lets you monitor your site and helps protect it from hacking.

Which checks to use, and how many, depends on the requirements and on the system under test. In one of our projects, a mobile banking application, we prepared autotests of all the types listed above at the client's request. Examples follow.

Unit tests

We made sure that every function in the application code was covered by tests that check all possible paths. Mocks were used extensively to replace other parts of the system. For example, when checking the function that converts rubles to a foreign currency, we passed in supported ruble amounts and verified that the output was the expected currency amount. The results were compared with reference values. We also tested how the function responds to invalid data.
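A unit test of this kind might be sketched as follows, with `unittest.mock.Mock` replacing the part of the system that supplies exchange rates. The function `convert_rubles`, the `get_rate` method and the rate of 80 rubles per unit are all made up for illustration and are not taken from the project.

```python
from decimal import Decimal
from unittest.mock import Mock


def convert_rubles(amount, currency, rate_provider):
    """Convert a ruble amount using a rubles-per-unit rate.

    Hypothetical function under test; `rate_provider` is any object
    with a get_rate(currency) method returning a Decimal.
    """
    if isinstance(amount, bool) or not isinstance(amount, (int, Decimal)):
        raise TypeError("amount must be an int or Decimal")
    if amount < 0:
        raise ValueError("amount must not be negative")
    rate = rate_provider.get_rate(currency)
    return (Decimal(amount) / rate).quantize(Decimal("0.01"))


def make_rate_provider():
    # The mock replaces the real rate service; 80 is a made-up rate.
    provider = Mock()
    provider.get_rate.return_value = Decimal("80")
    return provider


def check_reference_values():
    provider = make_rate_provider()
    reference = {8000: "100.00", 100: "1.25", 0: "0.00"}
    for rubles, expected in reference.items():
        assert convert_rubles(rubles, "USD", provider) == Decimal(expected)
    provider.get_rate.assert_called_with("USD")


def check_invalid_data():
    provider = make_rate_provider()
    for bad_amount, error in (("100", TypeError), (-1, ValueError)):
        try:
            convert_rubles(bad_amount, "USD", provider)
        except error:
            continue
        raise AssertionError(f"{bad_amount!r} should raise {error.__name__}")
```

Because the rate comes from a mock, the reference values stay stable and the test does not depend on any external service.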

Component tests

Here we checked the components of the mobile bank. Since the system has a microservice architecture, we had to check the availability of every microservice as well as, for example, the availability of the database. For the service responsible for the client's settlement accounts, we ran a database query to fetch a settlement account and verified that the account could be retrieved.

Service tests

We checked the API through which the database queries from the previous example are sent. We sent a GET request for a current account number and checked the response. We also always sent an incorrect request, for example with a non-existent client id, and verified that the response was as expected.

UI Tests

For example, we checked that the system reacts correctly to filling in the account fields and to pressing the “Open Deposit” button.

Visual tests

We tested devices with different versions of iOS and Android and different screen sizes, and checked the placement of the application's elements. This check was needed to make sure that, with any combination of these parameters, the buttons do not overlap the logo or hide behind it and become impossible to tap.
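The overlap part of such a check reduces to rectangle intersection. The sketch below assumes a hypothetical layout rule (a logo centered near the top, a button pinned to the bottom) and a handful of screen sizes; in a real visual test the rectangles would come from the element bounds reported by the device or emulator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    left: int
    top: int
    width: int
    height: int

    def overlaps(self, other):
        """Return True if the two rectangles share any area."""
        return (
            self.left < other.left + other.width
            and other.left < self.left + self.width
            and self.top < other.top + other.height
            and other.top < self.top + self.height
        )


def layout(screen_width, screen_height):
    """Hypothetical layout rule: logo centered at top, button at bottom."""
    logo = Rect((screen_width - 120) // 2, 40, 120, 120)
    button = Rect(16, screen_height - 72, screen_width - 32, 56)
    return logo, button


def find_overlaps(screen_sizes):
    """Return the screen sizes on which the button overlaps the logo."""
    problems = []
    for size in screen_sizes:
        logo, button = layout(*size)
        if button.overlaps(logo):
            problems.append(size)
    return problems
```

Running `find_overlaps` over every supported screen size and asserting that the result is empty gives a quick regression check before any screenshot comparison.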

Security tests

A mobile bank is a financial system, so its security had to be ensured at all levels. Among other things, we checked at the user interface level that authentication succeeds only with correct credentials, and at the service level that information is returned only in response to a request with a valid token.

Performance tests

One of the tests compared the server's response time to an application request against the required benchmark. We also checked how the application behaves with the maximum expected number of concurrent users in the system.

Accessibility tests

We checked voice search and the display of layout elements with the mode for visually impaired users enabled.

We will tell you more about how to organize test automation from scratch in one of the following articles.

Thank you for your attention! We hope you find the translation useful.