How we implemented running Cucumber scripts in parallel on different hosts

Some time ago, as the number of our autotests kept growing, we needed to run them in parallel to shorten the duration of test runs.

Our test framework is based on Cucumber (Java). The main focus is automation of GUI testing of Swing applications (we use AssertJ Swing), as well as interaction with the database (SQL), REST API (RestAssured) and web UI (Selenide).

The situation was complicated by the fact that we could not enable parallelization by standard means (for example, by configuring threads in the maven-surefire-plugin), because the applications under test do not allow more than one instance to run on a single physical host.

In addition, it was necessary to take into account that some of the scenarios use shared external resources that do not support parallel requests.

In this article, I want to share our solution and the problems encountered during the development process.

Implementation overview

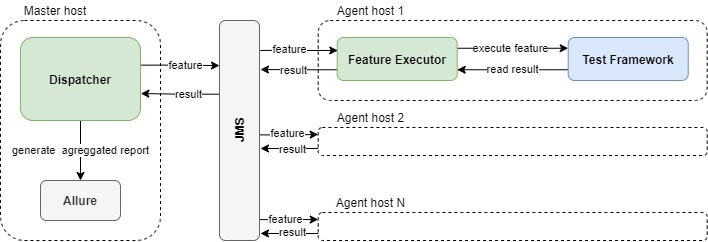

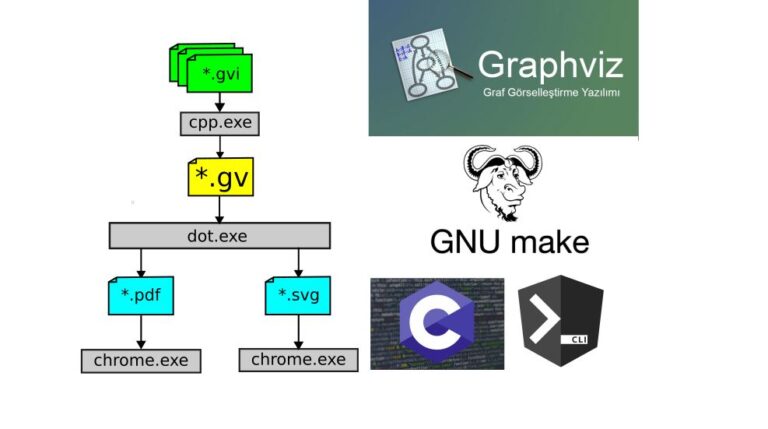

For our task, we decided to use the master-worker pattern with communication via a message queue.

Components of the scheme:

Master host, on which the Dispatcher module is launched. Dispatcher controls the creation of the queue of scripts for parallel execution and the subsequent aggregation of the results.

JMS server (for ease of deployment, we start an ActiveMQ Artemis broker embedded in Dispatcher).

Horizontally scalable agent hosts, which run FeatureExecutor, the component that directly executes scripts from the queue. The test framework must be installed on every agent beforehand.

The master host can also act as one of the agents at the same time (for this, we run both Dispatcher and FeatureExecutor on it).

At startup, Dispatcher receives a directory with features as input, reads the list of files, and sends them to the JMS script queue.

FeatureExecutor reads a script from the queue and sends the test framework a command to execute it.
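The Dispatcher side of this step can be sketched roughly as follows. This is only an illustration, not the code from our project: the class name FeatureQueueFiller is hypothetical, and a plain BlockingQueue stands in for the JMS script queue so that the example runs without a broker.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/** Hypothetical helper: collects feature files and puts their paths on a queue. */
final class FeatureQueueFiller {

    /** Returns all *.feature files under the directory, sorted for a stable order. */
    static List<Path> listFeatures(Path featuresDir) throws IOException {
        try (Stream<Path> paths = Files.walk(featuresDir)) {
            return paths
                    .filter(Files::isRegularFile)
                    .filter(p -> p.getFileName().toString().endsWith(".feature"))
                    .sorted()
                    .collect(Collectors.toList());
        }
    }

    /** Puts every feature path on the queue and returns how many were sent. */
    static int enqueueAll(Path featuresDir, BlockingQueue<String> scriptQueue)
            throws IOException, InterruptedException {
        List<Path> features = listFeatures(featuresDir);
        for (Path feature : features) {
            // Assumption: in a real setup this would be a JMS send; the
            // in-memory queue is only a stand-in for illustration.
            scriptQueue.put(feature.toString());
        }
        return features.size();
    }
}
```

The returned count is the number of sent scripts, which the dispatcher can later compare with the number of results received.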

How can I get a general report of the run results from all hosts?

As we know, Allure puts the result files for each scenario in the resultsDir directory (by default, this is build/allure-results).

Since we are already using JMS to create a script queue, we considered it a logical decision to add another queue to transfer the results.

After each script, FeatureExecutor archives the contents of resultsDir and sends the archive to this queue as a BytesMessage. Dispatcher reads it from the queue and unpacks it into resultsDir on the master host.

Thus, by the end of the run, all results are accumulated on the master host, where we collect a general report.
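The archive and unpack steps could look roughly like the sketch below. It is an assumption-laden illustration rather than our actual code: the class name ResultsArchiver is hypothetical, the results directory is assumed to be flat (regular files only), and the JMS transport is left out, since the byte array returned by zip is what would go into the BytesMessage.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;
import java.util.zip.ZipEntry;
import java.util.zip.ZipInputStream;
import java.util.zip.ZipOutputStream;

/** Hypothetical helper for moving a results directory around as a byte array. */
final class ResultsArchiver {

    /** Zips all regular files directly inside resultsDir into a byte array. */
    static byte[] zip(Path resultsDir) throws IOException {
        List<Path> files;
        try (Stream<Path> paths = Files.list(resultsDir)) {
            files = paths.filter(Files::isRegularFile).sorted().collect(Collectors.toList());
        }
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (ZipOutputStream zipOut = new ZipOutputStream(buffer)) {
            for (Path file : files) {
                zipOut.putNextEntry(new ZipEntry(file.getFileName().toString()));
                Files.copy(file, zipOut);
                zipOut.closeEntry();
            }
        }
        return buffer.toByteArray();
    }

    /** Unpacks the archive into targetDir and returns the number of files written. */
    static int unzip(byte[] archive, Path targetDir) throws IOException {
        Files.createDirectories(targetDir);
        Path root = targetDir.toAbsolutePath().normalize();
        int count = 0;
        try (ZipInputStream zipIn = new ZipInputStream(new ByteArrayInputStream(archive))) {
            ZipEntry entry;
            while ((entry = zipIn.getNextEntry()) != null) {
                Path target = root.resolve(entry.getName()).normalize();
                // Refuse entries that would escape the target directory.
                if (!target.startsWith(root)) {
                    throw new IOException("Unsafe entry name: " + entry.getName());
                }
                if (entry.isDirectory()) {
                    continue;
                }
                Files.createDirectories(target.getParent());
                Files.copy(zipIn, target, StandardCopyOption.REPLACE_EXISTING);
                count++;
            }
        }
        return count;
    }
}
```

Allure result file names include generated UUIDs, so unpacking archives from several agents into one directory should not normally cause name clashes.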

What if one of the agents goes down?

The dispatcher exits once it has processed all the results (we compare the number of scripts sent with the number of results received). If an agent takes a script from the queue but never sends a result, the run can hang, because the dispatcher will wait for that result forever.

To solve this problem, we added a HealthChecker service to Dispatcher. In a background thread, on a schedule, it calls the isAlive() method over RMI on each registered agent (the list of agents is loaded from the configuration at startup).

The implementation of the method on the agent is primitive:

    @Override
    public boolean isAlive() {
        return true;
    }

If communication with at least one agent is lost, the run ends.

We implemented this condition on the principle of minimal investment in the first version of the mechanism.

We plan to improve this by tracking which script was "lost" and re-sending it to the queue so that the remaining agents can continue the run. That said, in a year of regular runs we have not yet hit this case.
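The scheduled check itself can be sketched along these lines. This is a simplified illustration, not our production code: the AgentProbe interface is a hypothetical stand-in for the RMI remote interface, and the onAgentLost callback stands for whatever ends the run.

```java
import java.util.List;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

/** Stand-in for the agent's remote interface (called over RMI in the article). */
interface AgentProbe {
    boolean isAlive() throws Exception;
}

/** Hypothetical health checker: polls every agent and reports the first failure. */
final class HealthChecker implements AutoCloseable {
    private final ScheduledExecutorService scheduler =
            Executors.newSingleThreadScheduledExecutor(r -> {
                Thread t = new Thread(r, "health-checker");
                t.setDaemon(true); // the checker must not keep the JVM alive
                return t;
            });
    private final List<AgentProbe> agents;
    private final Runnable onAgentLost;

    HealthChecker(List<AgentProbe> agents, Runnable onAgentLost) {
        this.agents = agents;
        this.onAgentLost = onAgentLost;
    }

    /** Returns true only if every agent answered isAlive() with true. */
    boolean allAlive() {
        for (AgentProbe agent : agents) {
            try {
                if (!agent.isAlive()) {
                    return false;
                }
            } catch (Exception e) {
                // A failed remote call is treated the same as a dead agent.
                return false;
            }
        }
        return true;
    }

    /** Starts periodic checks; the callback fires once, on the first failed check. */
    void start(long period, TimeUnit unit) {
        scheduler.scheduleAtFixedRate(() -> {
            if (!allAlive()) {
                onAgentLost.run();
                scheduler.shutdown(); // stop further checks after the first failure
            }
        }, period, period, unit);
    }

    @Override
    public void close() {
        scheduler.shutdownNow();
    }
}
```

With real RMI, a dead agent typically shows up as an exception from the remote call rather than as a false return value, which is why both cases are handled the same way here.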

Alternative options:

Use a REST API in the same way as RMI.

Create a separate JMS queue for heartbeat messages sent by the agents, and have Dispatcher check that it receives at least one heartbeat from each agent every 5, 10, or 30 minutes (configure to taste).
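We did not implement the heartbeat variant, so the following is only a sketch of how the Dispatcher-side bookkeeping might look. The class HeartbeatTracker and its methods are hypothetical, and the JMS listener that would call onHeartbeat is left out.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.stream.Collectors;

/** Hypothetical tracker: remembers when each agent was last heard from. */
final class HeartbeatTracker {
    private final Map<String, Instant> lastSeen = new ConcurrentHashMap<>();
    private final Duration timeout;

    HeartbeatTracker(List<String> agents, Duration timeout, Instant startedAt) {
        this.timeout = timeout;
        // Every agent gets a full timeout window from the start of the run.
        for (String agent : agents) {
            lastSeen.put(agent, startedAt);
        }
    }

    /** Called for every heartbeat message received from the queue. */
    void onHeartbeat(String agent, Instant receivedAt) {
        lastSeen.put(agent, receivedAt);
    }

    /** Returns the agents whose last heartbeat is older than the timeout. */
    List<String> silentAgents(Instant now) {
        return lastSeen.entrySet().stream()
                .filter(e -> Duration.between(e.getValue(), now).compareTo(timeout) > 0)
                .map(Map.Entry::getKey)
                .sorted()
                .collect(Collectors.toList());
    }
}
```

Passing the current time in as a parameter keeps the tracker easy to test without waiting for real minutes to pass.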

What about tests that cannot run in parallel?

For example, tests that use shared resources.

Our solution is a special tag in the feature file, stylized as a Cucumber tag: @NoParallel. Why "stylized"? Because we read this tag by means of java.nio.file, and not through Cucumber. But it fits nicely into the script file:

    @NoParallel("PrimaryLicense")
    @TestCaseId("876123")
    Scenario: 001 Use of shared resource

If two scripts share a common resource (named in the @NoParallel tag), they must not run in parallel.
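Reading the tag directly from the file could be done roughly as in the sketch below. The class name NoParallelTagReader is hypothetical, and the sketch assumes that the tag sits on a line of its own and uses straight double quotes, as in the example above.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical reader that extracts resource names from @NoParallel tags. */
final class NoParallelTagReader {
    // Matches a whole line of the form @NoParallel("Name"), ignoring
    // surrounding whitespace. Assumption: one tag per line.
    private static final Pattern TAG =
            Pattern.compile("\\s*@NoParallel\\(\"([^\"]+)\"\\)\\s*");

    /** Returns the resource names found in the given lines, in file order. */
    static Set<String> parse(List<String> lines) {
        Set<String> resources = new LinkedHashSet<>();
        for (String line : lines) {
            Matcher matcher = TAG.matcher(line);
            if (matcher.matches()) {
                resources.add(matcher.group(1));
            }
        }
        return resources;
    }

    /** Reads a feature file with java.nio.file and parses its tags. */
    static Set<String> read(Path featureFile) throws IOException {
        return parse(Files.readAllLines(featureFile, StandardCharsets.UTF_8));
    }
}
```

An empty result means the script has no @NoParallel tag and can run without registering a resource.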

For resource management, we implemented a ResourceManager on the Dispatcher side with two methods in its API:

    public boolean registerResource(String resource, String agent);
    public void unregisterResource(String resource, String agent);

When FeatureExecutor takes a script with the @NoParallel tag from the queue, it calls the registerResource() method.

If the method returns true, the resource was registered successfully and we continue with the script execution.

If we get false, the resource is busy. The script is returned to the queue.

After the script is executed, the resource is released via unregisterResource().

Interaction with ResourceManager is implemented through RMI.

Alternatively, you can use the REST API.
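One possible in-memory implementation of these two methods is sketched below. It is an assumption about how such a manager might be written, not our actual code: the class name InMemoryResourceManager is hypothetical and the RMI plumbing is omitted.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

/** Hypothetical resource manager: maps each busy resource to its owning agent. */
final class InMemoryResourceManager {
    private final ConcurrentMap<String, String> owners = new ConcurrentHashMap<>();

    /** Returns true if the resource was free and is now held by the agent. */
    public boolean registerResource(String resource, String agent) {
        // putIfAbsent is atomic, so only one agent can claim a free resource
        // even when several agents call this method at the same moment.
        return owners.putIfAbsent(resource, agent) == null;
    }

    /** Releases the resource, but only if the given agent is its current owner. */
    public void unregisterResource(String resource, String agent) {
        owners.remove(resource, agent);
    }
}
```

In this sketch a second registration of the same resource returns false even when it comes from the agent that already holds it, and a release request from a non-owner is silently ignored. Both are design choices of the example.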

Summary

This solution required zero changes to the test framework.

As a result, we have full backward compatibility. Autotests can be run in both the old and the new way without changing the code.

There is no need to worry about the thread safety of the tests. They are isolated from each other, so it makes no difference to them whether they run in parallel or not.

Agents scale horizontally, so it is easy to speed up test execution, up to a certain limit.

By the time we introduced parallel launch, our autotests were completely atomic. Otherwise, it would be necessary to take into account the dependencies between the scenarios, or change their design.
