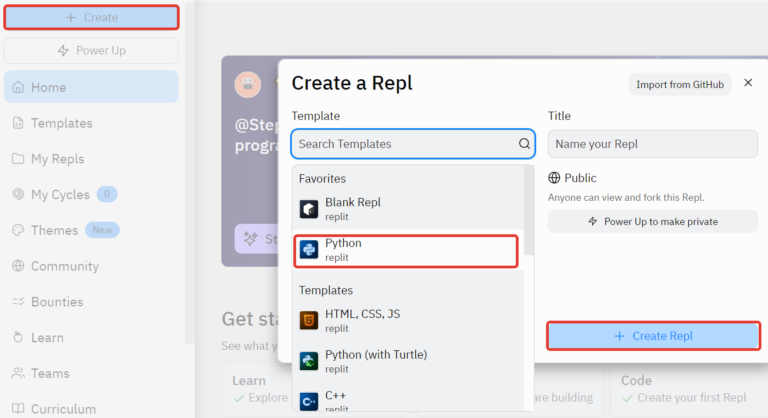

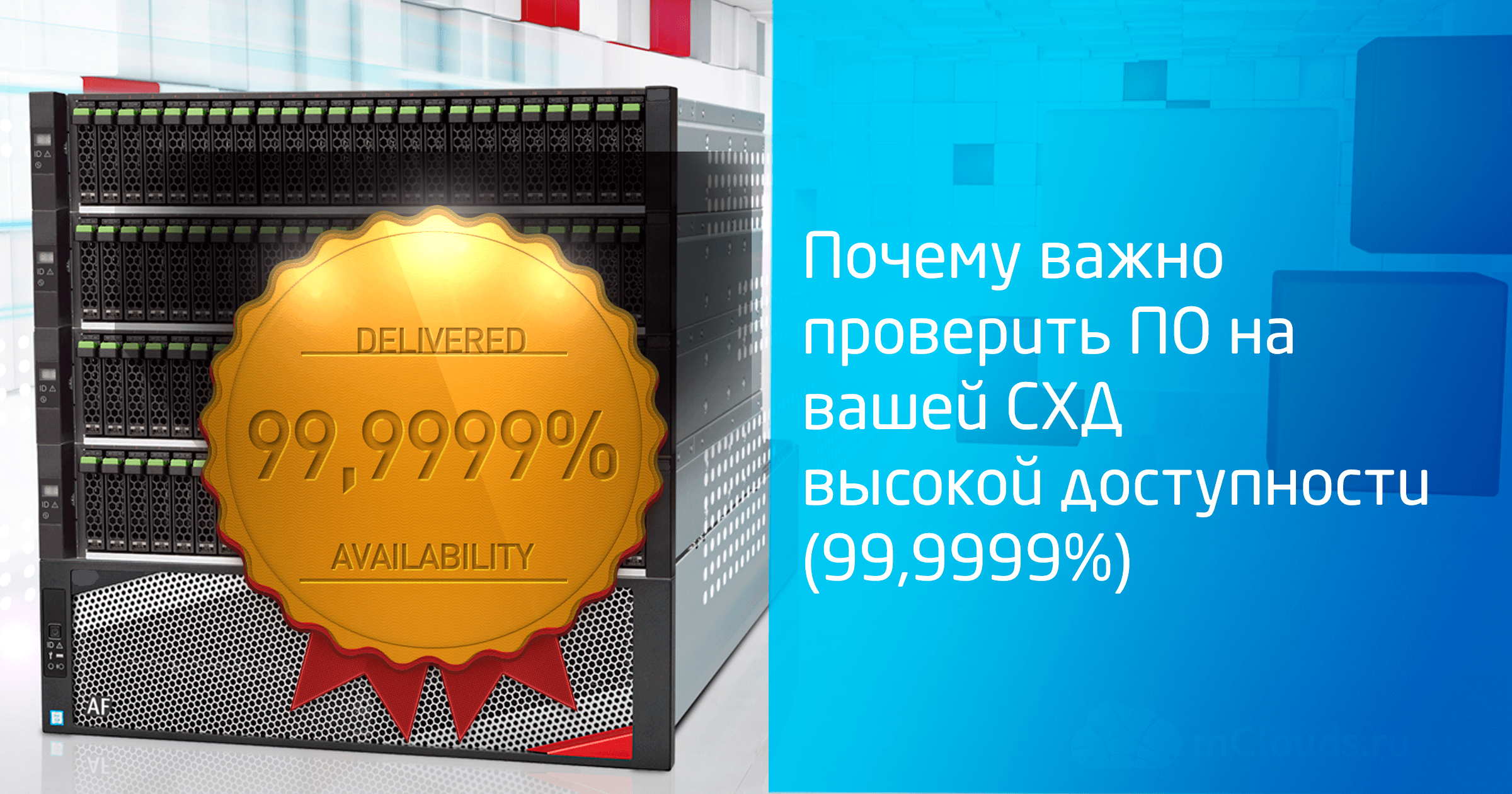

Why it is important to check the software on your highly available (99.9999%) storage system

Which firmware version is the most “correct” and “working”? If a storage system guarantees 99.9999% fault tolerance, does that mean it will run smoothly even without software updates? Or, conversely, should you always install the latest firmware to get maximum fault tolerance? We will try to answer these questions based on our own experience.

A short introduction

We all understand that every version of software, whether an operating system or a device driver, often contains flaws, bugs, and other “features” that may not show up until the hardware reaches the end of its service life, or that surface only under certain conditions. The number and severity of such issues depend on the complexity (functionality) of the software and on the quality of testing during its development.

Users often stay on the factory firmware (the famous “if it works, don't touch it”) or always install the latest version (assuming that the latest means the most stable). We take a different approach: we read the release notes for all the equipment used in the mClouds cloud and carefully select the appropriate firmware for each piece of equipment.

We came to this approach, as they say, through experience. Using an example from our own operations, we will explain why the promised 99.9999% storage reliability means nothing if you do not keep track of software updates and their release notes in a timely manner. Our case is relevant to users of storage systems from any vendor, since a similar situation can occur with hardware from any manufacturer.

Choosing a new storage system

At the end of last year, an interesting storage system joined our infrastructure: the entry-level model of the IBM FlashSystem 5000 line, which at the time of purchase was called Storwize V5010e. It is now sold as FlashSystem 5010, but it is in fact the same hardware platform running the same Spectrum Virtualize software.

Incidentally, a unified management system is the main distinguishing feature of IBM FlashSystem. On the entry-level models it is practically the same as on the higher-performance ones. Choosing a particular model only determines the hardware platform, whose characteristics make certain functionality available or provide a higher level of scalability. The software identifies the hardware and provides the functionality that is necessary and sufficient for that platform.

Briefly about our 5010 model. It is an entry-level dual-controller block storage system. It can hold NLSAS, SAS, and SSD drives. NVMe drives are not supported, since this model is positioned for tasks that do not require NVMe performance.

The storage system was purchased to hold archival information and data that is not accessed frequently. The standard feature set, Easy Tier and thin provisioning, was therefore enough for us. Performance of 1000-2000 IOPS on NLSAS disks also suited us.

Our experience: how we failed to update the firmware in time

Now for the software update itself. At the time of purchase, the system was already running a slightly outdated version of the Spectrum Virtualize software, namely 8.2.1.3.

We reviewed the firmware release notes and planned to upgrade to 8.2.1.9… Had we been a little quicker, this article would not exist: the bug would not have occurred on the more recent firmware. However, for some reason, the update of this system was postponed.

As a result, a short delay in updating led to an extremely unpleasant situation, the one described at this link: https://www.ibm.com/support/pages/node/6172341…

Yes, the so-called APAR (Authorized Program Analysis Report) HU02104 applied to exactly that firmware version. It manifests itself as follows. Under load, in certain circumstances, the cache begins to overflow, and the system then enters a protective mode in which it suspends I/O for the pool. In our case, this looked like three disks dropping out of a RAID group running RAID 6. The outage lasts 6 minutes, after which access to the Volumes in the Pool is restored.
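To put a six-minute I/O pause in perspective against the promised 99.9999%, here is a small arithmetic sketch. It assumes that availability is measured over a non-leap calendar year and that the whole pause counts as downtime; the function name is ours, not anything from IBM.

```python
# Downtime budget implied by an availability figure (illustrative arithmetic).
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumption: non-leap year


def downtime_budget_seconds(availability_percent: float) -> float:
    """Return the allowed downtime per year, in seconds."""
    return SECONDS_PER_YEAR * (1 - availability_percent / 100)


budget = downtime_budget_seconds(99.9999)
outage = 6 * 60  # the six-minute pause described above, in seconds

print(f"yearly downtime budget: {budget:.1f} s")
print(f"one outage consumes about {outage / budget:.1f} years of budget")
```

Under these assumptions the yearly budget is roughly half a minute, so a single occurrence of the bug uses up more than a decade of it.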

For anyone unfamiliar with the structure and naming of logical entities in IBM Spectrum Virtualize, here is a brief overview.

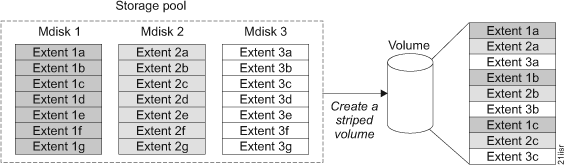

Disks are grouped into arrays called MDisks (Managed Disks). An MDisk can be either a classic RAID array (0, 1, 10, 5, 6) or a virtualized one, DRAID (Distributed RAID). DRAID can increase array performance, because all disks in the group are used, and it shortens rebuild time, because only certain blocks have to be recovered rather than all the data from the failed disk.

And this diagram shows the logic of the DRAID rebuild in the event of a single disk failure:

Next, one or more MDisks form a so-called Pool. Using MDisks with different RAID / DRAID levels on disks of the same type within one pool is not recommended. We will not go deeper here, because we plan to cover this in one of the next articles. Finally, the Pool is divided into Volumes, which are presented to the hosts over one block access protocol or another.

So, as a result of the situation described in APAR HU02104, three disks failed logically. RAID 6 tolerates the loss of only two disks, so the MDisk stopped working, which in turn caused the Pool and the corresponding Volumes to fail.
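The chain of failures can be sketched as a toy model. This is only an illustration of the MDisk, Pool, and Volume hierarchy described above, not Spectrum Virtualize code: the class names, the object names, and the rule that a pool needs all of its MDisks online are simplifying assumptions of ours.

```python
from dataclasses import dataclass, field
from typing import List

# Simultaneous disk failures that each classic RAID level survives.
FAILURES_TOLERATED = {"raid0": 0, "raid1": 1, "raid5": 1, "raid6": 2}


@dataclass
class MDisk:
    name: str
    raid_level: str
    offline_disks: int = 0

    def is_online(self) -> bool:
        return self.offline_disks <= FAILURES_TOLERATED[self.raid_level]


@dataclass
class Pool:
    name: str
    mdisks: List[MDisk]
    volumes: List[str] = field(default_factory=list)

    def is_online(self) -> bool:
        # Simplifying assumption: the pool needs every MDisk online.
        return all(mdisk.is_online() for mdisk in self.mdisks)

    def offline_volumes(self) -> List[str]:
        return [] if self.is_online() else list(self.volumes)


# Hypothetical names; three disks drop out of a RAID 6 array.
mdisk = MDisk("mdisk0", "raid6", offline_disks=3)
pool = Pool("archive_pool", [mdisk], volumes=["vol_a", "vol_b"])
print(pool.is_online(), pool.offline_volumes())
```

With two offline disks the same array would stay online; the third one is what takes the MDisk, and everything built on top of it, offline.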

Because these systems are quite smart, they can be connected to the cloud-based IBM Storage Insights monitoring service, which automatically sends a service request to IBM support when a problem occurs. A ticket is created, and IBM specialists run diagnostics remotely and contact the system's user.

Thanks to this, the issue was resolved quite quickly, and support promptly recommended updating our system to firmware 8.2.1.9, the version we had already selected, in which the bug had been fixed by then. This is confirmed by the corresponding Release Note…

Results and our recommendations

As the saying goes, all's well that ends well. The firmware bug did not lead to serious problems: the servers were restored quickly and without data loss. Some clients had to restart their virtual machines, but overall we were prepared for worse, since we make daily backups of all infrastructure elements and client machines.

We received confirmation that even reliable systems with a promised availability of 99.9999% require attention and timely maintenance. Based on this incident, we drew a number of conclusions for ourselves, and we share our recommendations:

It is imperative to monitor the release of updates, study the Release Notes for fixes to potentially critical issues, and apply the planned updates on time.

This is an organizational and fairly obvious point that might not seem worth dwelling on. Yet it is easy to stumble on this “level ground”. In fact, it was exactly this point that caused the troubles described above. Prepare the update schedule very carefully and monitor adherence to it just as carefully. This item is mostly a matter of discipline.

It is always best to keep the system up to date. Note that the most up-to-date version is not the one with the higher version number but the one with the later release date.

For example, IBM keeps at least two software releases current for its storage systems. At the time of writing, these are 8.2 and 8.3. Updates for 8.2 come out first. The equivalent update for 8.3 usually follows with a slight delay.

Release 8.3 has a number of functional advantages, for example the ability to expand an MDisk (in DRAID mode) by adding one or more new disks (available since version 8.3.1). This is fairly basic functionality, but unfortunately 8.2 does not have it.
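The difference between “highest version number” and “latest release date” can be shown with a minimal sketch. The release list below is made up purely for illustration: the dates are placeholders, not IBM's actual release dates, and the 8.3.0.1 entry is a hypothetical build.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Release:
    version: str
    released: date


def version_key(version: str) -> tuple:
    """Turn '8.2.1.9' into (8, 2, 1, 9) for numeric comparison."""
    return tuple(int(part) for part in version.split("."))


# Placeholder data: dates are invented to illustrate the point.
releases = [
    Release("8.2.1.9", date(2020, 2, 1)),
    Release("8.3.0.1", date(2019, 12, 1)),
]

highest_number = max(releases, key=lambda r: version_key(r.version))
most_recent = max(releases, key=lambda r: r.released)

print("highest version number:", highest_number.version)
print("latest release date:   ", most_recent.version)
```

With this sample data the two criteria pick different builds, which is why the release date, together with the release notes, is the safer thing to compare.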

If for some reason updating is not possible, then for Spectrum Virtualize versions earlier than 8.2.1.9 and 8.3.1.0 (where the bug described above applies), IBM technical support recommends reducing the risk by limiting system performance at the pool level, as shown in the figure below (the screenshot was taken in the Russian version of the GUI). The 10,000 IOPS value is shown as an example and should be adjusted to the specifications of your system.
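The version rule stated above can be written down as a small check. This is a sketch of our reading of that one sentence, covering only the 8.2 and 8.3 branches it mentions; it is not an IBM tool, and the authoritative list of affected builds is the APAR description itself.

```python
def parse_version(version: str) -> tuple:
    """Turn '8.2.1.3' into (8, 2, 1, 3)."""
    return tuple(int(part) for part in version.split("."))


# First fixed build per release branch, as stated in the text above.
FIXED_IN = {
    (8, 2): (8, 2, 1, 9),
    (8, 3): (8, 3, 1, 0),
}


def is_affected(version: str) -> bool:
    """Return True if the build predates the fix on its branch."""
    parsed = parse_version(version)
    fixed = FIXED_IN.get(parsed[:2])
    if fixed is None:
        # Other branches are outside what the article states.
        raise ValueError(f"no information for branch of {version}")
    return parsed < fixed


for build in ("8.2.1.3", "8.2.1.9", "8.3.1.0"):
    print(build, "affected" if is_affected(build) else "fixed")
```

Raising an error for unknown branches is deliberate: guessing about releases the text does not mention would be worse than saying nothing.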

It is essential to size the load on storage systems correctly and avoid overloading them. To do this, you can use the IBM sizer (if you have access to it), the help of partners, or third-party resources. It is also imperative to understand the load profile on the storage system, because performance in MB/s and IOPS varies greatly depending on at least the following parameters:

operation type: read or write,

operation block size,

the percentage of reads and writes in the total I/O stream.

The speed of operations also depends on how data blocks are accessed: sequentially or randomly. When an application performs multiple data access operations, there is also the notion of dependent operations, which is worth taking into account as well. Aggregated data from the performance counters of the OS, storage system, and servers / hypervisors can help you see all of this, as can an understanding of the specifics of the applications, DBMSs, and other “consumers” of disk resources.
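One reason the block size matters so much is that IOPS and throughput are tied together by it. The sketch below uses the plain relation throughput = IOPS × block size; the function name and the sample block sizes are our own illustrative choices, and real systems will not sustain the same IOPS at every block size.

```python
def throughput_mib_s(iops: float, block_kib: float) -> float:
    """Throughput in MiB/s for a given IOPS rate and block size in KiB."""
    return iops * block_kib / 1024


# Same IOPS figure, very different bandwidth depending on block size.
iops = 2000  # upper end of the NLSAS range mentioned earlier
for block_kib in (4, 64, 256):
    mib_s = throughput_mib_s(iops, block_kib)
    print(f"{iops} IOPS at {block_kib:>3} KiB blocks = {mib_s:.1f} MiB/s")
```

This is why a load profile stated only in IOPS, or only in MB/s, is not enough to size a system.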

And finally, be sure to have backups that are current and working. Set the backup schedule according to RPO values acceptable to the business, and periodically verify the integrity of the backups (quite a few backup software vendors have implemented automated verification in their products) to ensure an acceptable RTO.

Thank you for reading to the end.

We are ready to answer your questions in the comments. We also invite you to subscribe to our Telegram channel, where we run regular promotions (discounts on IaaS and giveaways of promo codes for up to 100% off VPS), post interesting news, and announce new articles on the Habr blog.