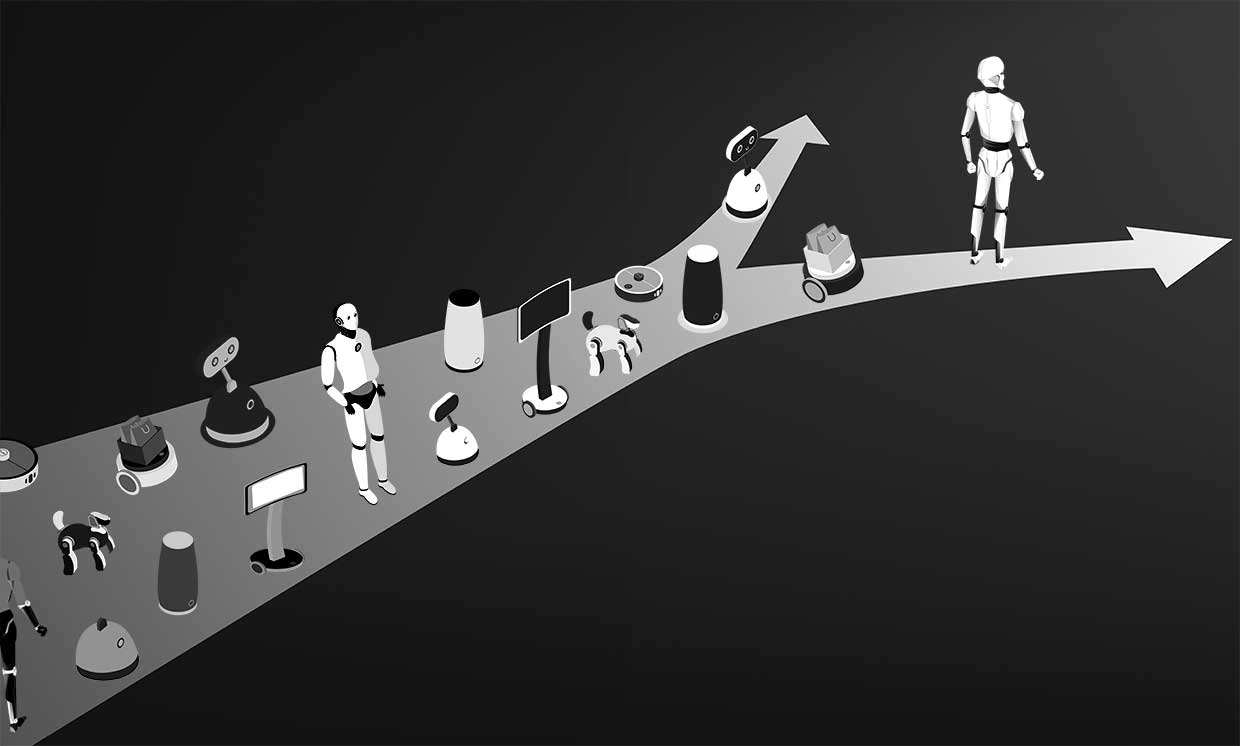

Who is responsible for robots in the human world?

Experts from ANYbotics, Boston Dynamics and Clearpath Robotics answer questions about the irresponsible and unethical use of their robots.

Illustration: iStockphoto / IEEE Spectrum

Over the past five years, the commercial production of autonomous robots that can operate outside of a structured environment has skyrocketed. But this relatively recent transition of robotic technologies from research projects to commercial products brings certain difficulties, many of which stem from the fact that more and more robots are appearing in society.

Robots tend to capture people's imagination, perhaps because of their apparent agency or their typical portrayal in popular culture. Sometimes this leads to positive results, such as innovative ways of using them. But it can also lead to unethical or irresponsible use. Is there anything that robot sellers can do in such cases? And even if they can, should they?

Roboticists believe that robots are primarily tools. We design them, we program them, and even autonomous robots just follow the instructions that we coded into them. However, the apparent agency of robots, which is what arouses such interest, means that people who have no experience interacting with real robots may not understand that a robot is itself neither good nor bad; it is only a reflection of its designers and users. …

This can put robotics companies in a quandary. The person who bought a robot from them can, hypothetically, use it however they please. Of course, this applies to any tool, but what makes robots unique is their autonomy. Autonomy can be said to imply a relationship between a robot and its manufacturer, or, in this case, the company that develops and sells it. Although this association is not entirely justified, it exists, even though in the end it is the robot's buyer who has complete control over all its actions.

Of course, robotics companies understand this: many of them carefully vet who they sell their products to and are very clear about the intended uses of their robots. But how far should this responsibility extend once a robot leaves the nest of the company that created it? And how is that even possible? Should robotics companies be held accountable for the actions of their robots in the human world, or should it be recognized that once a robot is sold, responsibility for it also passes to the new owner? And what can be done about cases of irresponsible or unethical use that could reflect badly on robot manufacturers?

To better understand this issue, we contacted people at three robotics companies, each of which has experience selling unique mobile robots to commercial customers. We asked them five questions about the responsibility that robotics companies have for the robots they sell, and this is what they answered.

Are there any restrictions on how people can use your robots? If so, what are they, and if not, why not?

Peter Fankhauser, CEO, ANYbotics:

We work closely with clients to make sure our product provides the right solution to their problem. This means we understand from the start why the robot is being purchased, and we do not cooperate with customers who want to use our ANYmal robot for other purposes. In particular, we categorically do not allow any military or armed use of our robots. Since the founding of ANYbotics, our aim has been to improve working conditions for people and to make them more comfortable, pleasant and safe.

Robert Playter, CEO, Boston Dynamics:

Yes, we have introduced restrictions on the use of our robots, which are set out in the terms of the sales contract. All our buyers, without exception, must confirm that Spot will not be used to harm or intimidate people or animals, will not be used as a weapon, and will not be configured to hold a weapon. Like any other product, Spot must be used legally.

Ryan Gariepy, CTO, Clearpath Robotics:

We have strict restrictions and customer due diligence processes based primarily on Canada's export control regulations. They depend on the type of equipment sold as well as where it will be used. In general, we also will not sell or support a robot if we know that it will pose an uncontrolled safety risk or if we have reason to believe that the purchaser is not qualified to use the product. And, as a rule, we do not support the use of our products for the development of fully autonomous weapons systems.

Why should a robot's buyer be limited in how they use it?

Peter Fankhauser, ANYbotics:

We see the robot not as an ordinary object, but rather as an artificial labor force. For us, this means that the transfer of the robot and its use are closely related, and the customer and the supplier need to agree on what tasks the robot will perform. This approach resonates with our customers, who are increasingly interested in the ability to pay for robots as a service or per use.

Robert Playter, Boston Dynamics:

We sell the product and will do everything in our power to prevent bad actors from using our technology to cause harm, but we cannot control every use case. However, we believe that our business will benefit most from technology used for peaceful purposes: working alongside people as reliable helpers and keeping them out of danger. We do not want our technology to be used to cause harm or promote violence. We apply the same kind of restrictions as other manufacturers and technology companies that take steps to reduce or eliminate the violent or illegal use of their products.

Ryan Gariepy, Clearpath Robotics:

Assuming the limiting entity is privately held and the robot and its software are sold rather than leased or managed, there is no compelling legal reason for limiting use. That said, the manufacturer is also not obligated to provide support for that particular robot or customer in the future. And given that we are still only on the verge of the social changes that robots will bring, it is in the interests of both the manufacturer and the user to communicate their goals honestly to each other. Now you are investing not only in the initial purchase and relationship with the manufacturer, you are investing in the promise of how you can help each other succeed in the future.

What can you realistically do to ensure that the purchased robots are used as intended?

Peter Fankhauser, ANYbotics:

We maintain close cooperation with clients to make sure our solution lets them accomplish all their tasks. For that reason, we have chosen not to use technical means of blocking misuse of our products.

Robert Playter, Boston Dynamics:

We carefully vet our customers and make sure that the intended use matches the capabilities of the Spot robot and does not contradict the terms of the sales contract. We refuse to sell to customers who plan to use robots for tasks for which they are not well suited. If our technology is abused or the rules of use are violated, then under the terms of the sales contract the warranty is voided, along with the ability to receive updates, maintenance, repair or replacement of the robot. We can also repossess robots that were leased rather than purchased. Finally, we will not sell robots again to customers who violate the terms of the sales contract.

Ryan Gariepy, Clearpath Robotics:

We usually work with customers prior to a sale to ensure that their expectations match reality, in particular on issues such as safety, control requirements and usability. It's better not to make a deal at all than to sell a robot that will gather dust on the shelf or, even worse, cause harm, so we prefer to reduce the risk of such a situation before accepting the order or shipping the robot to the buyer.

How do you evaluate borderline use cases, for example if someone wants to use your robot in art or research in a way that could push the boundaries of what you personally feel is responsible or ethical?

Peter Fankhauser, ANYbotics:

The main thing is to conduct a dialogue, try to understand each other and look for alternatives that will suit all interested parties, and the sooner this dialogue starts, the better.

Robert Playter, Boston Dynamics:

There is a clear line between the study of robots in science and art and the use of robots for violent or illegal purposes.

Ryan Gariepy, Clearpath Robotics:

We have already sold thousands of robots to hundreds of clients, and I don’t remember the last time we didn’t need to deal with export controls and conduct an overall assessment of the client’s goals and expectations. I am sure this will change as prices for robots continue to decline and their flexibility and usability rise.

What should roboticists do if they see a robot being used in an unethical or irresponsible way?

Peter Fankhauser, ANYbotics:

If the robot is used in a reckless manner from a safety point of view, intervene! If you are faced with unethical use, do not be silent!

Robert Playter, Boston Dynamics:

We want robots to benefit humanity, which means, among other things, not harming people. We think that the robotics industry will only become commercially viable in the long term if people see robots as useful tools without worrying about whether they might cause harm.

Ryan Gariepy, Clearpath Robotics:

If this is an isolated case, they should raise the issue with the user and with the supplier or suppliers, and, if an imminent safety threat arises, with the media and with regulatory or government agencies. If the situation risks recurring and is not being taken seriously, roboticists should bring it up for broader discussion at appropriate venues: at conferences, in industry groups, in standards bodies, etc.

Conclusion

As more and more robots with different capabilities appear on the market, these problems may arise more and more often. The three companies we spoke to certainly do not represent every point of view. But I suppose (hope?) that anyone involved in robot manufacturing would agree that robots should be used to improve people's lives. What "better" means in the context of art, research, and even the use of robots in the military is not always easy to define, however, and disagreements will inevitably arise as to what is ethical and responsible and what is not.