We use serverless to build analytics on data from AmoCRM in Yandex.Cloud

Today, almost every modern company collects, stores and uses data about its activities using the cloud technology. In this article, you can learn how you can pull data from AmoCRM, process it using functions, and analyze it using DataLens. This case was solved by a team of data analysts Valiotti Analytics together with the Yandex.Cloud team.

Introduction

Many modern digital startups and projects often use CRM systems to track the flow of transactions and monitor the activities of the sales department. We will tell you about the case of the English language school JustSchool… The company manages leads in AmoCRM, as it is a convenient and simple tool available to any sales manager. But AmoCRM itself lacks rich analytics functionality. Therefore, the task arises to take the entire amount of data from the CRM system, upload it to a third-party storage and analyze it. Using the AmoCRM API and the capabilities of the Yandex.Cloud service, we managed to build operational analytics using serverless functions, Managed Service for ClickHouse and the Yandex DataLens visualization tool.

Since the processing and analysis of information in the data storage system itself is extremely inconvenient, we were faced with the task of creating an algorithm for automatically uploading information about transactions and contacts in the required format for subsequent analysis. This was done thanks to the good API functionality that AmoCRM has. The main difficulty was in finding and configuring the process of unloading and processing a large amount of data. We will tell you about this in detail.

Cluster and Database Design

Step 1… Create a cluster in which all information from AmoCRM will be stored and processed

Step 2… Create a ClickHouse database. We did this using Managed Service for ClickHouse, and also created several tables required to store data from AmoCRM.

Step 3. Create an AmoCRM service account to flexibly manage Yandex.Cloud resources by assigning the necessary roles.

Step 4. Implement data upload using Cloud Function and Message Queue services. Data from AmoCRM is divided into:

Each subgroup is processed separately.

Solution architecture description

To build the architecture, we used the AmoCRM API, Yandex.Cloud and Yandex Datalens services. An example of service interaction is shown in the figure. We have built a completely serverless architecture for collecting, transforming and loading data.

Creating functions

We have created 7 functions for collecting and processing data. Since the system stores various data (about users, contacts, etc.), it was necessary to upload the data separately, using different logics and functions.

We also used special triggers to automatically run each function.

Scripts for collecting information

To download data from AmoCRM, we used the API. With its help, after sending a request, we receive data in json format. Then, from this format, we transform the data into a DataFrame and save it to the database. For example, to collect information about users, we created a function amousers…

with requests.Session() as s:

auth_url="https://{}.amocrm.ru/private/api/auth.php".format(subdomain)

get_url="https://{}.amocrm.ru/api/v2/account?with=users&free_users=Y".format(subdomain)

auth_data = {'USER_LOGIN': user_login, 'USER_HASH': user_hash}

s.post(auth_url, data=auth_data)

response = s.get(get_url)

users_dict = json.loads(response.text)['_embedded']['users']

df = DataFrame.from_dict(users_dict).transpose()

# Обработка полученных данных

client.execute("INSERT INTO justkids.amocrm_users VALUES", df.to_dict('records'))Data flow diagram

This diagram shows the data forwarding logic we used.

To organize the storage of intermediate data, we created queues in the Message Queue service.

Algorithm for sending data on the example of processing requests

1. Create a special timer that runs the firstpage function every day at 11:15 pm.

2. Function firstpage queues up amo_leads_pages digit 1 is the index of the first page from the original database of transactions. This means that data collection has started, and 1 is the index of the page from which to start downloading data from AmoCRM. Remember, in AmoCRM, all data is paginated with a maximum of 250 applications.

client = boto3.client(

service_name="sqs",

endpoint_url="https://message-queue.api.cloud.yandex.net",

region_name="ru-central1"

)

queue_pages_url="https://message-queue.api.cloud.yandex.net/путь_до_очереди"

client.send_message(

QueueUrl=queue_pages_url,

MessageBody='1'

)Once in line amo_leads_pages digit appears, trigger amo-leads-pages starts a function instance amoleadsqueue…

3. Function amoleadsqueue takes a number from the queue and starts unloading data from AmoCRM, starting from the page number that was written to the queue. The function reads 250 requests and writes the received json to the queue amo_leadsfrom which another function receives and processes data.

4. Cloud Functions has a runtime limit. As soon as 9 minutes have passed, the function ends its work and queues up the page it left off. When a new number enters the queue, the trigger starts a new instance of the function, which continues to work from the last processed page.

while True:

try:

with requests.Session() as s:

auth_url = "https://{}.amocrm.ru/private/api/auth.php".format(subdomain)

get_url=f'https://{subdomain}.amocrm.ru/api/v4/contacts?with=catalog_elements,leads,customers&page={page}&limit=100'

auth_data = {'USER_LOGIN': user_login, 'USER_HASH': user_hash}

s.post(auth_url, data=auth_data)

response = s.get(get_url)

# Если данные закончились, заносим в очередь флаг END

if response.status_code == 204:

print(page, 'No Content code 204')

client.send_message(

QueueUrl=queue_pages_url,

MessageBody='end'

)

break

contacts_list = json.loads(response.text)['_embedded']['contacts']

client.send_message(

QueueUrl=queue_url,

MessageBody=json.dumps(contacts_list)

)

page += 1

# В Yandex.Cloud есть ограничение по времени выполнения функции, поэтому останавливаем сбор данных через 9 минут

if time.time()-start_time > 500:

break

except Exception as e:

return {

'statusCode': 100,

'body': e

}

# Кладем в очередь номер, страницы на которой остановились

сlient.send_message(

QueueUrl=queue_pages_url,

MessageBody=str(page)

) 5. Function amoleadsqueue only collects data and sends it to the queue amo_leads… When values enter the queue, the trigger amo-leads-to-db runs the function amo_leads, which processes the received json data and writes it to ClickHouse.

Function work amoleads shown in the screenshot.

6. With the help of monitoring, you can observe the progress of the function, track how long it has been executed, and look at the time of occurrence of errors. This is a great tool for tracking code work and quickly solving problems.

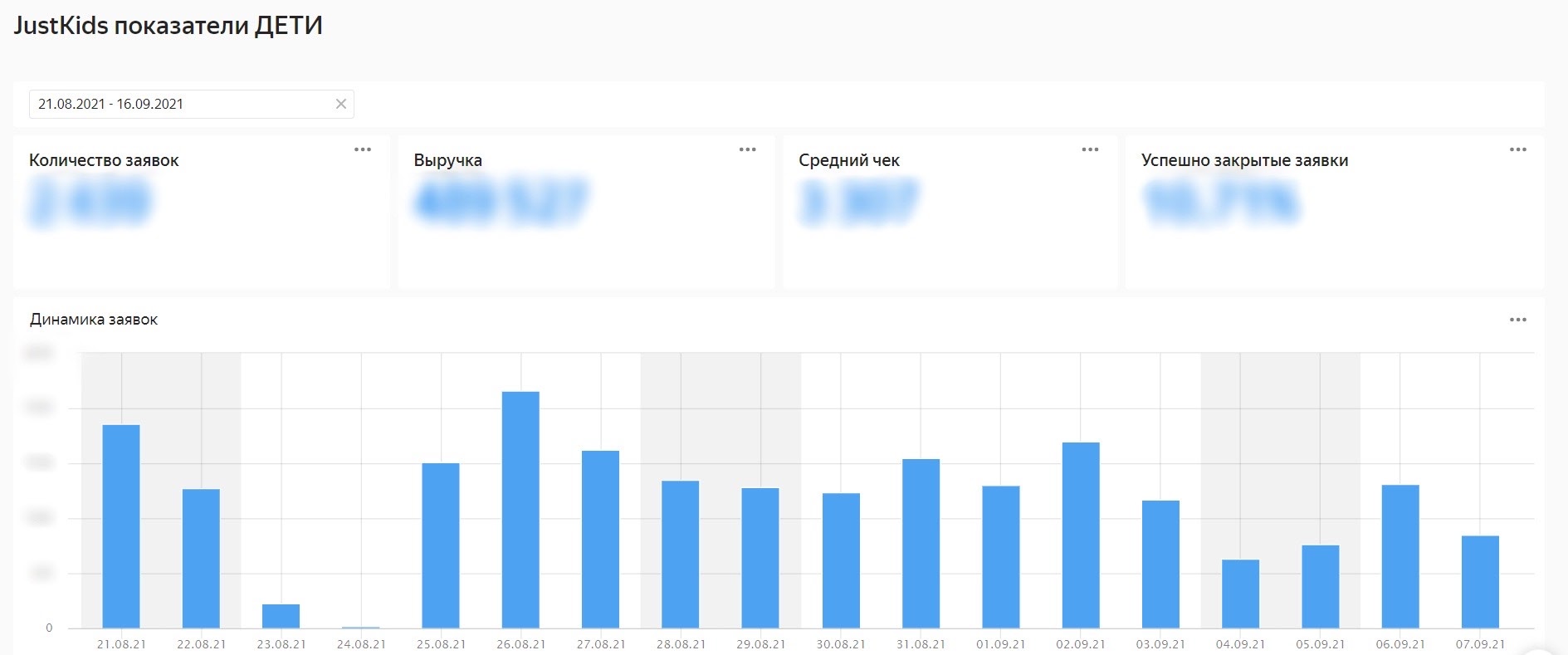

Creating a dashboard in DataLens

We created two datasets for collecting the final dashboard for the children’s school funnel. In the first dataset, which is created based on the table justkids.amocrm_leads_static, we created the necessary fields for calculations and converted the numbers to timestamps.

We created the second dataset based on a SQL query. This helped us calculate the conversion of the funnel stages.

Based on these datasets, we created Charts, assembled a Dashboard and added a filter by the date the request was created.

To filter datasets with a single filter, we created a special relationship between them.

Service benefits

Serverless architecture, which is used on Yandex.Cloud, simplifies working with data and speeds up the development and monitoring of functions. The use of such an architecture allows us to focus on solving the case, since Yandex.Cloud takes care of all possible problems associated with the operation of the server.

It’s easy to set up a Clickhouse cluster using a database management service: installation is done in a few clicks. Then you work with the database, and the service provides fault tolerance, backup, monitoring, updating and other tasks. Vertical and horizontal scaling is available “by button” in the control console.

The Yandex.Cloud data platform presents tools for analytics and data visualization – Yandex DataLens Yandex DataLens, the interaction between which is carried out very quickly within one system.

Challenges and limitations

The Cloud Functions service has a time limit for executing one instance of a function. We need to process more than 4 thousand data pages, and the function manages to process only 100-200 pages in 500 seconds of the allotted time. To solve this problem, Yandex.Cloud employees recommend using the Message Queue service, which works great.

The size of the message that enters the queue is limited, since there are about 150-250 requests on one AmoCRM page. When data enters the queue, the function immediately loads the existing data, processes it and writes it to the database. ClickHouse shows excellent performance for writing large amounts of data. We do not recommend writing data in small pieces (150-250 requests that we receive from the queue). Unfortunately, this problem has not yet been resolved, and the function continues to write data to the table only in small portions.

Company Valiotti Analytics offers a wide range of services related to data analysis: from designing the architecture of data warehouses, building data engineering processes to automating reports and building dashboards. The main thing in our business is to make the data work for the benefit of the company, which is why we work very carefully with the latest technologies.

Read our blog about cases and methods of data analysis LeftJoin, channel in Telegram and subscribe to our profile in Instagram…