The evolution of recruiting in the QA team

Hi all! My name is Vasily, and I lead the QA team at Sravni. Today I want to share my experience of hiring and onboarding testers. These processes changed as the company grew, and the pandemic made its own adjustments too. I hope the article will be useful to anyone involved, in one way or another, in hiring technical specialists. But first things first.

About the QA team

The QA department currently has 22 people, and we plan to grow to 27 in the near future.

Geographically, our quality assurance employees are spread across different regions, from Moscow and St. Petersburg to Yekaterinburg and Novosibirsk. Several people are based in the Tver and Moscow regions.

In QA, we do not have a division into front-end and back-end – all our specialists perform manual full-stack testing. Engineers work in three product areas: mobile application, web and financial marketplace.

As for our approach to hiring, we look for specialists who already have a solid theoretical grounding in testing. That is, in addition to practical skills, we pay attention to how well they know and understand the way QA processes are organized.

This does not mean you need to be a QA guru to work for us. Everyone has weak points, and a lot depends on the level of the position. But a certain theoretical basis must be there: a person has to understand how everything works. For our part, we help testers develop and suggest where to go next.

Recruitment in pre-modern times

Previously, the entire recruitment process took place offline: we invited candidates to the office and conducted interviews, first on general questions, previous experience and soft skills, and then on technical topics.

For example, the first meeting usually covered the following topics:

previous experience of the candidate, specialization (web, mobile, automation, load testing);

which team the candidate worked in, how many people were on it and what roles the candidate played.

During the conversation, we also assessed how sociable the candidate was, whether they took a reasonable, constructive position, and whether they could listen to arguments and reason.

At the technical interview, we tested hard skills, for example:

working with Git;

working with logs;

knowledge of TeamCity;

understanding the DOM;

finding their way around DevTools (for example, we asked where to see response codes in the 2xx, 3xx, 4xx and 5xx groups);

working with bug trackers;

maintaining test cases;

understanding of client-server processes (what happens, and what is processed where, when a request is sent);

working with databases (SQL queries).
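To make the DevTools question above concrete, here is a minimal Python sketch of how HTTP response codes fall into groups by their first digit. The `status_class` helper and the sample codes are illustrative assumptions, not something we used in interviews; the class names follow the standard HTTP terminology.

```python
from http import HTTPStatus

# Map the first digit of a status code to its standard class name.
STATUS_CLASSES = {
    1: "informational",
    2: "success",
    3: "redirection",
    4: "client error",
    5: "server error",
}


def status_class(code: int) -> str:
    """Return the class of an HTTP status code (hypothetical helper)."""
    if not 100 <= code <= 599:
        raise ValueError(f"not a valid HTTP status code: {code}")
    return STATUS_CLASSES[code // 100]


# Sample codes a candidate might spot in the Network tab.
for code in (200, 301, 404, 503):
    print(code, HTTPStatus(code).phrase, "->", status_class(code))
```

A candidate who can explain why a 4xx points at the request while a 5xx points at the server usually also knows where to look next: the request payload or the server logs.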

Hiring online

As you might guess, with the onset of the pandemic all hiring moved online. At first, we didn’t overthink it and simply carried the offline interview model over to online.

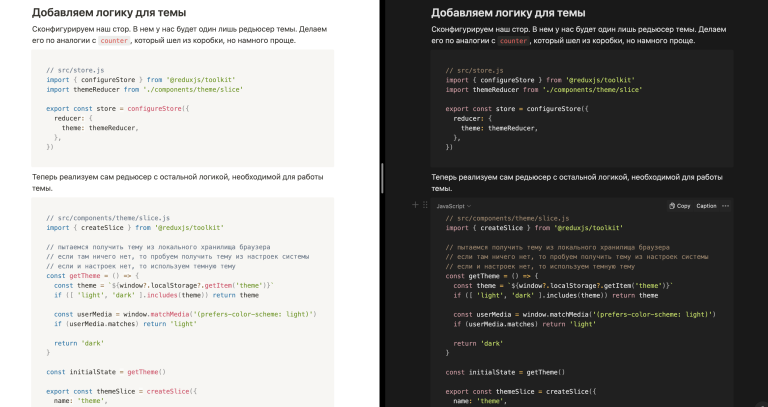

Around this time, we had just started actively recruiting new employees. Colleagues other than me needed to conduct interviews, and they might not be familiar with every detail. So, for reference, we wrote down some of the expected answers to the questions.

Another problem was that we had no unified system for evaluating candidates. Since there were more and more of them, something had to be done about it. We created a table for comparing candidates in which we assessed their skills and knowledge, to begin with simply as present (+) or absent (-).

As the number of candidates kept growing, they no longer fit in one table, and comparing them became inconvenient. So we decided to give each candidate their own separate table.

We also developed a grading system from junior to QA lead, the so-called interview matrix. Depending on the candidate’s level, we asked questions from the corresponding column (green for juniors, yellow for middles, red for seniors). On the right, we added checkboxes to mark each skill (yes / no).

But somewhere in the fall of 2021, we began to notice that this system had also become awkward. For example, a candidate would often come to interview for a middle position, but during the interview it turned out that they fell short of that level, and we switched to the questions for juniors. So we added a separate column of checkboxes to the right of each grade to make it clearer which questions the candidate had answered.
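The structure described above, questions grouped by grade with a separate set of yes / no marks per grade, can be sketched roughly as follows. This is only an illustration in Python: our actual matrix lives in a spreadsheet, and the class name, grade names, questions and candidate here are all hypothetical.

```python
from dataclasses import dataclass, field

# Assumed grade names; the real matrix runs from junior to QA lead.
GRADES = ("junior", "middle", "senior")


@dataclass
class CandidateSheet:
    """One candidate's sheet: a yes/no mark per question, kept per grade."""

    name: str
    # answers[grade][question] -> True (answered) or False (did not answer)
    answers: dict = field(default_factory=lambda: {g: {} for g in GRADES})

    def mark(self, grade: str, question: str, passed: bool) -> None:
        if grade not in self.answers:
            raise ValueError(f"unknown grade: {grade}")
        self.answers[grade][question] = passed

    def summary(self) -> dict:
        """Return (answered, asked) counts for every grade."""
        return {
            grade: (sum(marks.values()), len(marks))
            for grade, marks in self.answers.items()
        }


# The interview starts at the middle level, then drops to junior questions.
sheet = CandidateSheet("Candidate A")
sheet.mark("middle", "CI/CD pipeline stages", False)
sheet.mark("junior", "HTTP response code groups", True)
sheet.mark("junior", "Basic SQL SELECT", True)
print(sheet.summary())
```

Keeping the marks per grade means that switching levels mid-interview loses nothing: the sheet still shows which questions were asked at which level and how the candidate did on each.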

In addition, we interspersed the theoretical questions with practical tasks (up to 5-6 per interview). These could be tasks on resolving various situations, finding bugs, analyzing an error that has occurred, working with a database, and so on.
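As an illustration of what a database task of this kind might look like, here is a small self-contained sketch using Python’s built-in `sqlite3` module. The table, the data and the task itself are invented for this example and are not taken from our question bank.

```python
import sqlite3

# Hypothetical task: "Find the emails that are registered more than once."
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL);
    INSERT INTO users (email) VALUES
        ('anna@example.com'),
        ('boris@example.com'),
        ('anna@example.com');
    """
)

# One possible answer: group by email and keep groups with more than one row.
duplicates = conn.execute(
    """
    SELECT email, COUNT(*) AS occurrences
    FROM users
    GROUP BY email
    HAVING COUNT(*) > 1
    ORDER BY email
    """
).fetchall()

print(duplicates)
conn.close()
```

A task like this checks more than syntax: the candidate has to notice that the filter on the count belongs in `HAVING`, not in `WHERE`.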

In general, our table is constantly updated and expanded to better meet our expectations and the realities of the market.

By now we have accumulated more than 70 questionnaires from different candidates. So if someone wants feedback or applies to us again, we can always pull up the questionnaire and recall how the interview went. We have had cases where a candidate did not pass the interview, received feedback, and six months later came back and got an offer.

About onboarding

At the start, we had no onboarding as such. New people joined the team, but no one really understood what to do with them: sometimes there were tasks for them, and sometimes not.

To solve this problem, we drew up an onboarding plan for new employees. It was a week-by-week list of what to study, what to try and whom to meet in order to ease into the company’s work. The plan covered approximately 2.5 months. Every week I held a meeting with the newcomer and talked about the next team and / or tool.

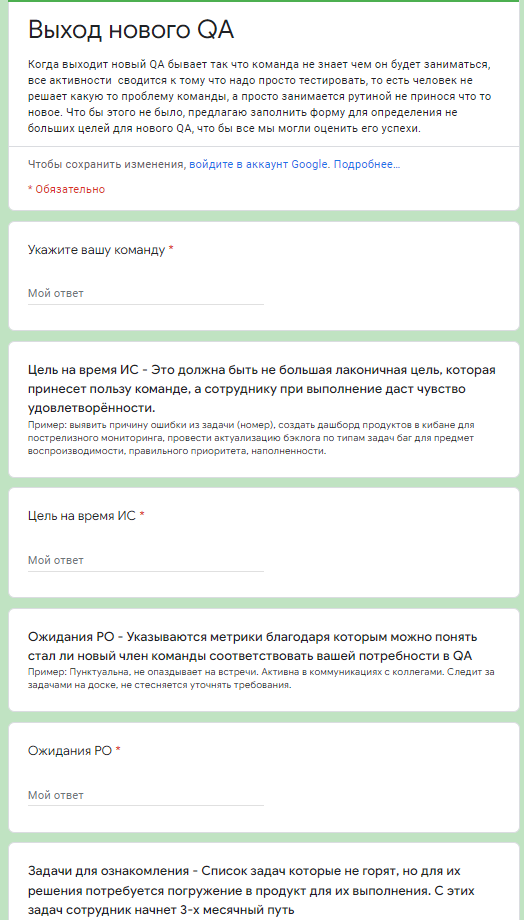

I also set goals for each newcomer’s trial period, for example: build a testing process in the team, write a product description, learn to work with the test environment, and so on, depending on the employee’s qualifications and the team.

Over time this process became inconvenient: the number of newcomers grew, and it became difficult for me to keep up with everything. So I changed the approach a little and moved onboarding to Confluence, where I collected all the materials on a single Welcome page for employees to read.

Next, I decided to involve teammates in helping newcomers settle in and in drawing up the list of tasks for the trial period. To do this, I sent product and technical leads a questionnaire asking what they expected from the employee and what the employee should do during the trial period. All of this was then assembled into a single list of tasks in Jira. But this approach didn’t work, because the tasks that came from product and tech leads often had little to do with a tester’s responsibilities (especially at the initial stage).

In the end, I settled on the following: I created an epic in Jira for employees on their trial period, and I now add all newcomers there. I draw up the list of goals myself, based on conversations with the QA team, product leads and tech leads, and I make sure it includes real testing tasks.

If I see at the interview stage that a candidate is a good fit overall but has some gaps in knowledge, I turn those gaps into goals for the trial period. For example, if a newcomer is weak in SQL or does not understand the philosophy of CI / CD, I set them the task of improving these skills during the trial period.

We still follow this onboarding model and make improvements to it from time to time.

What is the result?

Overall, despite some hiccups, we successfully moved all hiring and onboarding online. In a sense, the company even benefited from this, because we were able to expand the geography of hiring and systematize all the related processes.

In the future, we plan to improve the candidate selection system by shifting the emphasis from questionnaires to test scenarios that let candidates demonstrate their knowledge in more detail. We may introduce partial automation, and we will continue to improve onboarding based on feedback from employees. We are also thinking about introducing a grading system.

If you have any comments or questions, I’ll be happy to answer!