The evolution of a high-load application: the case of a regional public services portal

“Tomorrow is the 20th, which means the storm will hit again. There is no stopping it; all we can do is prepare and hope that this time it blows over, that a miracle happens and our lake ferry conquers the ocean.” Thoughts like these haunted the team supporting the municipal services portal a few years ago. How we got into this situation, and how we found a way out of it, is described below.

How it all began

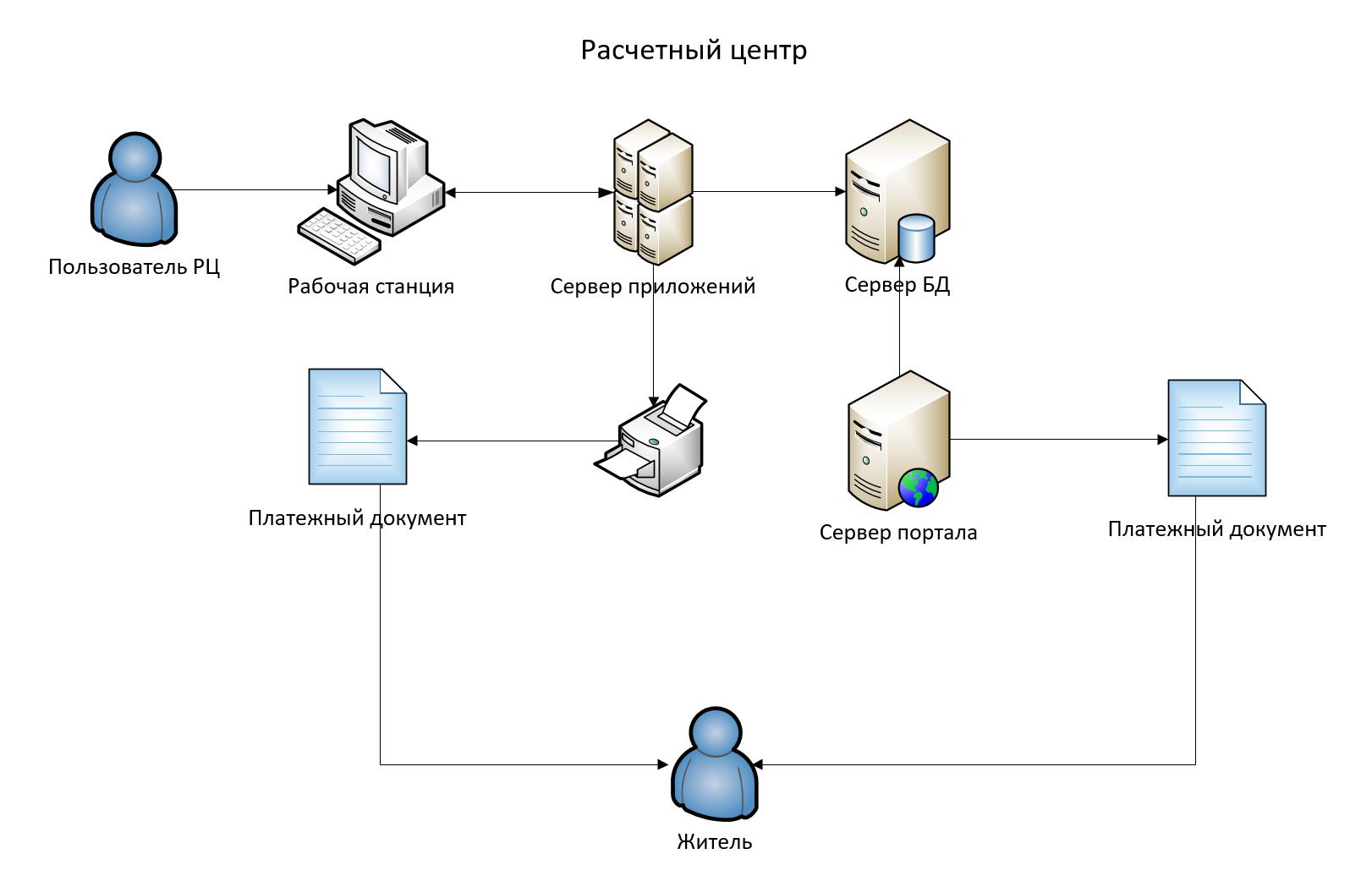

In the distant 1990s, the housing and utilities sector went through a development boom: new technologies and automated information systems were introduced, and new equipment was purchased. One thing, however, stayed practically unchanged for a long time: the rent bill. Those same receipts were renamed payment documents and acquired barcodes and a detailed breakdown of charges, but they remained pieces of paper. The typical workflow of the settlement center of a housing and utilities enterprise or a resource supplier was as follows:

Gradually, dial-up Internet gave way to broadband access, and the thought arose: why not receive payment documents online, in electronic form? At the same time, the housing and utilities sector was being shaken by reorganization: MPPs (municipal production enterprises of housing and communal services) were replaced by MUPs (municipal unitary enterprises) and DEZs (directorates of a single customer). As a result of all these transformations, the IT departments of the housing and utilities enterprises were spun off, and all kinds of settlement centers were born on their basis. In essence, the settlement centers calculated the rent and provided information support to residents.

Growth stage, the 2000s

It was then that the first information portals offering electronic services to residents appeared. The first users numbered in the dozens: some did not trust electronic payment documents, others had not heard of the innovation, and mobile applications for public services were rare. These portals worked much like the familiar information systems and, of course, were never designed as a high-load architecture.

Years passed and the portals improved: it became possible to submit meter readings, generate certificates, and so on. It was at this time that the first “clouds” appeared on the horizon: more and more users registered on the portals and requested data. In the distance, the first wave of load was approaching.

The team (hereinafter Team 1) took the following optimization measures:

– Reduced the size of the PDF payment document from 0.5 MB to 0.2 MB

– Created a queue for generating payment documents

– Stored generated payment documents to serve repeated requests

– Created a replica of the main database used only by the portal
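The queue and the stored-document measures from the list above can be sketched together. This is a minimal illustration, not Team 1's implementation: `DocumentService`, `render_pdf`, and the `(account_id, period)` key are hypothetical names, and the in-memory dictionary stands in for whatever storage the real system used.

```python
import queue
import threading


def render_pdf(account_id: str, period: str) -> bytes:
    # Hypothetical stand-in for the real (slow) PDF renderer.
    return f"payment document {account_id} {period}".encode()


class DocumentService:
    """Generates each document once, via a queue, and stores it for repeats."""

    def __init__(self, renderer=render_pdf):
        self._renderer = renderer
        self._cache = {}        # (account_id, period) -> PDF bytes
        self._pending = set()   # keys already waiting in the queue
        self._lock = threading.Lock()
        self._jobs = queue.Queue()
        threading.Thread(target=self._worker, daemon=True).start()

    def request(self, account_id: str, period: str):
        """Return the stored document, or enqueue generation and return None."""
        key = (account_id, period)
        with self._lock:
            if key in self._cache:
                return self._cache[key]
            if key not in self._pending:
                # Enqueue only once, however many times the user asks.
                self._pending.add(key)
                self._jobs.put(key)
        return None

    def _worker(self):
        # A single worker keeps rendering load on the server bounded.
        while True:
            key = self._jobs.get()
            pdf = self._renderer(*key)
            with self._lock:
                self._cache[key] = pdf
                self._pending.discard(key)
            self._jobs.task_done()

    def wait_idle(self):
        """Block until every queued document has been generated."""
        self._jobs.join()
```

A caller that gets `None` back would typically show a "document is being prepared" page and ask again later; a repeated request for the same account and period is then served from storage without rendering again.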

For several years the situation seemed stable. The users of each individual portal numbered in the thousands; there was no storm yet, but the boat rocked noticeably. The decentralization of the settlement centers played into the developers' hands.

The big leap

A new milestone was the development of a municipal services portal at the regional level. A single entry point for every resident of the republic was a tempting idea; it would also raise the standing of the government site to the level of the best commercial or banking sites, with each resident of the region receiving many different services in one place. Among the most popular would be paying for housing and utilities and submitting meter readings.

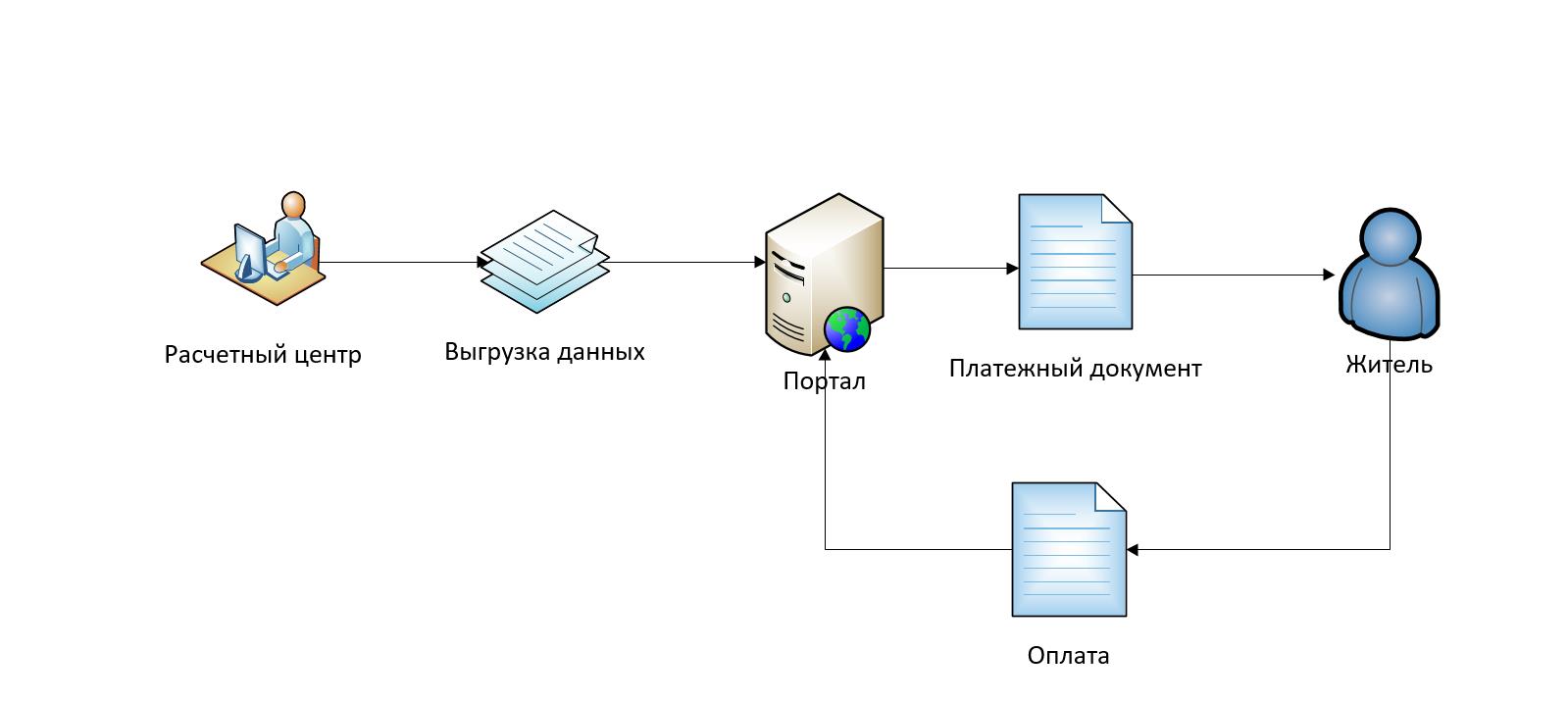

Thus, the next step was to separate the operational information of the settlement centers from the information shown in the payment document. For this, a simple data transfer format and a database for storing the information were developed, and the storage space required for 5 years of operation was estimated.

Key decisions made at this stage:

– Separating the informational part from the operational database;

– Developing a database to store data in this format;

– Combining the data of the districts' settlement centers into a single database;

– Integrating with the portal's processes and developing services.

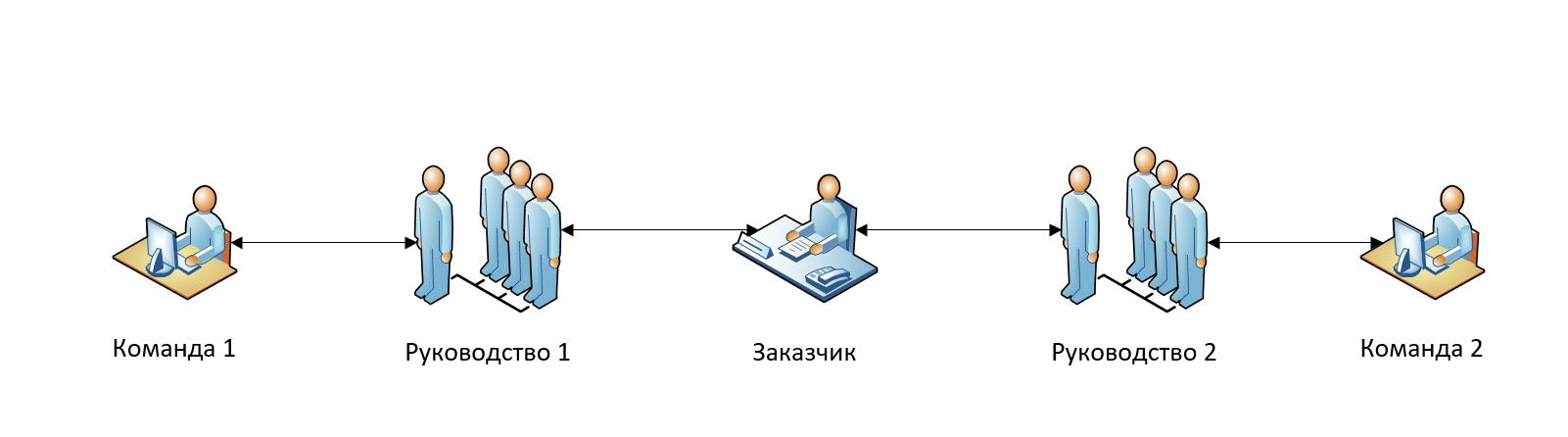

At this stage, the solution turned from a full-fledged application into a backend, since the portal gave users a single interface. The portal had its own team, which developed it independently of the settlement centers' existing solution. So a second team (hereinafter Team 2) and a new vendor enter our story. As we will see later, this significantly affected how the problems developed and were resolved. The essence of the design solution was as follows:

When designing the database, we adopted a simple one-to-one mapping of the format onto tables and chose PostgreSQL 9.3 as the DBMS (a very current version at the time). The format consisted of 9 flat files, each loaded with the COPY command (read: very quickly) into the tables of a specific settlement center in the portal database (each settlement center had its own registration number). In some settlement centers, the number of records needed to generate payment documents reached 1,000,000 per month. That came to 12,000,000 per year and 60,000,000 over 5 years. The number of requests to this database would grow to the combined total of all users of the district portals and could reach tens of thousands. There was something to think about.
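The row-count arithmetic above is easy to reproduce and extend to a rough size estimate. The sketch below is only an illustration: the function names are made up, and the average row size of 200 bytes is an assumption, not a figure from the project.

```python
def projected_rows(rows_per_month: int, years: int) -> int:
    """Rows accumulated by one settlement center over the given number of years."""
    return rows_per_month * 12 * years


def projected_size_gib(rows: int, avg_row_bytes: int) -> float:
    """Rough table size in GiB, ignoring indexes and storage overhead."""
    return rows * avg_row_bytes / 2**30


rows_one_year = projected_rows(1_000_000, years=1)     # 12,000,000
rows_five_years = projected_rows(1_000_000, years=5)   # 60,000,000

# ASSUMPTION: 200 bytes per row is a placeholder; measure real rows instead.
size_estimate = projected_size_gib(rows_five_years, avg_row_bytes=200)
print(f"{rows_five_years:,} rows, about {size_estimate:.1f} GiB before indexes")
```

An estimate like this covers a single large settlement center; the combined database is the sum over all centers, plus indexes.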

Having no experience of this kind, Team 1 took the following steps to reduce the potential load:

– The tables were partitioned by settlement center and by month;

– Data loading was scheduled at different times for different settlement centers.

Growth problems

The portal was launched, and the prepared plans collided with reality:

– The number of users exceeded the estimate very quickly

– Queries went to the master table, but the DBMS still scanned all the partitions

– Parasitic load on the database server: the same server hosted other, incomparably larger databases whose data arrived at random moments, and loading it consumed all available resources

– The payment document generation service was slow because of an inefficient implementation

– User load was not spread evenly over the month but concentrated in two periods:

This is the moment described at the beginning of the post. The problem was hard to diagnose because trouble seemed to appear everywhere at once. Team 1 and Team 2, each equally favored by its own management, took steps to get out of the situation, but there was practically no communication between them:

Team 2 took what seemed a logical and useful step: generation of the payment document was now requested as soon as the user logged in, on the expectation that by the time the user had clicked through to the right page, the document would already be generated and could be served quickly.

Meanwhile, Team 1 heroically solved one problem a month, each time convincing the customer that this was where the root of the problem lay:

– Optimized the SQL (a several-fold performance gain);

– Moved the portal's database to a server separate from the other databases;

– Moved payment document generation into a separate application and optimized it as well;

– Revised access to the master table and changed the PostgreSQL version;

– Revised the access once again (now only one specific partition was queried through the master table; again a several-fold speedup).

The teams' heroic efforts led to local successes (for 2-3 months at a time it seemed that the problem was solved). But reality kept throwing in new inputs:

– The database already held several years of data and had grown beyond 1 TB;

– The number of users had already reached hundreds of thousands.

While the fight was on, Team 2 set up automated service tests, so that any performance problem became known within minutes and was automatically escalated by email to the highest levels of management.

Meanwhile, in Team 1:

– The time needed to archive the database began to exceed the permitted window (the service became unavailable);

– A single call to the service touched many months at once and therefore generated many queries;

– The DBMS executed queries more slowly as the number of tables (partitions) grew;

– Users who only wanted to submit meter readings still triggered payment document generation (recall Team 2's decision).

It became clear that, from a technical point of view, the solution had reached its limit and it was time to start analyzing user behavior in order to optimize processes or radically change technologies.

As a result of the analysis (a topic for a separate article), the following actions were taken:

– The data was trimmed to the last 3 years, since nobody requested earlier periods; since then, the oldest year has been cut off annually;

– We reworked data access so that queries address a specific partition directly instead of going through the master table (this reduced the load on the DBMS).
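Addressing a partition directly means the application, not the DBMS, works out which table holds the requested month. A minimal sketch of that idea follows. The naming scheme `payment_rows_c<registration number>_<YYYYMM>`, the `account_id` column, and both function names are assumptions made for illustration; the article does not describe the real schema.

```python
import re
from datetime import date


def partition_name(center_reg_no: int, period: date,
                   base: str = "payment_rows") -> str:
    """Build the table name of one partition (hypothetical naming scheme)."""
    if not re.fullmatch(r"[a-z_][a-z0-9_]*", base):
        raise ValueError("unsafe base table name")
    if center_reg_no <= 0:
        raise ValueError("registration number must be positive")
    # One partition per settlement center and per month.
    return f"{base}_c{center_reg_no:04d}_{period:%Y%m}"


def build_query(center_reg_no: int, period: date, account_id: str):
    """Return SQL text and parameters that hit exactly one partition."""
    table = partition_name(center_reg_no, period)
    # The table name is built from validated parts; the value stays a parameter.
    sql = f"SELECT * FROM {table} WHERE account_id = %s"
    return sql, (account_id,)


sql, params = build_query(17, date(2016, 3, 1), "A-1")
print(sql)     # SELECT * FROM payment_rows_c0017_201603 WHERE account_id = %s
print(params)  # ('A-1',)
```

The trade-off is that the partitioning scheme leaks into application code, so any change to it has to be mirrored in the name-building function.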

Present

Now the system is quite stable, stays within the prescribed time limits, and meets its non-functional requirements, but clouds have again appeared on the horizon:

– A further increase in the number of users;

– An increase in the number of households served;

– Growing complexity of the services provided;

– Finer granularity of the data available to users.

Therefore, a plan has been drawn up and the following measures are being prepared:

– Analysis of user behavior: how many users actually view the payment document, and how many simply pay the amount, as they would for a cell phone;

– Generating payment documents in advance, at the time of lowest load, for those users who usually view them;

– A transition to alternative technologies (yes, many readers who have reached this point have much more advanced solutions that would have solved every issue from the start, on the resources of a mobile phone).

It would seem we could end on a big optimistic note here. Everyone did well: those who did the work and those who would have done it better right away. But tomorrow there will be new high-load projects and new unfamiliar problems. How do we prepare for them now, and what can be foreseen when there is no data yet?
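The first two measures in the list can be combined: the behavior analysis yields the set of users worth pre-generating for. The sketch below assumes a view log of `(user_id, period)` pairs and a 50% threshold; the log format, the threshold, and the function name are all hypothetical.

```python
from collections import Counter


def users_to_pregenerate(view_log, months_considered: int,
                         min_share: float = 0.5) -> set:
    """Pick users who viewed their document in at least min_share of months.

    view_log is an iterable of (user_id, period) pairs; repeated views
    within the same period count once.
    """
    months_viewed = Counter()
    seen = set()
    for user_id, period in view_log:
        if (user_id, period) not in seen:
            seen.add((user_id, period))
            months_viewed[user_id] += 1
    return {
        user_id
        for user_id, months in months_viewed.items()
        if months / months_considered >= min_share
    }


log = [
    ("u1", "2016-01"), ("u1", "2016-02"), ("u1", "2016-02"),
    ("u2", "2016-01"),
]
print(users_to_pregenerate(log, months_considered=3))  # {'u1'}
```

Documents for the selected users would then be generated during the quietest hours, while everyone else keeps the on-demand path.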

A systematic approach to solving problems

Can we turn this experience into a methodology for approaching problems in high-load projects? Oddly enough, the answer is YES: everything has already been invented for us, in Eliyahu Goldratt's Theory of Constraints (TOC). There are just 5 simple steps:

1. Identify the system's constraint(s).

2. Decide how to exploit the system's constraint(s).

3. Subordinate everything else to this decision.

4. Elevate the system's constraint(s).

5. ATTENTION! If a constraint has been broken in the previous steps, return to step 1, but do not allow inertia to become a system constraint.

The theory and its steps are well described in the literature listed at the end of the article, but here is my view of them as applied to the process at hand:

1. Constraint: the time it takes to generate a payment document.

2. Decision: generate all payment documents in advance, when the data is loaded.

3. Subordinate to the decision: when a user makes a request, serve the ready-made document.

4. Elevate the constraint: optimize the payment document generation time.

5. Abandon advance generation for ALL households and identify the new constraint.

Such an approach would have shortened the road to a solution by several years, with minimal programmer effort. The post does not cover every problem that arose and omits some details of the solutions, since they are not essential to the proposed approach.
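Step 1 is the one that was hardest in practice, because trouble seemed to appear everywhere at once. One simple way to find the constraint is to time every stage of a request and look at the largest total. The sketch below illustrates that idea only; `StageTimer` and the stage names are hypothetical and not part of the system described here.

```python
import time
from contextlib import contextmanager


class StageTimer:
    """Accumulates wall-clock time per named stage of request handling."""

    def __init__(self):
        self.totals = {}  # stage name -> total seconds

    @contextmanager
    def stage(self, name: str):
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed = time.perf_counter() - start
            self.totals[name] = self.totals.get(name, 0.0) + elapsed

    def constraint(self) -> str:
        """Return the stage with the largest accumulated time."""
        return max(self.totals, key=self.totals.get)


timer = StageTimer()
with timer.stage("query"):
    time.sleep(0.01)   # stands in for the database query
with timer.stage("render"):
    time.sleep(0.05)   # stands in for payment document generation
print(timer.constraint())
```

With numbers like these collected per stage, steps 2 to 4 can be aimed at one place at a time instead of at every suspect simultaneously.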

What to read on the topic:

1. “The Goal: A Process of Ongoing Improvement”, Eliyahu Goldratt

2. “Theory of Constraints. Basic approaches, tools and solutions”, Dmitry Egorov

3. “Goldratt’s Theory of Constraints: A Systems Approach to Continuous Improvement”, William Dettmer