Technologies for Checking Total Dictation: What Can Be Improved?

I am on the jury of the World AI & Data Challenge, an international competition in which technology developers tackle social problems such as fighting poverty, helping people with hearing and vision impairments, and improving feedback between citizens and government organizations. The second stage of the competition is now underway and will last until October. In this stage we select the best solutions for further implementation. Since we at ABBYY work a lot with texts and their meaning, I was most interested in the task of checking texts for the Total Dictation project. Let’s use this problem as an example to figure out why natural language processing is one of the most underestimated areas of modern machine learning, and discuss why, even when it comes to checking a dictation, everything is “a little more complicated than it seems”. And more interesting, of course.

So, the task is to create an algorithm for checking the Total Dictation. What could be easier, it would seem? There are correct answers and there are the participants’ texts: just take them and compare. Everybody knows how to compare strings. And this is where the interesting part begins.

Such different commas; or semicolons?

Natural language is a complex thing that often allows more than one interpretation. Even in a task like checking a dictation (where, at first glance, there is only one correct solution), you have to assume from the very beginning that there may be correct options other than the author’s. The competition organizers have even thought about this: they provide several acceptable variants. At least sometimes. What matters here is that the compilers are unlikely to be able to list all the correct options, so the participants should perhaps consider a model pre-trained on a large corpus of texts not directly related to the dictation. In the end, depending on how they understand the context, a person may put a comma or leave it out, or use a semicolon; in some cases anything is possible: a colon, a dash (or even parentheses).
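To make the “several acceptable variants” idea concrete, here is a minimal sketch of checking one answer against a list of accepted reference texts and keeping the closest one. It is only an illustration, not the organizers’ method: the function names (`tokenize`, `diff_against`, `check`) and the tokenization rule are assumptions, and the comparison relies on Python’s standard `difflib` rather than on any pre-trained language model.

```python
import re
from difflib import SequenceMatcher

# Assumed tokenization: a run of word characters, or one punctuation mark.
TOKEN_RE = re.compile(r"\w+|[^\w\s]")


def tokenize(text):
    """Split text into word tokens and single punctuation marks."""
    return TOKEN_RE.findall(text)


def diff_against(reference, answer):
    """Return the edit operations where the answer departs from the reference."""
    matcher = SequenceMatcher(a=reference, b=answer, autojunk=False)
    return [
        (tag, reference[i1:i2], answer[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


def check(answer_text, accepted_variants):
    """Compare the answer with every accepted variant and keep the closest.

    Returns (closest_variant, list_of_differences). An empty list of
    differences means the answer matches one of the accepted variants.
    """
    answer = tokenize(answer_text)
    best = None
    for variant in accepted_variants:
        errors = diff_against(tokenize(variant), answer)
        if best is None or len(errors) < len(best[1]):
            best = (variant, errors)
    return best


variants = [
    "He came home; it was late.",
    "He came home, it was late.",
]
closest, errors = check("He came home it was late.", variants)
for tag, expected, written in errors:
    print(tag, expected, written)
```

The sketch only handles variants that someone has listed explicitly. It does nothing for the correct options the compilers did not foresee, which is exactly where a model trained on a large corpus would have to take over.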

The fact that it is a dictation and not an essay that needs to be evaluated is not a bug but a feature. Automatic essay grading systems are very popular in the USA: 21 states use automated essay-checking solutions for the GRE. Only recently it was revealed that these systems give high marks to longer texts that use more complex vocabulary (even if the text itself is meaningless). How was this discovered? MIT students developed a special program, the Basic Automatic BS Essay Language (BABEL) Generator, which automatically generated strings of complex words. Automated systems rated these “essays” very highly. Testing modern systems based on machine learning is a pleasure. Another equally striking example: former MIT professor Les Perelman gave the e-rater system from ETS, the organization that develops and grades the GRE and TOEFL exams, a 5,000-word essay by Noam Chomsky to check. The program found 62 non-existent grammatical errors and 9 missing commas. The conclusion: algorithms do not work well with meaning yet, because we ourselves are very bad at defining what it is. Creating an algorithm that checks a dictation has practical value, but the task is not as simple as it seems. And the point is not only the ambiguity of the correct answer, which I mentioned above, but also that the dictation is dictated by a person.

The personality of the dictator

Dictation is a complex process. The way the text is read by the “dictator” (as the Total Dictation organizers jokingly call those who help run it) can affect the final quality of the work. An ideal checking system would correlate the writers’ results with the quality of the dictation, using speech-to-text. Similar solutions are already used in education. For instance, Third Space Learning is a system created by scientists from University College London. It uses speech recognition to analyze how the teacher conducts the lesson and, based on this information, recommends how to improve the learning process. For example, if a teacher speaks too fast or too slowly, too quietly or too loudly, the system sends them an automatic notification. Incidentally, the algorithm can also tell from a student’s voice that they are losing interest and getting bored. Different dictators can affect the final results for different participants. That is an injustice, and what can remove it? Right! An artificial intelligence dictator! Repent, our days are numbered. Okay, seriously: online, you can either give everyone the same audio recording or build an assessment of the dictator’s quality into the algorithm, however seditious that sounds. Those whose text was dictated faster and less clearly could count on extra points as “hazard pay”. Anyway, if we have speech-to-text, then another idea comes to mind.

Robot and man: who will write the dictation better?

If we are running speech recognition on the broadcast anyway, then creating a virtual participant in the dictation suggests itself. It would be cool to compare the results of AI and humans, especially since similar experiments in various educational disciplines are already being actively carried out around the world. For example, in 2017 in Chengdu, China, an AI took the gaokao, the state exam that is roughly the Chinese counterpart of the Russian Unified State Exam. It scored 105 points out of a possible 150, that is, it passed with a solid “three”, a satisfactory grade on the Russian five-point scale. It is worth noting that, as in the Total Dictation problem, the hardest thing for the algorithm was understanding the language, in this case Chinese. In Russia, Sberbank held a competition last year to develop algorithms for passing exams in the Russian language. The Unified State Exam consisted of tests and an essay on a given topic. The tests for robots were made more difficult than usual and consisted of three stages: completing the task itself, highlighting examples according to the given rules and wording, and recording the answer correctly.

Let’s return from the discussion of “what else can be done” to the dictation task itself.

Error map

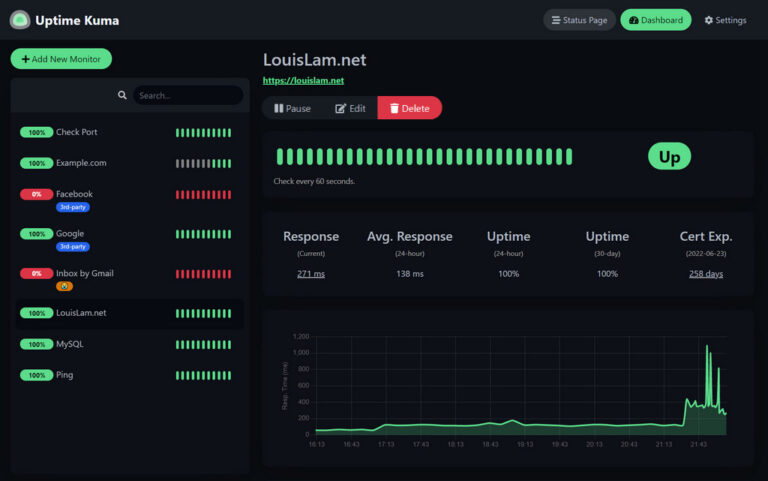

Among other things, the competition organizers ask for a heatmap of errors. Tools like heatmaps show where and how often people make mistakes; logically, they err more often in difficult places. In this sense, in addition to discrepancies with the reference variants, you can build a heatmap from discrepancies with other participants’ texts. Such collective validation of each other’s results is easy to implement, yet it can significantly improve the quality of checking.
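A heatmap of this kind can be sketched in a few lines. The example below is an assumption-laden illustration rather than the competition’s required format: it takes texts that are already split into tokens, uses the standard `difflib` alignment, and the names `error_positions`, `error_heatmap`, and `widely_shared_deviations`, as well as the 0.5 threshold, are hypothetical.

```python
from collections import Counter
from difflib import SequenceMatcher


def error_positions(reference, answer):
    """Indices of reference tokens that the answer changes, omits, or pads."""
    positions = set()
    matcher = SequenceMatcher(a=reference, b=answer, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "delete"):
            positions.update(range(i1, i2))
        elif tag == "insert":
            # Extra tokens are attributed to the reference token they precede
            # (or to the last token if they come at the very end).
            positions.add(min(i1, len(reference) - 1))
    return positions


def error_heatmap(reference, answers):
    """Share of participants who deviated at each reference token."""
    counts = Counter()
    for answer in answers:
        counts.update(error_positions(reference, answer))
    total = len(answers)
    return [counts[i] / total if total else 0.0 for i in range(len(reference))]


def widely_shared_deviations(heatmap, threshold=0.5):
    """Positions where at least `threshold` of participants deviate.

    These are either genuinely hard places or acceptable variants that the
    reference is missing, so they are candidates for manual review.
    """
    return [i for i, share in enumerate(heatmap) if share >= threshold]


reference = "he came home , it was late".split()
answers = [
    "he came home , it was late".split(),
    "he came home it was late".split(),
    "he came home it was late".split(),
    "he come home , it was late".split(),
]
heatmap = error_heatmap(reference, answers)
for token, share in zip(reference, heatmap):
    print(f"{token:>5}  {share:.2f}")
print(widely_shared_deviations(heatmap))
```

Positions where a large share of participants deviate in the same way are worth a second look by a human: they may mark a difficult spot, or a legitimate variant that the reference simply does not list.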

Total Dictation already collects somewhat similar statistics, but this is done manually with the help of volunteers. For example, thanks to their work we learned that participants most often make mistakes in the words “slow”, “too much”, and “planed”. But the more participants there are in the dictation, the harder it becomes to collect such data quickly and efficiently. Several educational platforms already use similar tools. For example, one of the popular applications for learning foreign languages uses such technologies to optimize and personalize lessons. To do this, its developers built a model that analyzes how often particular combinations of errors occur among millions of users. This helps predict how quickly a user may forget a particular word. The complexity of the topic being studied is also taken into account.

In general, as my father says: “All tasks are divided into bullshit and dead ends. Bullshit tasks are the ones that have already been solved or have not yet been started. Dead ends are the tasks you are solving right now.” Even around the problem of checking texts, machine learning lets you ask a lot of questions and build a bunch of add-ons that can qualitatively change the end user’s experience. We will find out what the participants of the World AI & Data Challenge come up with by the end of the year.