Simulators of computer systems: the familiar full-platform simulator and unknown to anyone tactact and tracks

In the first part I talked about what simulators are in general, and also about the levels of modeling. Now, on the basis of that knowledge, I propose to dive a little deeper and talk about full-platform simulation, how to collect tracks, what to do with them later, and also about beat-based microarchitectural emulation.

Full platform simulator, or “Alone in the field – not a warrior”

If you want to investigate the operation of one particular device, for example, a network card, or write firmware or a driver for this device, then such a device can be modeled separately. However, using it in isolation from the rest of the infrastructure is not very convenient. To start the corresponding driver, you will need a central processor, memory, access to the bus for data transfer and so on. In addition, the driver needs an operating system (OS) and network stack. In addition, a separate packet generator and response server may be required.

A full-platform simulator creates an environment for launching a full software stack, which includes everything from the BIOS and the bootloader to the OS and its various subsystems, such as the same network stack, drivers, user-level applications. To do this, it implements software models of most computer devices: processor and memory, disk, input-output devices (keyboard, mouse, display), as well as the same network card.

Below is a block diagram of the Intel x58 chipset. In a full-platform computer simulator on this chipset, it is necessary to implement most of the listed devices, including those that are inside the IOH (Input / Output Hub) and ICH (Input / Output Controller Hub), which are not drawn in detail on the block diagram. Although, as practice shows, there are not so few devices that are not used by the software that we are going to launch. Models of such devices can not be created.

Most often, full-platform simulators are implemented at the processor instruction level (ISA, see previous article). This allows you to relatively quickly and inexpensively create the simulator itself. The ISA level is also good because it remains more or less constant, unlike, for example, the API / ABI level, which changes more often. In addition, the implementation at the instruction level allows you to run the so-called unmodified binary software, that is, run the already compiled code without any changes, exactly as it is used on real hardware. In other words, you can make a copy (“dump”) of the hard drive, specify it as the image for the model in a full-platform simulator, and voila! – The OS and other programs are loaded in the simulator without any additional actions.

Simulator Performance

As mentioned just above, the process of simulating the entire system as a whole, that is, all its devices, is a fairly quick event. If you also implement all this at a very detailed level, for example, microarchitectural or logical, then the implementation will become extremely slow. But the level of instructions is a suitable choice and allows the OS and programs to run at speeds sufficient for the user to interact comfortably with them.

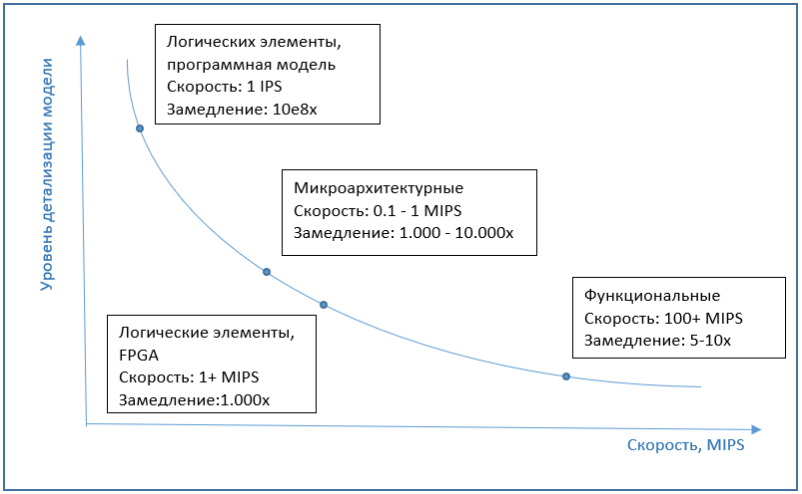

Here it will just be appropriate to touch on the topic of simulator performance. Usually it is measured in IPS (instructions per second), more precisely in MIPS (millions of IPS), that is, the number of processor instructions executed by the simulator in one second. At the same time, the speed of the simulation also depends on the performance of the system on which the simulation itself works. Therefore, it may be more correct to talk about the “slowdown” of the simulator compared to the original system.

The most common full-platform simulators on the market, the same QEMU, VirtualBox or VmWare Workstation, have good performance. It may not even be noticeable to the user that the work is going on in the simulator. This is due to the special virtualization feature implemented in processors, binary translation algorithms, and other interesting things. This is all the topic for a separate article, but if briefly, virtualization is a hardware feature of modern processors that allows simulators not to simulate instructions, but to execute them directly in a real processor, unless, of course, the simulator and processor architectures are similar. Binary translation is the translation of the guest machine code into the host code and subsequent execution on a real processor. As a result, the simulation is only slightly slower, every 5-10 times, and often generally works at the same speed as the real system. Although this is influenced by a lot of factors. For example, if we want to simulate a system with several dozens of processors, then the speed will immediately drop by several dozens of times. On the other hand, simulators like Simics in recent versions support multiprocessor host hardware and effectively parallelize simulated cores to the cores of a real processor.

If we talk about the speed of microarchitectural simulation, then it is usually several orders of magnitude, approximately 1000-10000 times, slower than execution on a regular computer, without simulation. And implementations at the level of logical elements are slower by several orders of magnitude. Therefore, FPGA is used as an emulator at this level, which can significantly increase performance.

The graph below shows an approximate dependence of the simulation speed on the detail of the model.

Bottom-line simulation

Despite the low speed of execution, microarchitectural simulations are quite common. Modeling the internal blocks of the processor is necessary in order to accurately simulate the execution time of each instruction. This may lead to misunderstanding – because it would seem, why not just pick up and program the execution time for each instruction. But such a simulator will work very inaccurately, since the execution time of the same instruction may differ from call to call.

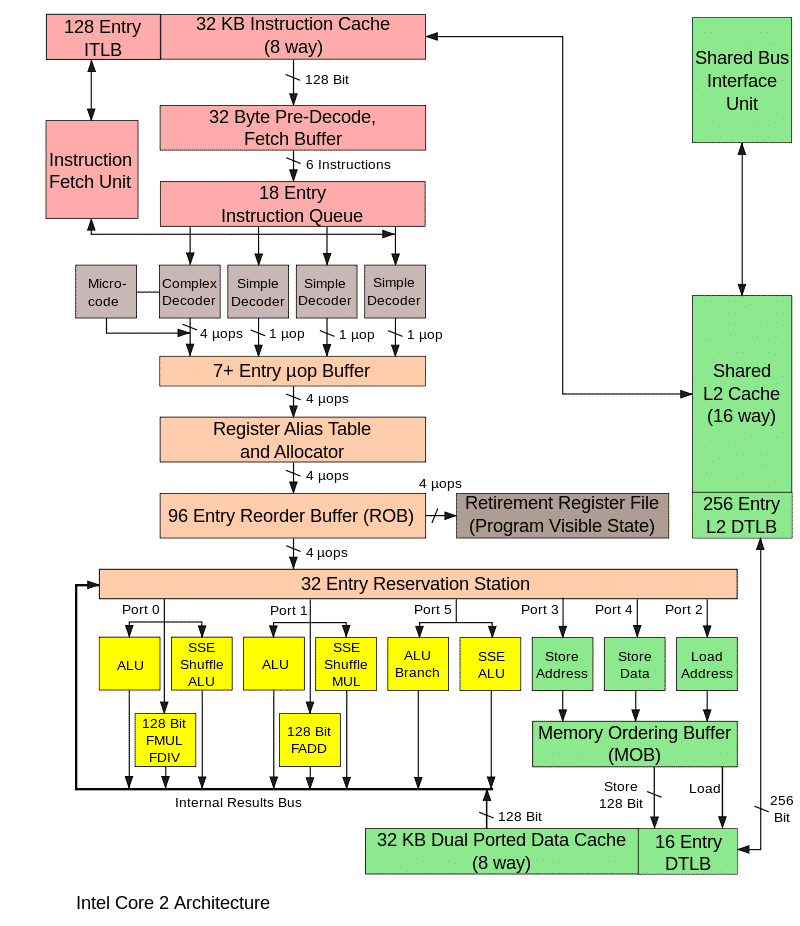

The simplest example is a memory access instruction. If the requested memory location is available in the cache, then the execution time will be minimal. If the cache does not have this information (“cache miss”, cache miss), this will greatly increase the execution time of the instruction. Thus, a cache model is required for accurate simulation. However, the business is not limited to the cache model. The processor will not just wait for data from memory if it is not in the cache. Instead, he will begin to execute the following instructions, choosing ones that are independent of the result of reading from memory. This is the so-called “out of order execution” (OOO, out of order execution), necessary to minimize processor downtime. Taking all this into account when calculating the execution time of instructions will help modeling the corresponding processor units. Among these instructions, executed while the result of reading from memory is expected, a conditional branch operation may occur. If the result of the condition is unknown at the moment, then again the processor does not stop execution, but makes an “assumption”, performs the corresponding transition and continues to proactively execute instructions from the transition point. Such a block, called branch predictor, must also be implemented in a microarchitectural simulator.

The picture below shows the main blocks of the processor, it is not necessary to know it, it is given only in order to show the complexity of the microarchitectural implementation.

The operation of all these units in a real processor is synchronized by special clock signals, similarly occurs in the model. Such a microarchitectural simulator is called cycle accurate. Its main purpose is to accurately predict the performance of the processor being developed and / or calculate the execution time of a certain program, for example, some benchmark. If the values are lower than necessary, then you will need to refine the algorithms and processor units or optimize the program.

As shown above, tick-wise simulation is very slow, so it is used only when studying certain moments of the program, where you need to find out the real speed of the programs and evaluate the future performance of the device, the prototype of which is being modeled.

At the same time, a functional simulator is used to simulate the rest of the program’s time. How does such combined use happen in reality? First, a functional simulator is launched, on which the OS and everything necessary to run the investigated program are loaded. After all, we are not interested in either the OS itself, or the initial stages of launching the program, its configuration, and so on. However, we cannot skip these parts and immediately proceed to program execution from the middle. Therefore, all these preliminary steps are run on a functional simulator. After the program is executed until the moment of interest to us, there are two options. You can replace the model with a push-pull model and continue execution. The simulation mode, in which executable code is used (i.e., regular compiled program files), is called execution driven simulation. This is the most common simulation. Another approach is also possible – trace driven simulation.

Track based simulation

It consists of two steps. Using a functional simulator or on a real system, the program action log is collected and written to a file. Such a log is called a trace. Depending on what is being investigated, the route may include executable instructions, memory addresses, port numbers, information about interrupts.

The next step is to “play” the track, when the beat simulator reads the track and follows all the instructions written in it. At the end, we get the execution time of this piece of the program, as well as various characteristics of this process, for example, the percentage of cache hit.

An important feature of working with tracks is determinism, that is, by starting the simulation as described above, over and over again we reproduce the same sequence of actions. This makes it possible, by changing the parameters of the model (the size of the cache, buffers and queues) and using various internal algorithms or by tuning them, to investigate how a particular parameter affects the system performance and which option gives the best results. All this can be done with the prototype model of the device before creating a real hardware prototype.

The complexity of this approach lies in the need to pre-run the application and collect the trace, as well as the huge file size with the trace. The pluses include the fact that it is enough to simulate only the part of the device or platform that is of interest, while the simulation of execution requires, as a rule, a complete model.

So, in this article we examined the features of full-platform simulation, talked about the speed of implementations at different levels, beat simulation and traces. In the next article I will describe the main scenarios for using simulators, both for personal purposes and from the point of view of development in large companies.

![Thermonuclear fusion [своими руками]](https://prog.world/wp-content/uploads/2022/03/2841d5b8e4c70d380c685a6e1d5969ef-768x403.png)