Reusable Python Logging Template for All Your Data Science Applications

The ideal way to debug and track applications is with well-defined, informative, and well-structured logs. They are a necessary component of any project, whether small, medium or large, in any programming language, not just Python. Don't use print() or the default root logger; set up project-level logging instead. Ahead of the launch of a new Data Science course, we have translated an article whose author decided to share his logging template. It is worth noting that many specialists, from data science professionals to software developers of various levels, have liked this template.

I started working with the Python logging module a couple of years ago and have since studied countless tutorials and articles online on how to use it as efficiently as possible in my projects.

They all explain well how to set up a logging system for a single Python script. However, it is nearly impossible to find an article that explains how to configure the Python logging library for application-wide use, and how to integrate and conveniently share logging information across all project modules.

In this article, I will share my personal logging template that you can use in any project with multiple modules.

It is assumed that you already know the basics of logging. As I said, there are many good articles from which you can glean useful information.

Let’s get down to business!

Let’s create a simple Python project

A new concept is best explained in simple terms first, without getting distracted by background details. With that in mind, let's start with a simple project.

Create a folder called ‘MyAwesomeProject’. Inside it, create a new Python file named app.py. This file will be the starting point for the application. I’ll use this project to create a simple working example of the template I’m talking about.

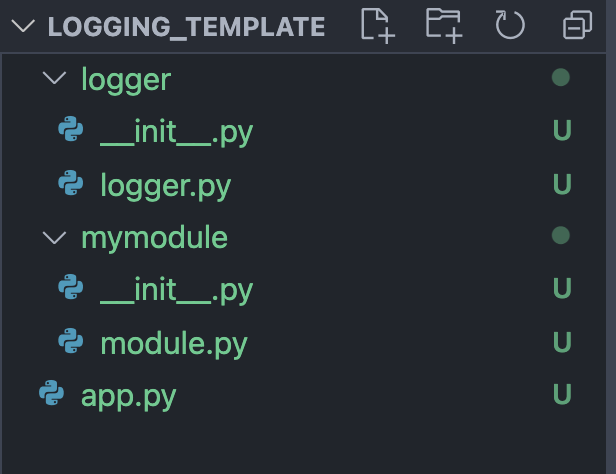

Open your project in VSCode (or your preferred editor). Now let’s create a new module to configure logging at the application level. Let’s call it logger. We are done with this part.

Create an application-level logger

This is the main part of our template. Create a new file, logger.py. In it, we define a top-level logger named after the application and use it as the parent of all module loggers (note that this is a named logger, not Python's root logger). Time to write some code. First, a couple of imports and the name of our application:

```python
import logging
import sys

APP_LOGGER_NAME = 'MyAwesomeApp'
```

The function that we will call in app.py:

```python
def setup_applevel_logger(logger_name=APP_LOGGER_NAME, file_name=None):
    # Get (or create) the application-level logger and let it handle DEBUG and above
    logger = logging.getLogger(logger_name)
    logger.setLevel(logging.DEBUG)
    formatter = logging.Formatter("%(asctime)s - %(name)s - %(levelname)s - %(message)s")

    # Console handler: write log messages to standard output
    sh = logging.StreamHandler(sys.stdout)
    sh.setFormatter(formatter)
    # Remove handlers left over from a previous call so messages are not duplicated
    logger.handlers.clear()
    logger.addHandler(sh)

    # Optional file handler: additionally store every message in file_name
    if file_name:
        fh = logging.FileHandler(file_name)
        fh.setFormatter(formatter)
        logger.addHandler(fh)
    return logger
```

We set the logger's level to DEBUG and use a Formatter to structure the log messages. The formatter is then attached to a StreamHandler, which writes messages to the console (standard output). If a file name is passed, we also add a FileHandler so that all log messages are additionally stored in that file. Finally, we return the logger.
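To see why the handlers.clear() call matters, consider a Jupyter notebook, where the setup cell is often re-executed: without it, every call would attach one more console handler and each message would be printed several times. The self-contained sketch below repeats the function from above so it runs on its own, calls it twice, and checks how many handlers the logger ends up with.

```python
import logging
import sys

APP_LOGGER_NAME = 'MyAwesomeApp'

def setup_applevel_logger(logger_name=APP_LOGGER_NAME, file_name=None):
    logger = logging.getLogger(logger_name)
    logger.setLevel(logging.DEBUG)
    formatter = logging.Formatter("%(asctime)s - %(name)s - %(levelname)s - %(message)s")
    sh = logging.StreamHandler(sys.stdout)
    sh.setFormatter(formatter)
    logger.handlers.clear()  # drop handlers attached by an earlier call
    logger.addHandler(sh)
    if file_name:
        fh = logging.FileHandler(file_name)
        fh.setFormatter(formatter)
        logger.addHandler(fh)
    return logger

# Simulate re-running the setup, e.g. re-executing a notebook cell
first = setup_applevel_logger()
second = setup_applevel_logger()

print(first is second)       # True: getLogger returns the same object for the same name
print(len(second.handlers))  # 1: the old console handler was removed, not duplicated
```

Note that clear() only detaches the old handlers; it does not close them. If an earlier call attached a FileHandler, calling close() on it before clearing releases the open file.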

We also need a function that lets our modules get hold of the logger whenever they need it. Let's define get_logger:

```python
def get_logger(module_name):
    # Return a child of the application logger, e.g. 'MyAwesomeApp.mymodule'
    return logging.getLogger(APP_LOGGER_NAME).getChild(module_name)
```

Optionally, to work with the module as a package, we can create a logger folder and place this file in it. In that case, we also need to add an `__init__.py` file to the folder containing the following line:

```python
from .logger import *
```

This ensures that we can import the module's functions from the package. Neat! The foundation is complete.
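Here is a small self-contained sketch of how get_logger ties a module to the application logger. getChild('mymodule') returns the logger named MyAwesomeApp.mymodule, whose parent is the application logger; since loggers propagate records to their ancestors' handlers by default, the child needs no handlers of its own. For the demo we assume an in-memory io.StringIO stream instead of stdout so the output can be inspected.

```python
import io
import logging

APP_LOGGER_NAME = 'MyAwesomeApp'

def get_logger(module_name):
    return logging.getLogger(APP_LOGGER_NAME).getChild(module_name)

# Configure only the application-level logger, writing into a buffer for the demo
buffer = io.StringIO()
app_logger = logging.getLogger(APP_LOGGER_NAME)
app_logger.setLevel(logging.DEBUG)
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter("%(name)s - %(levelname)s - %(message)s"))
app_logger.handlers.clear()
app_logger.addHandler(handler)

child = get_logger('mymodule')
print(child.name)                  # MyAwesomeApp.mymodule
print(child.parent is app_logger)  # True
print(child.handlers)              # []; the child has no handlers of its own

# The record propagates up to the application logger's handler
child.info("Hello from the module")
print(buffer.getvalue(), end="")   # MyAwesomeApp.mymodule - INFO - Hello from the module
```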

Setting up a module-level logger

For a better understanding of the pattern, let's write a simple module to test the logger. Create mymodule.py:

```python
import logger

# __name__ is 'mymodule' here, so this logger is named 'MyAwesomeApp.mymodule'
log = logger.get_logger(__name__)

def multiply(num1, num2):  # just multiply two numbers
    log.debug("Executing multiply function.")
    return num1 * num2
```

Now this module has access to the logger, and its messages should be tagged with the corresponding module name. Let's check it out.
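Before running the full app, it is worth seeing why log.debug() in the module produces any output, given that the module never calls setLevel. A logger left at NOTSET defers to its nearest ancestor that has a level set, here the application logger at DEBUG. The sketch below checks this directly; the name 'mymodule' is written out by hand, standing in for what __name__ evaluates to inside mymodule.py.

```python
import logging

APP_LOGGER_NAME = 'MyAwesomeApp'

app_logger = logging.getLogger(APP_LOGGER_NAME)
app_logger.setLevel(logging.DEBUG)

# Equivalent of get_logger(__name__) when called from mymodule.py
module_log = logging.getLogger(APP_LOGGER_NAME).getChild('mymodule')

print(module_log.level == logging.NOTSET)               # True: no level set on the module logger
print(module_log.getEffectiveLevel() == logging.DEBUG)  # True: inherited from MyAwesomeApp
print(module_log.isEnabledFor(logging.DEBUG))           # True: debug messages pass the level check

# Raising the application level silences the module's debug messages as well
app_logger.setLevel(logging.INFO)
print(module_log.isEnabledFor(logging.DEBUG))           # False
```

This is what makes the template convenient: the level is controlled in one place, in setup_applevel_logger, and every module logger follows it.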

Run the script and test the logger

Let’s build app.py.

import logger

log = logger.setup_applevel_logger(file_name="app_debug.log")

import mymodule

log.debug('Calling module function.')

mymodule.multiply(5, 2)

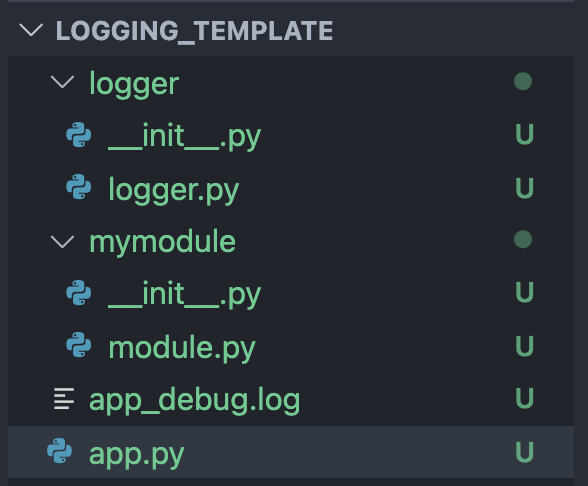

log.debug('Finished.')Notice how we are importing the module after logger initialization? Yes, it is mandatory. Now make sure your directory contains these files:
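Why does the import order matter? A logger returned by get_logger is resolved by name, so it is linked to the application logger no matter when it is created; what the order affects is any message logged before the handlers exist. The sketch below simulates a module imported too early. The module name early_module is hypothetical, an in-memory buffer stands in for stdout, and a fresh interpreter is assumed (root logger at its default WARNING level).

```python
import io
import logging

APP_LOGGER_NAME = 'MyAwesomeApp'

# 1. A module logger obtained before the application logger is configured
early_log = logging.getLogger(APP_LOGGER_NAME).getChild('early_module')
# Effective level is still the root's WARNING, so this DEBUG record is discarded
early_log.debug("Logged at import time")

# 2. Configure the application logger afterwards
buffer = io.StringIO()
app_logger = logging.getLogger(APP_LOGGER_NAME)
app_logger.setLevel(logging.DEBUG)
handler = logging.StreamHandler(buffer)
handler.setFormatter(logging.Formatter("%(name)s - %(message)s"))
app_logger.handlers.clear()
app_logger.addHandler(handler)

# 3. Later calls through the same logger object are handled normally
early_log.debug("Logged after setup")

print(buffer.getvalue(), end="")  # MyAwesomeApp.early_module - Logged after setup
```

So importing after setup is what protects import-time messages; messages logged later reach the handlers either way.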

Finally, run the script with this command:

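```shell
python3 app.py
```

The console will show three DEBUG messages, each prefixed with a timestamp and the name of the logger that produced it: MyAwesomeApp for the two messages from app.py and MyAwesomeApp.mymodule for the one from the module.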

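The directory should also change: a new log file, app_debug.log, appears in it. Let's check its contents: it holds the same messages that were printed to the console, because both handlers use the same formatter.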
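The following self-contained sketch reproduces the three messages from app.py in a single script and compares the console output with the log file. As assumptions for the demo, an io.StringIO buffer stands in for stdout, the file lives in a temporary directory, and the timestamp is left out of the format so the result is reproducible; in the template each line also starts with asctime.

```python
import io
import logging
import os
import tempfile

APP_LOGGER_NAME = 'MyAwesomeApp'
FMT = "%(name)s - %(levelname)s - %(message)s"  # asctime omitted for reproducibility

log_path = os.path.join(tempfile.mkdtemp(), "app_debug.log")
console = io.StringIO()  # stands in for sys.stdout

app_logger = logging.getLogger(APP_LOGGER_NAME)
app_logger.setLevel(logging.DEBUG)
app_logger.handlers.clear()
for handler in (logging.StreamHandler(console), logging.FileHandler(log_path)):
    handler.setFormatter(logging.Formatter(FMT))
    app_logger.addHandler(handler)

module_log = app_logger.getChild('mymodule')
app_logger.debug('Calling module function.')
module_log.debug('Executing multiply function.')
app_logger.debug('Finished.')

# Close and detach the handlers so the file is flushed and released before reading
for handler in list(app_logger.handlers):
    handler.close()
    app_logger.removeHandler(handler)

with open(log_path) as f:
    file_text = f.read()

print(file_text == console.getvalue())  # True: both handlers received the same records
print(file_text, end="")
```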

Conclusion

This is how you can easily integrate logging into your workflow. The approach is simple and easy to extend: you can build deeper hierarchies between modules, catch and format exceptions with the logger, use dictConfig for advanced configuration, and so on. The possibilities are endless! The repository with this code is located here…
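As a rough sketch of two of those extensions (an assumption, not code from the original repository), the same setup can be expressed declaratively with logging.config.dictConfig, and logger.exception() can be used inside an except block to record the traceback. Writing the log file to a temporary directory is a demo assumption; in the project it would simply be app_debug.log.

```python
import logging
import logging.config
import os
import tempfile

APP_LOGGER_NAME = 'MyAwesomeApp'
# Demo assumption: temporary directory instead of the project folder
LOG_FILE = os.path.join(tempfile.mkdtemp(), "app_debug.log")

LOGGING_CONFIG = {
    "version": 1,
    # Keep loggers that modules may already have created
    "disable_existing_loggers": False,
    "formatters": {
        "standard": {"format": "%(asctime)s - %(name)s - %(levelname)s - %(message)s"},
    },
    "handlers": {
        "console": {
            "class": "logging.StreamHandler",
            "formatter": "standard",
            "stream": "ext://sys.stdout",
        },
        "file": {
            "class": "logging.FileHandler",
            "formatter": "standard",
            "filename": LOG_FILE,
        },
    },
    "loggers": {
        APP_LOGGER_NAME: {"level": "DEBUG", "handlers": ["console", "file"]},
    },
}

logging.config.dictConfig(LOGGING_CONFIG)

log = logging.getLogger(APP_LOGGER_NAME).getChild('mymodule')
try:
    result = 1 / 0
except ZeroDivisionError:
    # exception() logs at ERROR level and appends the current traceback
    log.exception("Division failed")
```

Because the configuration is a plain dictionary, it could also be kept in a JSON file and loaded with json.load before being passed to dictConfig.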

