Recognizing a melody by studying the musician’s body language

An artificial intelligence-based musical gesture recognition tool developed at the MIT-IBM Watson AI Lab uses body movements to distinguish between the sounds of individual musical instruments.

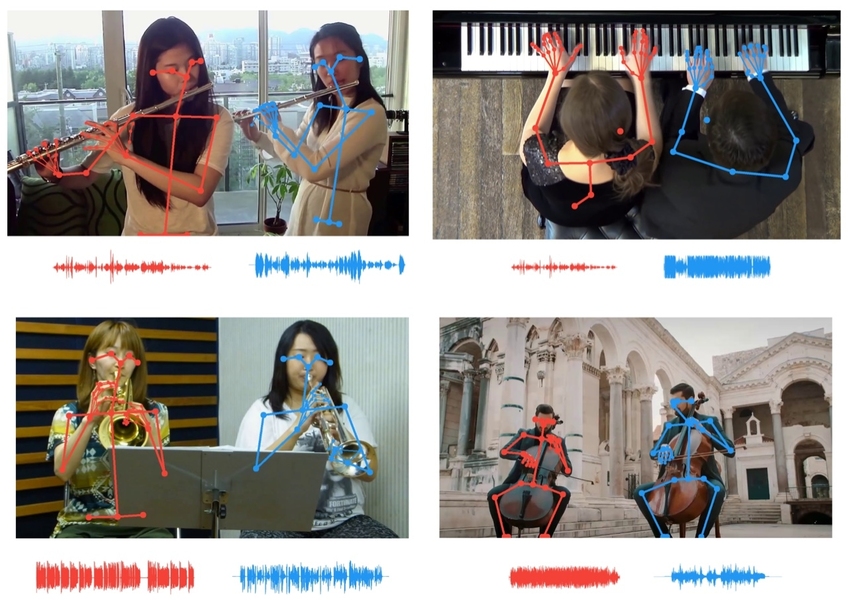

Image courtesy of the researchers.

Researchers use skeletal key point data to correlate musicians’ movements with the tempo of their parts, allowing listeners to isolate instruments that sound the same.

We enjoy music not only with our ears but also with our eyes, watching with appreciation as the pianist’s fingers fly over the keys and the violinist’s bow rocks across the strings. When the ear cannot separate two instruments, our eyes help by matching each musician’s movements with the rhythm of each part.

A new artificial intelligence tool developed by the MIT-IBM Watson AI Lab uses a computer’s virtual eyes and ears to separate sounds so similar that people find them hard to tell apart. The tool improves on earlier iterations by matching the movements of individual musicians, tracked through their skeletal key points, with the tempo of individual parts, allowing listeners to isolate a single flute or violin among several of the same instrument.

Possible applications of the work range from sound mixing and turning up the volume of one instrument in a recording to reducing the confusion that leads people to talk over one another on video conference calls. The work will be presented at the Computer Vision and Pattern Recognition (CVPR) conference this month.

“Key points in the body provide powerful structural information,” says lead study author Chuang Gan, a researcher in the IBM lab. “We’re using them here to improve the AI’s ability to listen and separate sound.”

In this and related projects, the researchers have used synchronized audio-video tracks to mimic the way people learn. An artificial intelligence system that learns through multiple sensory modalities may learn faster, with less data, and without humans having to add pesky labels by hand to every real-world representation. “We learn from all our senses,” says Antonio Torralba, an MIT professor and co-author of the study. “Multisensory processing is the forerunner of embodied intelligence and artificial intelligence systems that can perform more complex tasks.”

This tool, which uses body language to separate sounds, builds on earlier work that used motion cues in image sequences. Its earliest incarnation, PixelPlayer, let you click on an instrument in a concert video to make it louder or quieter. An update to PixelPlayer let you distinguish between two violins in a duet by matching each musician’s movements with the tempo of their part. This latest version adds key point data, which sports analysts use to track athletes’ performance, to extract finer-grained motion information and tell apart nearly identical sounds.
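The idea of matching a musician’s movement with the rhythm of a part can be illustrated with a toy sketch. The Python below is not the researchers’ model, which the article does not describe in detail; it is a simplified stand-in that correlates how fast each player’s key points move with the loudness envelope of each candidate audio track. It assumes the key points have already been extracted by some pose estimator and that each envelope is sampled at the video frame rate. The names `motion_energy`, `match_players_to_tracks`, and `synthetic_player` are hypothetical, and the data is synthetic.

```python
import numpy as np


def motion_energy(keypoints):
    """Mean joint speed per frame step.

    keypoints: array of shape (frames, joints, 2) holding x, y positions.
    Returns an array of shape (frames - 1,).
    """
    velocity = np.diff(keypoints, axis=0)            # frame-to-frame displacement
    speed = np.linalg.norm(velocity, axis=-1)        # per-joint speed
    return speed.mean(axis=1)                        # average over joints


def match_players_to_tracks(keypoints_per_player, envelopes):
    """For each player, pick the envelope most correlated with their motion."""
    assignments = []
    for keypoints in keypoints_per_player:
        energy = motion_energy(keypoints)
        scores = [
            np.corrcoef(energy, env[: len(energy)])[0, 1] for env in envelopes
        ]
        assignments.append(int(np.argmax(scores)))
    return assignments


def synthetic_player(period, frames=200, joints=17):
    """Fake a player whose joints speed up and slow down every `period` frames."""
    t = np.arange(frames)
    speed = 1.0 + np.cos(2 * np.pi * t / period)     # non-negative speed profile
    keypoints = np.zeros((frames, joints, 2))
    keypoints[:, :, 0] = np.cumsum(speed)[:, None]   # all joints move along x
    envelope = speed[1:]                             # audio envelope with the same rhythm
    return keypoints, envelope


# Two players with different tempos; the audio tracks are given in swapped order.
player_a, envelope_a = synthetic_player(period=10)
player_b, envelope_b = synthetic_player(period=16)
assignments = match_players_to_tracks(
    [player_a, player_b], [envelope_b, envelope_a]
)
print(assignments)
```

Here each player is assigned to the track whose rhythm follows their movement, even though both tracks could come from the same kind of instrument. A real system would have to cope with noise, timing offsets, and overlapping sound, which this sketch ignores.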

The work highlights the importance of visual cues in training computers to hear better, and of audio cues in giving them sharper vision. Just as the current study uses visual information about a musician’s movements to separate the parts of similar-sounding instruments, previous work used sounds to separate similar-looking objects and animals of the same species.

Torralba and colleagues have shown that deep learning models trained on paired audio-video data can learn to recognize natural sounds such as birdsong or waves hitting the shore. Such models can also determine the geographic coordinates of a moving car from the sound of its engine and wheels as it moves toward or away from the microphone.

The latter research suggests that sound-tracking tools could be a useful addition to self-driving cars, complementing their cameras in poor visibility. “Sound trackers can be especially useful at night or in bad weather, helping to mark vehicles that might otherwise have been missed,” says Hang Zhao, Ph.D. ’19, who contributed to the research on motion and sound tracking.

Other authors of the CVPR music gesture study are Deng Huang and Joshua Tenenbaum of MIT.
