An open corpus of Go code that supports queries

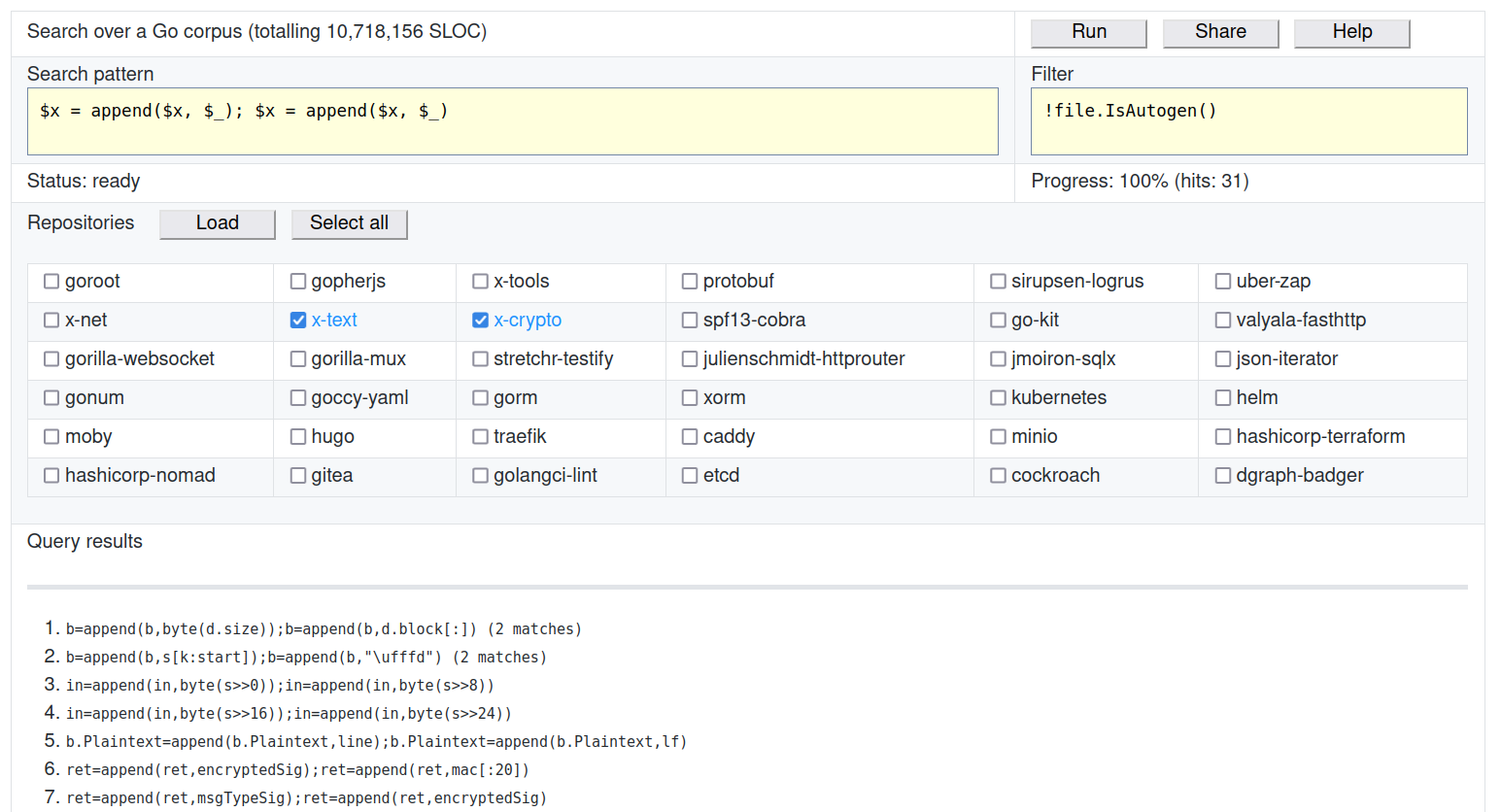

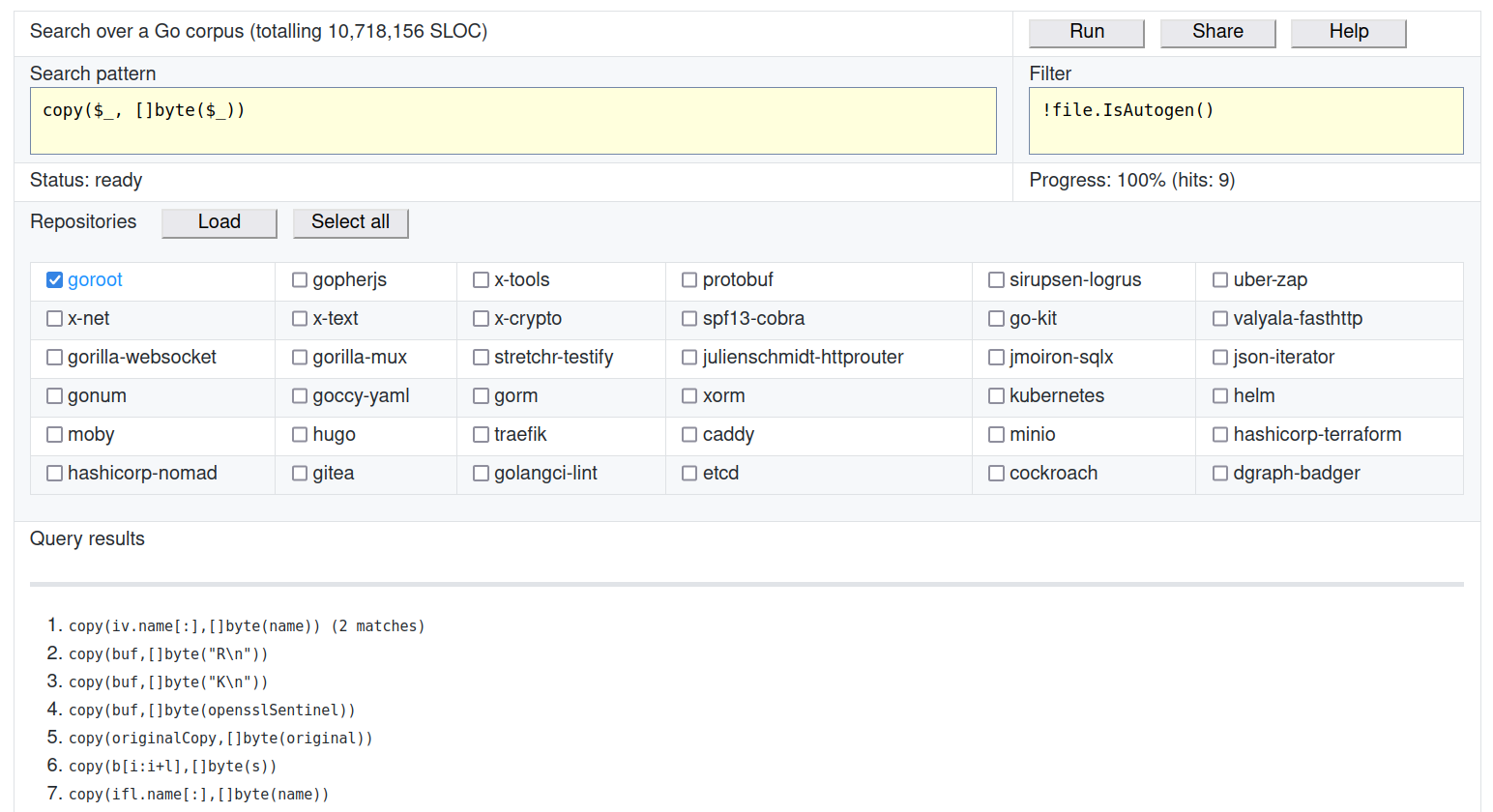

The other day I launched a wasm application which allows you to run gogrep patterns on a relatively large corpus of Go code (~11 million lines of code).

In this post I will describe how to use it and why you might need it at all.

Stars are welcome. The source code can be found here: github.com/quasilyte/gocorpus…

What for?

Let’s say you want to test the claim that no one writes a certain construct in average Go code (or, vice versa, that everyone writes it). To do this, you will have to take the following steps:

- Build a collection of Go code. Several repositories, preferably varied.

- Figure out how to perform the search. Regular expressions may not be the most suitable tool.

- Make the results reproducible for other people so they don’t have to take your word for it.

The gocorpus project handles all these steps for you.

The exception is that, at the time of this writing, step (3) is not fully solved. Another person can visit the page and rerun the query, but the “share” feature is not implemented yet. As planned, share will provide a URL that encodes the launch options: search pattern, filters, selected repositories.

Since I personally needed gocorpus to gather statistics rather than to search through projects, the results are currently displayed without source code locations. This is not a fundamental limitation: I plan to make the results format customizable in the future.

About existing solutions

I have heard several times about the BigQuery corpus, but as far as I know, it is not exactly free. Besides, I do not find that option very convenient to use.

codesearch works on regular expressions. This is not always enough, as I mentioned above.

There was also an old corpus from Russ Cox, but it is already archived and its contents were never updated. Moreover, it is just a collection of code, not a ready-made all-in-one solution.

Daniel Martí (the original author of gogrep) assembled something like an index of popular code: github.com/mvdan/corpus… In theory, this index can be used to form the set of repositories available in my application.

And some others:

My solution compares favorably at least in that it is tailored to Go: autogenerated files and test files are recognized correctly, and filters can be applied to expressions. For example, you can require them to have no side effects (`$x.IsPure`).

Code corpus

To select repositories, I did a GitHub search with filters and sorting by the number of stars. Not the most scientific approach, but good enough for the first iteration.

After that, the selected repositories are cloned, analyzed, minified and put in compressed tar archives.

As we analyze the files, we record some metrics and facts about each file in the metadata. For example, whether the file imports “unsafe” or “C” (cgo). This information can then be used in filters.
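As an illustration of this kind of fact collection, here is a minimal sketch that checks a file's imports with the standard `go/parser` package. The `fileFacts` type and `collectFacts` function are hypothetical names made up for this example; the actual metadata format used by makecorpus may look quite different.

```go
package main

import (
	"fmt"
	"go/parser"
	"go/token"
	"strconv"
)

// fileFacts is a hypothetical metadata record, not the real makecorpus format.
type fileFacts struct {
	ImportsUnsafe bool
	ImportsC      bool
}

// collectFacts parses only the import section of src and records
// whether the file imports "unsafe" or "C".
func collectFacts(src string) (fileFacts, error) {
	var facts fileFacts
	fset := token.NewFileSet()
	f, err := parser.ParseFile(fset, "example.go", src, parser.ImportsOnly)
	if err != nil {
		return facts, err
	}
	for _, imp := range f.Imports {
		// Import paths are stored as quoted string literals.
		path, err := strconv.Unquote(imp.Path.Value)
		if err != nil {
			return facts, err
		}
		switch path {
		case "unsafe":
			facts.ImportsUnsafe = true
		case "C":
			facts.ImportsC = true
		}
	}
	return facts, nil
}

func main() {
	src := `package demo

import "C"
import "unsafe"

var _ = unsafe.Sizeof(0)
`
	facts, err := collectFacts(src)
	if err != nil {
		panic(err)
	}
	fmt.Printf("%+v\n", facts)
}
```

Parsing with `parser.ImportsOnly` stops after the import declarations, so a check like this stays cheap even on large files.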

On the client, we download tar.gz files and uncompress them in place.

More details on how the corpus is assembled can be found in makecorpus…

Filters

Currently, the following filters have been implemented:

| Filter | Description |
| --- | --- |
| `$var.IsConst()` | the expression captured by `$var` is constant |
| `$var.IsPure()` | the expression captured by `$var` has no side effects |
| `$var.Is<Kind>Lit()` | the expression captured by `$var` is a literal of the given kind (*) |
| `file.IsTest()` | true for files with the `_test.go` suffix in the name or `_test` in the package name |
| `file.IsMain()` | true for files with the package name `main` |
| `file.IsAutogen()` | true for files that are marked as auto-generated |
| `file.MaxDepth()` | int value that can be compared to a constant (**) |

(*) Kind may be `String`, `Int`, `Float`, `Rune` (`token.CHAR`), `Complex`…

(**) Some files have an abnormal maximum AST depth, which can cause a stack overflow inside the wasm code in some browsers. By choosing the right `MaxFileDepth` you can work around this problem by ignoring these dreaded files.

Filters are regular Go expressions. They can be combined with `&&` and `||`. You can also use `(` and `)` for grouping, and `!` to invert the effect.

`file` is a predefined variable that is bound to the file currently being processed. `$<var>` is a variable from the search pattern.

- `$x.IsConst() && !file.IsTest()`: do not search in tests; `$x` must be constant.

- `$x.IsPure() && !$y.IsPure()`: `$x` must be a pure expression and `$y` must not be.

- `!file.IsAutogen() && !file.IsTest()`: do not search in tests or auto-generated files.

- `file.MaxDepth() <= 100`: skip files whose maximum AST depth is greater than 100.

If you have used ruleguard, this may remind you of the filters in `Where()`…

Query examples

Here are some examples of search patterns.

Identical variable names require identical matches. That is, `$x = $x` finds only self-assignments, while `$x = $y` can find any assignment (including a self-assignment). The exception to this rule is `$_`: the empty variable does not need to match the same thing even if it is used multiple times.
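To make the meaning of a repeated variable concrete, here is a hand-written approximation of what `$x = $x` looks for, using only the standard library. This is not how gogrep is implemented; `findSelfAssignments` is a hypothetical helper that compares both sides of a single-value assignment by their printed source text.

```go
package main

import (
	"fmt"
	"go/ast"
	"go/parser"
	"go/printer"
	"go/token"
	"strings"
)

// exprString renders an expression back to source text.
func exprString(fset *token.FileSet, e ast.Expr) string {
	var sb strings.Builder
	printer.Fprint(&sb, fset, e)
	return sb.String()
}

// findSelfAssignments reports assignments of the form `x = x`,
// where both sides print to the same source text.
func findSelfAssignments(src string) ([]string, error) {
	fset := token.NewFileSet()
	f, err := parser.ParseFile(fset, "example.go", src, 0)
	if err != nil {
		return nil, err
	}
	var found []string
	ast.Inspect(f, func(n ast.Node) bool {
		assign, ok := n.(*ast.AssignStmt)
		if !ok || assign.Tok != token.ASSIGN {
			return true
		}
		if len(assign.Lhs) != 1 || len(assign.Rhs) != 1 {
			return true
		}
		lhs := exprString(fset, assign.Lhs[0])
		if lhs == exprString(fset, assign.Rhs[0]) {
			found = append(found, lhs+" = "+lhs)
		}
		return true
	})
	return found, nil
}

func main() {
	src := `package demo

func f(a, b int, p *struct{ x int }) {
	a = a     // same expression on both sides
	a = b     // different expressions
	p.x = p.x // same expression on both sides
}
`
	found, err := findSelfAssignments(src)
	if err != nil {
		panic(err)
	}
	for _, s := range found {
		fmt.Println(s)
	}
}
```

A pattern with two different variables, such as `$x = $y`, would correspond to dropping the equality check entirely.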

Here’s a more complex pattern: `map[$_]$_{$*_, $k: $_, $*_, $k: $_, $*_}`. It finds map literals that contain duplicate keys. The `*` modifier works just like in regular expressions: there will be 0 or more matches. To find all calls to `fmt.Printf`, we can write a pattern like this: `fmt.Printf($*_)`.

A variable with a modifier does not have to be empty. For example, this is also a valid pattern: `fmt.Printf($format, $*args)`.

There is no `+` modifier, but it can often be emulated like this: `f($_, $*_)` matches calls to `f` with one or more arguments.

More pattern examples can be found in the go-critic rules…

Frequency score

If you run several queries, you can compare the number of matches between them. If pattern X gives twice as many matches, we assume that it occurs in code twice as often.

But how do we assess the frequency of a pattern relative to average code? A hypothetical 100 matches can mean different things depending on the size of the data set the query ran on.

To compute such a score, I took the frequency of `err != nil` as the baseline. Across 11 million lines of code there were ~150 thousand of these error checks. We will assume that a coefficient of 1.0 means 1 match per 70 lines of code. Therefore, if the tested pattern occurs once every 140 lines, its frequency score will be 0.5. To make the results easier to interpret, I multiply the value by 100 to get nicer numbers.
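The arithmetic can be sketched as follows, assuming the score scales linearly with match density. The `frequencyScore` function and the `baselineLinesPerMatch` constant are hypothetical names for this example; the exact formula used by gocorpus may differ in details such as rounding.

```go
package main

import "fmt"

// baselineLinesPerMatch is the assumed density of `err != nil`:
// roughly one match per 70 lines of code.
const baselineLinesPerMatch = 70.0

// frequencyScore returns the density of a pattern relative to the baseline,
// multiplied by 100. A pattern as common as the baseline scores 100.
func frequencyScore(matches, totalLines int) float64 {
	if matches <= 0 || totalLines <= 0 {
		return 0
	}
	linesPerMatch := float64(totalLines) / float64(matches)
	return 100 * baselineLinesPerMatch / linesPerMatch
}

func main() {
	// One match per 70 lines: same density as the baseline.
	fmt.Printf("%.1f\n", frequencyScore(1000, 70000))
	// One match per 140 lines: half the baseline density.
	fmt.Printf("%.1f\n", frequencyScore(1000, 140000))
}
```

Under this linear assumption, a pattern found once every 140 lines gets half the baseline value, which is 50 after scaling.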

The frequency score is reported alongside the other results after the query has finished executing.

Conclusion

If you think a repository should be added to the corpus, let me know… Similarly, if a feature is missing or you find a bug, open an issue and I will try to fix it.

I would be glad to receive feedback.

PS: I have little experience with frontend development, so the TypeScript and markup code leaves much to be desired. If anyone can help with this part, I would greatly appreciate it.