On the growing popularity of Kubernetes

As the summer draws to a close, we want to remind you that we are continuing to work on the topic of Kubernetes, and we have decided to publish a Stack Overflow article showing the state of the project as of early June.

At the time of this writing, Kubernetes is about six years old, and over the past two years its popularity has grown so much that it consistently ranks among the most loved platforms. This year Kubernetes is in third place. As a reminder, Kubernetes is a platform for running and orchestrating containerized workloads.

Containers originated as a set of Linux constructs for process isolation: namespaces have been available since 2002 and cgroups since 2007. Containers took firmer shape in 2008, when LXC became available, and Google developed its own internal mechanism called Borg, where “all work is done in containers”. Fast forward to 2013, when Docker was first released and containers finally became a popular mainstream solution. At that time, the main tool for container orchestration was Mesos, although it was not very popular. The first release of Kubernetes took place in 2015, after which it became the de facto standard for container orchestration.

To understand why Kubernetes is so popular, let’s try to answer a few questions. When was the last time developers were able to agree on how to deploy applications to production? How many developers do you know who use tools exactly as they come out of the box? How many cloud administrators are there today who don’t understand how applications work? We will consider the answers to these questions in this article.

Infrastructure as YAML

In a world that went from Puppet and Chef to Kubernetes, one of the biggest changes has been the move from infrastructure as code to infrastructure as data, specifically YAML. All resources in Kubernetes, including pods, configurations, deployments, volumes, and so on, can easily be described in a YAML file. For example:

    apiVersion: v1
    kind: Pod
    metadata:
      name: site
      labels:
        app: web
    spec:
      containers:
        - name: front-end
          image: nginx
          ports:
            - containerPort: 80

This format makes it easier for DevOps engineers or SREs to fully express their workloads without having to write code in languages such as Python or JavaScript.
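
Because a manifest is plain data, ordinary tools can inspect or transform it without any Kubernetes-specific code. Here is a minimal sketch in Python, assuming the Pod above has already been loaded into a dictionary. The helper names `container_ports` and `with_label` are made up for illustration and are not part of any Kubernetes library.

```python
import json

# The Pod from the example above, expressed as plain data.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "site", "labels": {"app": "web"}},
    "spec": {
        "containers": [
            {
                "name": "front-end",
                "image": "nginx",
                "ports": [{"containerPort": 80}],
            }
        ]
    },
}


def container_ports(manifest):
    """Return every containerPort declared in a Pod manifest (hypothetical helper)."""
    return [
        port["containerPort"]
        for container in manifest["spec"]["containers"]
        for port in container.get("ports", [])
    ]


def with_label(manifest, key, value):
    """Return a copy of the manifest with one extra label (hypothetical helper).

    The input manifest is left untouched.
    """
    updated = json.loads(json.dumps(manifest))  # simple deep copy for JSON-like data
    updated["metadata"].setdefault("labels", {})[key] = value
    return updated


if __name__ == "__main__":
    print(container_ports(pod))
    print(json.dumps(with_label(pod, "tier", "frontend"), indent=2))
```

The JSON printed at the end describes the same kind of resource as the YAML, since Kubernetes tooling accepts manifests in either format.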

Other advantages of treating infrastructure as data include the following:

- GitOps, or Git Operations Version Control. With this approach, all Kubernetes YAML files are kept in git repositories, so you can track exactly when a change was made, who made it, and what changed. This makes operations more transparent across the organization and improves efficiency by removing ambiguity about where employees should look for the resources they need. It also becomes easier to change Kubernetes resources automatically, simply by merging a pull request.

- Scalability. When resources are defined in YAML, it is extremely easy for cluster operators to change one or two numbers in a Kubernetes resource and thereby change how it scales. Kubernetes provides a horizontal pod autoscaler, which makes it convenient to set the minimum and maximum number of pods that a particular deployment needs to cope with low and high traffic. For example, if a deployment needs additional capacity because of a traffic spike, maxReplicas can be changed from 10 to 20:

    # autoscaling/v2 on Kubernetes 1.23 and later
    apiVersion: autoscaling/v2beta2
    kind: HorizontalPodAutoscaler
    metadata:
      name: myapp
      namespace: default
    spec:
      scaleTargetRef:
        apiVersion: apps/v1
        kind: Deployment
        name: myapp-deployment
      minReplicas: 1
      maxReplicas: 20
      metrics:
        - type: Resource
          resource:
            name: cpu
            target:
              type: Utilization
              averageUtilization: 50

- Security and management. YAML is well suited to evaluating how things are deployed to Kubernetes. For example, a major security concern is whether your workloads run as a non-administrator user. Here, tools such as conftest, a YAML/JSON validator, together with Open Policy Agent, a policy validator, can check that the SecurityContext of your workloads does not allow a container to run with administrator privileges. If required, users can apply a simple Rego policy like this:

    package main

    deny[msg] {
      input.kind = "Deployment"
      not input.spec.template.spec.securityContext.runAsNonRoot = true
      msg = "Containers must not run as root"
    }

- Cloud provider integration. One of the most visible trends in tech today is running workloads on public cloud providers. Through its cloud-provider component, Kubernetes allows any cluster to integrate with the cloud provider it runs on. For example, if a user runs an application in Kubernetes on AWS and wants to expose it through a service, the cloud provider component helps to automatically create a LoadBalancer service, which in turn automatically provisions an Amazon Elastic Load Balancer to forward traffic to the application pods.
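
Returning to the scalability point in the list above: to see why raising maxReplicas matters, it helps to look at how the autoscaler picks a replica count. The Kubernetes documentation describes the basic calculation as the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up and then kept within the configured bounds. The Python sketch below is a simplified illustration of that formula only. It ignores the tolerance band, pod readiness, and stabilization windows that the real controller applies, and the function name is our own.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas, max_replicas):
    """Approximate the replica count the horizontal autoscaler would aim for.

    Simplified: no tolerance band, no readiness handling, no stabilization window.
    """
    # Multiply before dividing to keep the arithmetic exact for typical integer inputs.
    wanted = math.ceil(current_replicas * current_utilization / target_utilization)
    # Clamp the result to the bounds declared in the autoscaler resource.
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # 10 pods at 90% CPU with a 50% target: capped when maxReplicas is 10,
    # free to grow further when maxReplicas is raised to 20.
    print(desired_replicas(10, 90, 50, min_replicas=1, max_replicas=10))
    print(desired_replicas(10, 90, 50, min_replicas=1, max_replicas=20))
```

With the lower cap the deployment cannot grow past 10 pods no matter how high utilization climbs, which is exactly the situation the one-line YAML change resolves.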

Extensibility

Kubernetes is highly extensible, and developers love that. It ships with a set of built-in resources such as pods, deployments, StatefulSets, secrets, and ConfigMaps. In addition, users and developers can add their own resources in the form of custom resource definitions.

For example, if we want to define a CronTab resource, we could do something like this:

    apiVersion: apiextensions.k8s.io/v1
    kind: CustomResourceDefinition
    metadata:
      name: crontabs.my.org
    spec:
      group: my.org
      versions:
        - name: v1
          served: true
          storage: true
          schema:
            openAPIV3Schema:
              type: object
              properties:
                spec:
                  type: object
                  properties:
                    cronSpec:
                      type: string
                      pattern: '^(\d+|\*)(/\d+)?(\s+(\d+|\*)(/\d+)?){4}$'
                    replicas:
                      type: integer
                      minimum: 1
                      maximum: 10
      scope: Namespaced
      names:
        plural: crontabs
        singular: crontab
        kind: CronTab
        shortNames:
          - ct

Later, we can create a CronTab resource like this:

    apiVersion: "my.org/v1"
    kind: CronTab
    metadata:
      name: my-cron-object
    spec:
      cronSpec: "* * * * */5"
      image: my-cron-image
      replicas: 5
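
The API server validates such objects against the schema in the definition. To get a feel for what those constraints accept, here is a small Python sketch that mirrors them. It assumes the pattern is the five-field cron regex written with its `\d`, `\s` and `\*` escapes and that replicas must lie between 1 and 10. The function `validate_crontab_spec` is a hypothetical helper of our own, not a Kubernetes API.

```python
import re

# Constraints mirrored from the CronTab schema (assumed regex escapes restored).
CRON_PATTERN = re.compile(r"^(\d+|\*)(/\d+)?(\s+(\d+|\*)(/\d+)?){4}$")
MIN_REPLICAS, MAX_REPLICAS = 1, 10


def validate_crontab_spec(spec):
    """Return a list of violations; an empty list means the spec passes."""
    errors = []

    cron_spec = spec.get("cronSpec")
    if cron_spec is not None:
        if not isinstance(cron_spec, str):
            errors.append("cronSpec must be a string")
        elif not CRON_PATTERN.search(cron_spec):
            errors.append("cronSpec does not match the required pattern")

    replicas = spec.get("replicas")
    if replicas is not None:
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(replicas, bool) or not isinstance(replicas, int):
            errors.append("replicas must be an integer")
        elif not MIN_REPLICAS <= replicas <= MAX_REPLICAS:
            errors.append("replicas must be between 1 and 10")

    return errors


if __name__ == "__main__":
    good = {"cronSpec": "* * * * */5", "image": "my-cron-image", "replicas": 5}
    bad = {"cronSpec": "every minute", "replicas": 50}
    print(validate_crontab_spec(good))
    print(validate_crontab_spec(bad))
```

Running a check like this locally is only a convenience. The cluster remains the source of truth for whether an object is accepted.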

Another form of extensibility in Kubernetes is that developers can write their own operators. An operator is a special process in a Kubernetes cluster that follows the “control loop” pattern. With an operator, users can automate the management of CRDs (custom resource definitions) by talking to the Kubernetes API.
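
The control loop idea can be illustrated without a cluster. The Python sketch below is a toy model under our own assumptions: state is a dictionary mapping a resource name to a replica count, and the functions `reconcile`, `apply_actions` and `control_loop` are invented for this example. A real operator would observe and change state through the Kubernetes API instead.

```python
def reconcile(desired, observed):
    """Compute the actions that move the observed state toward the desired state."""
    actions = []
    for name, want in sorted(desired.items()):
        have = observed.get(name)
        if have is None:
            actions.append(("create", name, want))
        elif have != want:
            actions.append(("scale", name, want))
    # Anything that exists but is no longer wanted gets removed.
    for name in sorted(observed.keys() - desired.keys()):
        actions.append(("delete", name, None))
    return actions


def apply_actions(observed, actions):
    """Apply actions to an in-memory state and return the new state."""
    state = dict(observed)
    for verb, name, replicas in actions:
        if verb == "delete":
            state.pop(name)
        else:
            state[name] = replicas
    return state


def control_loop(desired, observed, max_iterations=10):
    """Repeat observe, diff, act until there is nothing left to do."""
    for _ in range(max_iterations):
        actions = reconcile(desired, observed)
        if not actions:
            break
        observed = apply_actions(observed, actions)
    return observed


if __name__ == "__main__":
    desired = {"web": 3, "worker": 1}
    observed = {"worker": 2, "legacy": 1}
    print(reconcile(desired, observed))
    print(control_loop(desired, observed))
```

The key property is that the loop is driven by the difference between desired and observed state, so running it again after it has converged does nothing.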

There are several tools in the community that make it easy for developers to create their own operators. Among them are the Operator Framework and its Operator SDK. This SDK provides a foundation from which a developer can very quickly start creating an operator. For example, you can start from the command line like this:

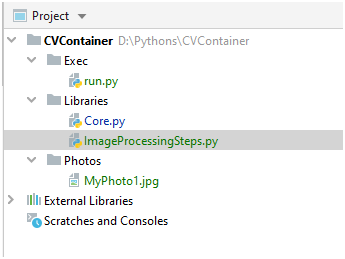

    $ operator-sdk new my-operator --repo github.com/myuser/my-operator

This creates all the boilerplate code for your operator, including the YAML files and Go code:

    .
    |____cmd
    | |____manager
    | | |____main.go
    |____go.mod
    |____deploy
    | |____role.yaml
    | |____role_binding.yaml
    | |____service_account.yaml
    | |____operator.yaml
    |____tools.go
    |____go.sum
    |____.gitignore
    |____version
    | |____version.go
    |____build
    | |____bin
    | | |____user_setup
    | | |____entrypoint
    | |____Dockerfile
    |____pkg
    | |____apis
    | | |____apis.go
    | |____controller
    | | |____controller.go

Then you can add the API and controller you want, like this:

    $ operator-sdk add api --api-version=myapp.com/v1alpha1 --kind=MyAppService
    $ operator-sdk add controller --api-version=myapp.com/v1alpha1 --kind=MyAppService

Then, finally, build the operator and push it to your container registry:

    $ operator-sdk build your.container.registry/youruser/myapp-operator

If the developer needs even more control, they can modify the boilerplate code in the Go files. For example, to change how the controller behaves, edit the controller.go file.

Another project, KUDO, allows you to create operators using only declarative YAML files. For example, there is an operator for Apache Kafka defined in this way. With it, you can install a Kafka cluster on top of Kubernetes with just a couple of commands:

    $ kubectl kudo install zookeeper
    $ kubectl kudo install kafka

And then configure it with another command:

    $ kubectl kudo install kafka --instance=my-kafka-name \
        -p ZOOKEEPER_URI=zk-zookeeper-0.zk-hs:2181 \
        -p ZOOKEEPER_PATH=/my-path -p BROKER_CPUS=3000m \
        -p BROKER_COUNT=5 -p BROKER_MEM=4096m \
        -p DISK_SIZE=40Gi -p MIN_INSYNC_REPLICAS=3 \
        -p NUM_NETWORK_THREADS=10 -p NUM_IO_THREADS=20

Innovation

Over the past few years, Kubernetes has shipped a major release every few months, that is, three to four major releases per year. The number of new features in each release is not decreasing. Moreover, there are no signs of a slowdown even in these difficult times: just look at the current activity of the Kubernetes project on GitHub.

New capabilities give you more flexibility in operating clusters across a variety of workloads. In addition, programmers enjoy having more control when deploying applications directly to production.

Community

Another major factor in the popularity of Kubernetes is the strength of its community. In 2015, upon reaching version 1.0, Kubernetes was sponsored by the Cloud Native Computing Foundation.

There are also various SIGs (special interest groups) that focus on different areas of Kubernetes as the project evolves. These groups are constantly adding new capabilities to make working with Kubernetes more convenient.

The Cloud Native Computing Foundation also hosts CloudNativeCon / KubeCon, which is the largest open source conference in the world at the time of this writing. It is typically held three times a year and brings together thousands of professionals who want to improve Kubernetes and its ecosystem and to learn about the new features that appear every three months.

Moreover, the Cloud Native Computing Foundation has a Technical Oversight Committee, which, together with the SIGs, reviews the foundation’s new and existing projects focused on the cloud native ecosystem. Most of these projects help build on the strengths of Kubernetes.

Finally, I believe that Kubernetes would not have been so successful without the deliberate efforts of the entire community, where people support each other and at the same time gladly welcome newcomers into their ranks.

Future

One of the main challenges that developers will face in the future is being able to focus on the details of the code itself rather than on the infrastructure it runs on. The serverless architecture paradigm, one of the leading paradigms today, responds to exactly this trend. There are already advanced frameworks, such as Knative and OpenFaaS, that use Kubernetes to abstract the infrastructure away from the developer.

In this article, we’ve only taken a rough look at the current state of Kubernetes – in fact, this is just the tip of the iceberg. Kubernetes users have many other resources, capabilities, and configurations at their disposal.