Nvidia and Moore’s law. Moore is dead, long live Huang

What is Moore’s Law

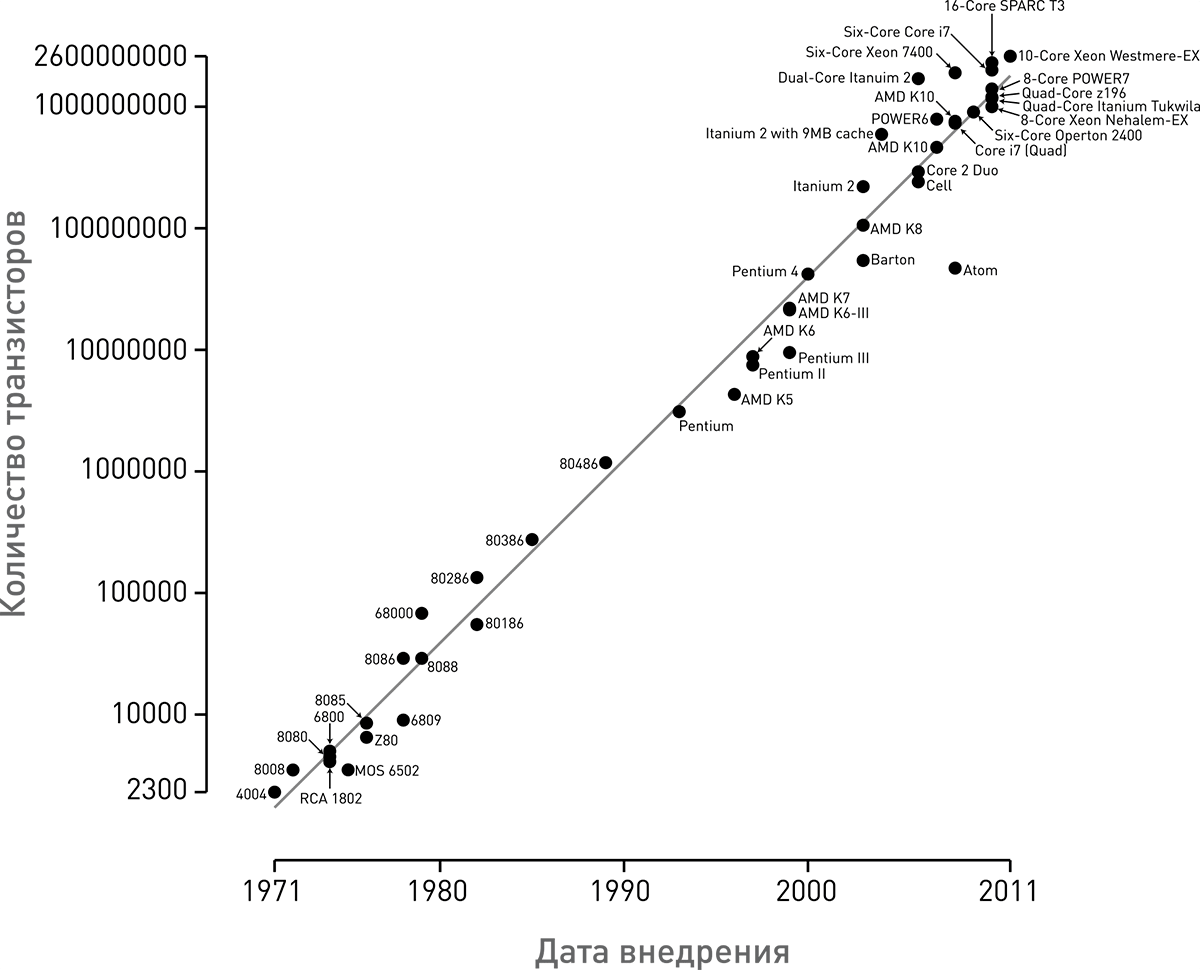

In 1965, Gordon Moore, who later co-founded Intel, observed an empirical pattern: the number of transistors on a chip doubled roughly every 12 months. In 1975, he revised the rule to a doubling every 24 months. This pattern has been known as Moore’s law ever since.

It is important to understand that a fixed doubling period means the number of transistors on a chip grows exponentially.
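To make the exponential concrete, here is a minimal sketch of the doubling rule. The function name `projected_transistors` and the starting count are illustrative assumptions, not figures from the dataset.

```python
def projected_transistors(initial_count, years_elapsed, doubling_period_years=2.0):
    """Transistor count implied by a fixed doubling period (24 months by default)."""
    return initial_count * 2 ** (years_elapsed / doubling_period_years)


# Illustrative starting point only: 1 million transistors, not a real chip.
for years in (0, 2, 10, 20):
    print(years, round(projected_transistors(1_000_000, years)))
```

Twenty years at this rate is ten doublings, which is a factor of 1024.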

Moore’s law held for about 50 years, but in recent years many have come to believe that it no longer holds: the growth in the number of transistors on chips, and with it the growth in performance, is noticeably slowing.

Why NVIDIA?

To confirm or refute this observation, I studied all flagship Nvidia graphics chips produced from 1998 to 2022. Nvidia graphics cards were chosen for several reasons: 1) the great importance of GPUs for the AI and machine learning industry; 2) greater availability of data about them compared to central processing units; 3) personal interest in this class of devices.

We study video cards

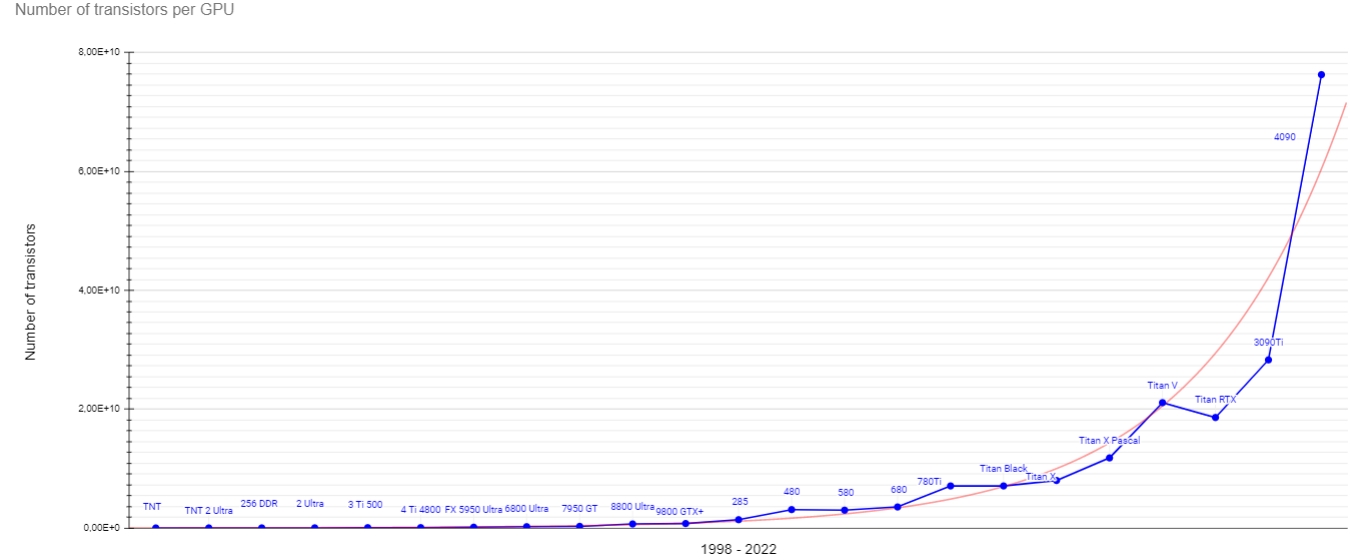

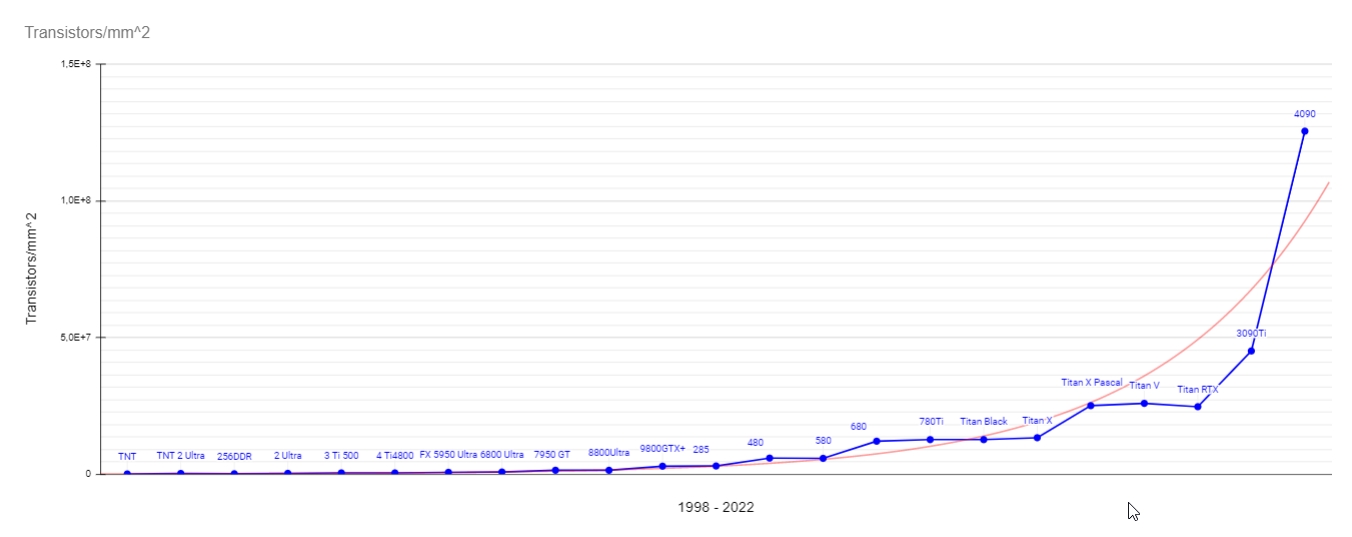

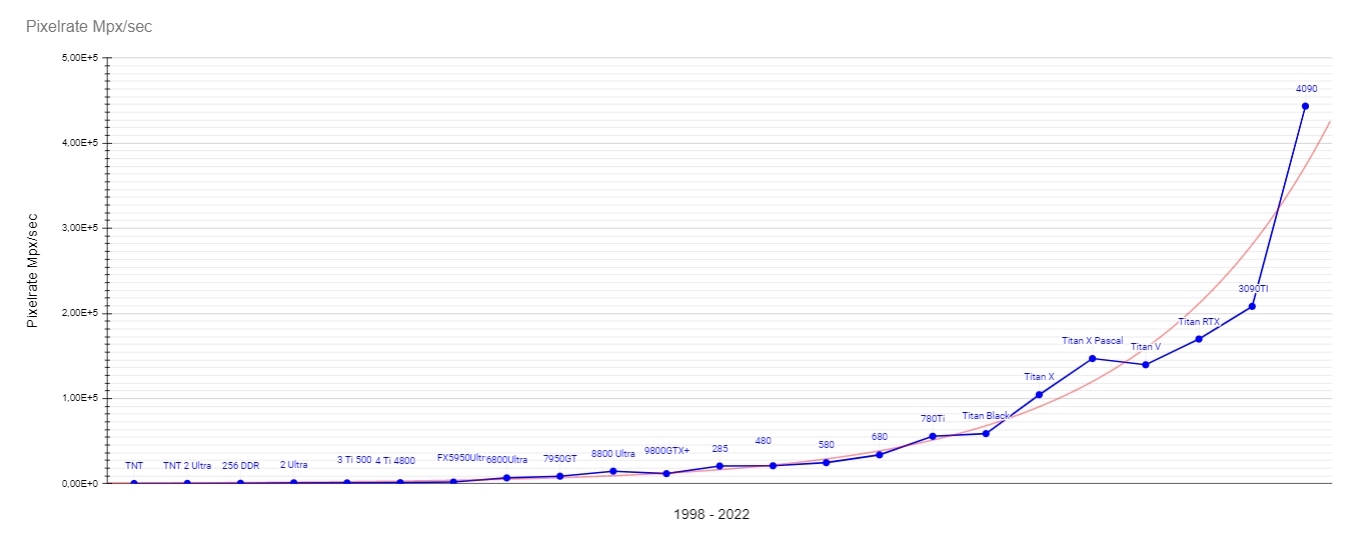

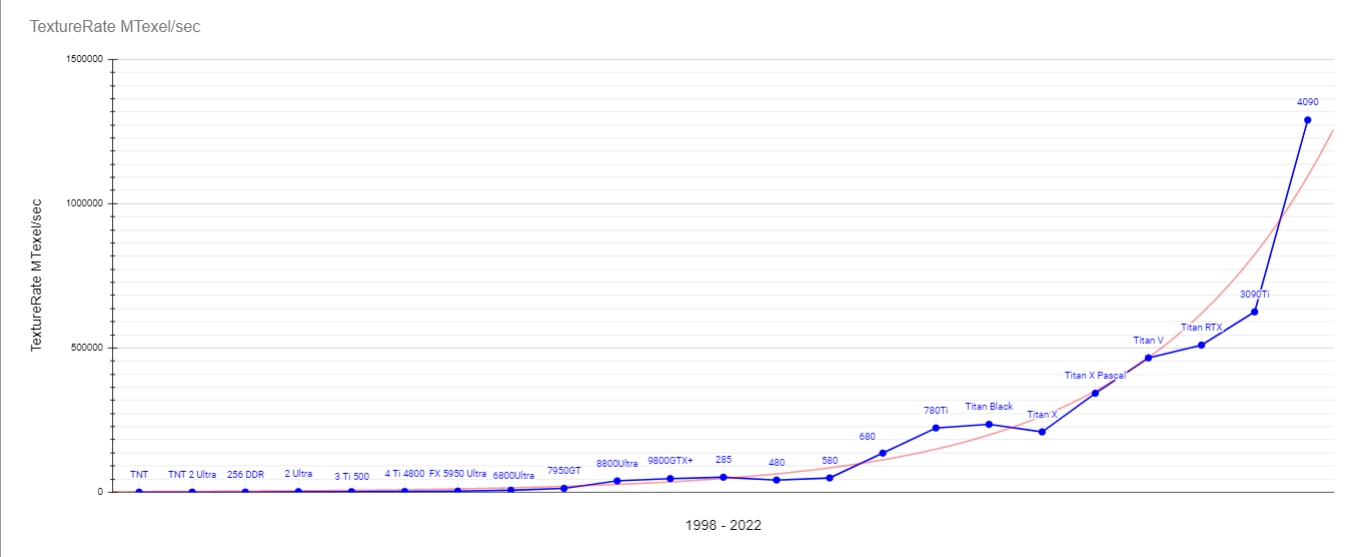

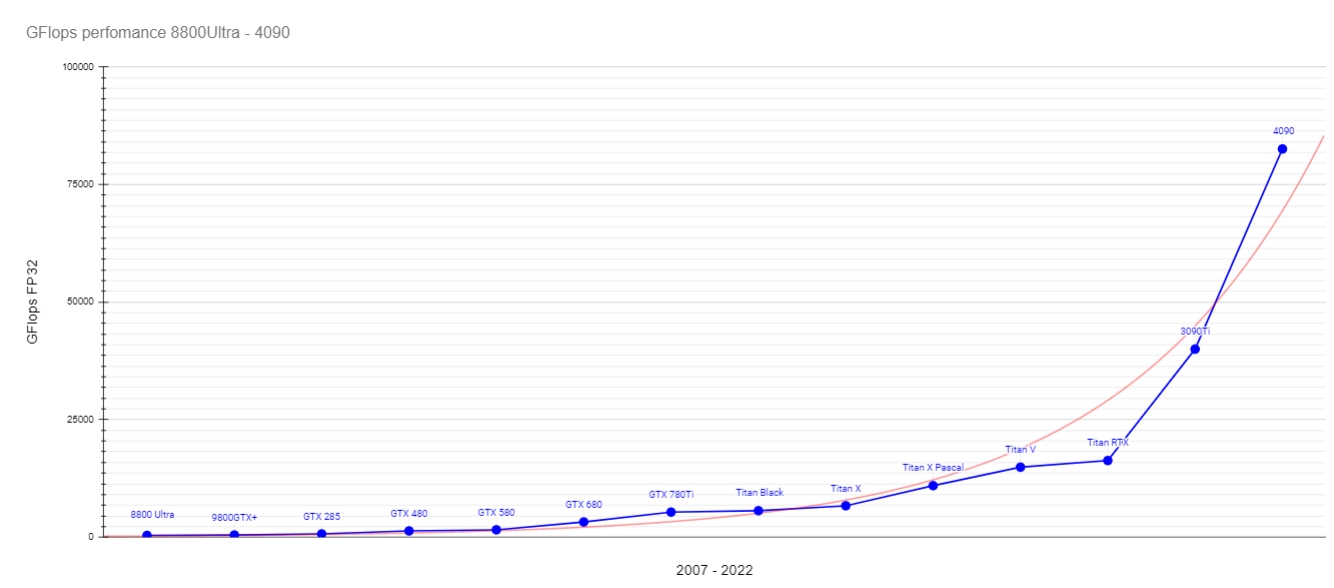

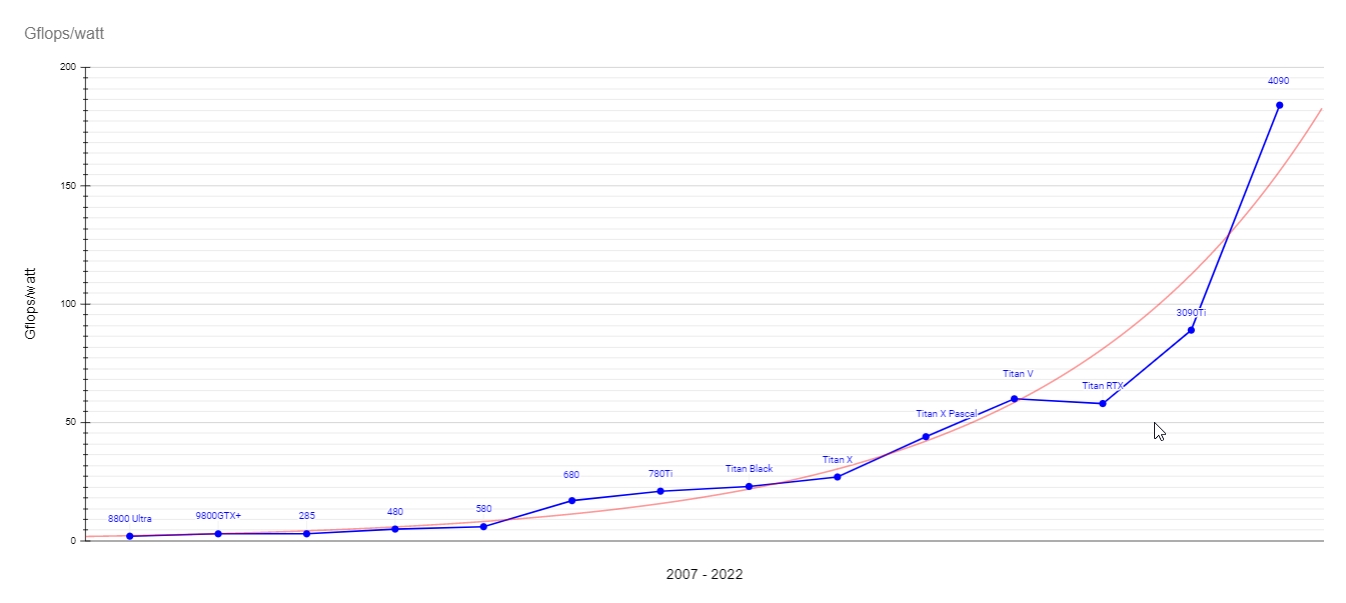

Data was collected on the following characteristics of each video card: number of transistors on the chip, number of transistors per square millimeter of the chip, pixel rate, texture rate, single-precision performance in FLOPS, and FLOPS per watt of TDP. The graphs built from this data show exponential growth of all these indicators over the period studied.
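The article does not say how the trend lines were fitted. One common approach, sketched here as an assumption, is a least-squares line through the base-2 logarithm of each value: the slope is then the number of doublings per year. The function name `fit_doubling_time` and the series below are hypothetical; the data is synthetic, generated to double every 2 years, and is not taken from the source table.

```python
import math


def fit_doubling_time(years, values):
    """Least-squares line through (year, log2(value)); returns years per doubling."""
    logs = [math.log2(v) for v in values]
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    variance = sum((x - mean_x) ** 2 for x in years)
    slope = covariance / variance  # doublings per year
    return 1.0 / slope


# Synthetic series that doubles every 2 years by construction (not measured data).
synthetic_years = [2000, 2002, 2004, 2006, 2008]
synthetic_values = [1e6 * 2 ** ((y - 2000) / 2) for y in synthetic_years]
print(fit_doubling_time(synthetic_years, synthetic_values))
```

On real data, a fitted doubling time close to 2 years would match Moore’s 1975 formulation, and a shorter one would indicate faster growth.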

Graphs. Graphs. Graphs

As you can see, almost all values fall on the exponential curve. Especially noticeable is the sharp jump at the transition to the Ada Lovelace architecture, where the number of transistors on the GPU chip increased 2.7 times in a single generation (1 year).
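To compare a single jump like this with Moore’s 24-month rule, it helps to convert the growth factor into an implied doubling time. A short sketch, where `implied_doubling_time` is an illustrative helper name and the only input is the 2.7 times figure quoted above:

```python
import math


def implied_doubling_time(growth_factor, years):
    """Years needed to double if growth continued at the observed rate."""
    return years * math.log(2) / math.log(growth_factor)


# 2.7x in one year, the figure quoted for the move to Ada Lovelace.
months = implied_doubling_time(2.7, 1.0) * 12
print(f"{months:.1f} months per doubling")
```

This works out to roughly 8.4 months per doubling, well ahead of the 24-month rule, though a single generation is not enough to establish a trend.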

The transistor density graph shows that, after a period of stagnation, density jumped sharply and returned to exponential growth with the release of the Ampere architecture (3090 Ti). The transition to Ada Lovelace then pushed density above the expected exponential trend. This illustrates the so-called “Huang’s law”, according to which GPU performance grows faster than CPU performance (which is expected to grow exponentially or even slightly slower).

Pixel rate and texture rate also show exponential growth, although they are not important performance indicators for modern video cards.

In my opinion, the most interesting indicator is performance in FLOPS, since it expresses pure mathematical performance and is what matters for tasks not directly related to graphics. As we can see, it also follows exponential growth. Note that this graph only includes video cards starting from the 8800 Ultra, since this figure is not available for older models.

Another important metric is performance per watt. It is often the one that causes the most concern, since power consumption and heat dissipation in modern video cards have increased significantly compared to previous generations. Despite this, performance per watt is also growing exponentially, so this concern seems to me not entirely justified.
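The reasoning is that efficiency keeps improving as long as performance grows faster than power draw. A small sketch under the assumption that both quantities grow exponentially; the helper name `efficiency_doubling_time` and the doubling periods in the example are placeholders, not values from the dataset.

```python
def efficiency_doubling_time(perf_doubling_years, power_doubling_years):
    """Doubling time of performance per watt when performance and power both
    grow exponentially; returns None if efficiency is not improving."""
    # Dividing two exponentials subtracts their rates (in doublings per year).
    rate = 1.0 / perf_doubling_years - 1.0 / power_doubling_years
    return 1.0 / rate if rate > 0 else None


# Placeholder numbers: performance doubling every 2 years, power every 6 years.
print(efficiency_doubling_time(2.0, 6.0))
```

With these placeholder inputs, performance per watt still doubles about every 3 years even though power consumption itself keeps rising.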

Conclusion

In summary, the concern about a slowdown in the growth of chip performance appears unfounded. Performance and the number of transistors on a chip not only follow exponential growth but, in the latest generation, even exceed it. Therefore, we can say that Moore’s law is dead and Huang’s law has come to replace it. At least in the GPU realm.

In the future, we plan to release a similar study of Apple’s mobile SoCs.

Link to table with source data