How we dragged a neural network into the browser

Hello, comrades! I want to tell the story of how I ported a neural network to the browser.

The task came from my friends at the institute, INM RAS. They have a frontend where a doctor uploads a CT scan. In the web interface, the doctor is asked to select the region containing the heart. This region is then sent to the server, where the aortic graph is segmented algorithmically for further analysis.

I was asked to build a neural network that selects the 3D region containing the heart, and it had to take no more than 2-3 seconds.

Sending the entire CT volume to the server just to get the coordinates is wasteful: a CT scan usually consists of 600-800 frames of 512×512 pixels. So my suggestion to run the model in the browser came in handy.
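To put a number on "wasteful", here is a quick estimate of the raw upload size, assuming 16-bit voxels (typical for CT, but not stated in the article), compared with the six integers that describe a 3D box. `volume_bytes` is a hypothetical helper; the `static_assert`s check the arithmetic at compile time.

```cpp
#include <cstdint>

// Raw size of a CT volume in bytes.
// Assumption: voxels are stored as 16-bit integers (2 bytes each); the
// article only gives the frame count and the frame resolution.
constexpr std::uint64_t volume_bytes(std::uint64_t frames, std::uint64_t width,
                                     std::uint64_t height,
                                     std::uint64_t bytes_per_voxel = 2) {
    return frames * width * height * bytes_per_voxel;
}

constexpr std::uint64_t kMiB = 1024ull * 1024ull;

// 600-800 frames of 512x512 pixels, as described in the article.
static_assert(volume_bytes(600, 512, 512) == 300 * kMiB, "600 frames = 300 MiB");
static_assert(volume_bytes(800, 512, 512) == 400 * kMiB, "800 frames = 400 MiB");

// A 3D box needs only six integers (min/max along x, y and z).
constexpr std::uint64_t box_bytes = 6 * sizeof(std::int32_t);  // 24 bytes
```

So the server would receive hundreds of megabytes per scan to compute a result that fits in a few dozen bytes.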

We treated the task as a segmentation problem and used MobileNetV3 as the model. First, we took its version from the segmentation_models.pytorch package (https://github.com/qubvel/segmentation_models.pytorch), but it ran too slowly. Then we found a reduced version in the MediaPipe package (https://google.github.io/mediapipe/solutions/selfie_segmentation.html), but its execution time was still unsatisfactory. So I cut down its layers and got a suitable network with 50k parameters that weighs only 210 KB. The input image size and the network size were chosen by balancing the time needed to process the entire batch of images at once against acceptable accuracy. As a result, we trained on 64×64 images.
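The article does not describe how the per-slice masks are turned into the 3D region. One plausible post-processing step, assumed here rather than taken from the project, is to take the bounding box of all foreground pixels across the stack of 64×64 masks and then scale it back to the 512×512 frames. `Box3D`, `bounding_box` and `scale_in_plane` are hypothetical names.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <vector>

// Hypothetical 3D bounding box in voxel coordinates (inclusive bounds).
struct Box3D {
    int x0, y0, z0;
    int x1, y1, z1;
};

// Bounding box of all non-zero pixels in a stack of binary masks.
// `masks` holds `depth` slices of `width * height` bytes, stored slice by
// slice and row by row. Returns std::nullopt if no pixel is set.
std::optional<Box3D> bounding_box(const std::vector<std::uint8_t>& masks,
                                  int width, int height, int depth) {
    Box3D box{width, height, depth, -1, -1, -1};
    bool found = false;
    for (int z = 0; z < depth; ++z) {
        for (int y = 0; y < height; ++y) {
            for (int x = 0; x < width; ++x) {
                const std::size_t idx =
                    (static_cast<std::size_t>(z) * height + y) * width + x;
                if (masks[idx] == 0) continue;
                found = true;
                box.x0 = std::min(box.x0, x);
                box.y0 = std::min(box.y0, y);
                box.z0 = std::min(box.z0, z);
                box.x1 = std::max(box.x1, x);
                box.y1 = std::max(box.y1, y);
                box.z1 = std::max(box.z1, z);
            }
        }
    }
    if (!found) return std::nullopt;
    return box;
}

// Maps the in-plane part of a box from mask resolution (e.g. 64x64) back to
// frame resolution (e.g. 512x512, factor 8). The slice axis is unchanged.
Box3D scale_in_plane(const Box3D& b, int factor) {
    return Box3D{b.x0 * factor, b.y0 * factor, b.z0,
                 (b.x1 + 1) * factor - 1, (b.y1 + 1) * factor - 1, b.z1};
}
```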

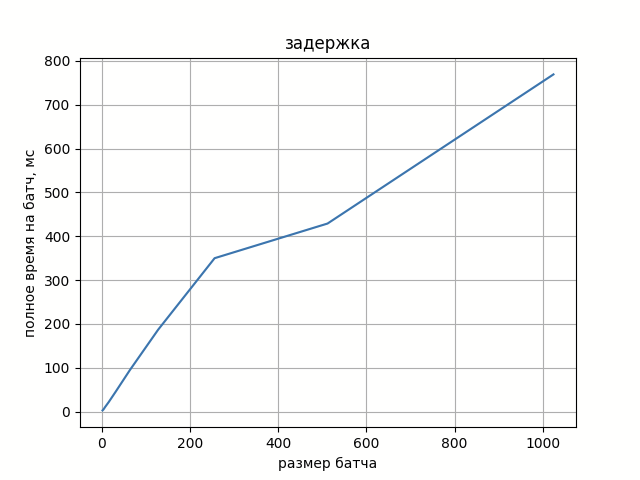

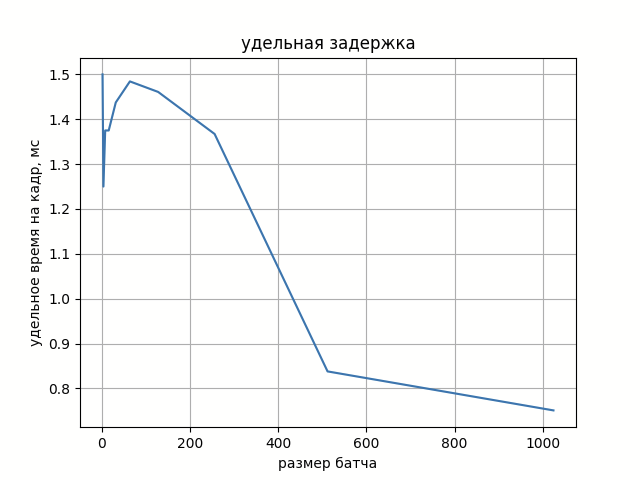

Figure 1 shows how the processing time of a batch depends on its size, and Figure 2 shows the per-frame processing time within a batch.

The latency of processing a full batch grows almost linearly with its size, while the per-frame latency fluctuates noisily around a roughly constant value.
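The two observations are two views of the same model: if batch latency follows t(n) ≈ a + b·n, then the per-frame latency t(n)/n = a/n + b settles near the constant b as the batch grows. The sketch below is a plain least-squares fit that recovers a (fixed overhead per call) and b (marginal cost per frame) from measured points. The article does not say a line was fitted; `fit_latency` and `LatencyFit` are hypothetical names.

```cpp
#include <cstddef>
#include <stdexcept>
#include <vector>

// Result of fitting t(n) = overhead + per_frame * n.
struct LatencyFit {
    double overhead_ms;   // intercept: fixed cost of one batch call
    double per_frame_ms;  // slope: marginal cost of one extra frame
};

// Ordinary least-squares fit of batch latency against batch size.
LatencyFit fit_latency(const std::vector<double>& batch_sizes,
                       const std::vector<double>& latencies_ms) {
    const std::size_t n = batch_sizes.size();
    if (n < 2 || latencies_ms.size() != n)
        throw std::invalid_argument("need at least two paired measurements");
    double mean_x = 0.0, mean_y = 0.0;
    for (std::size_t i = 0; i < n; ++i) {
        mean_x += batch_sizes[i];
        mean_y += latencies_ms[i];
    }
    mean_x /= static_cast<double>(n);
    mean_y /= static_cast<double>(n);
    double cov = 0.0, var = 0.0;
    for (std::size_t i = 0; i < n; ++i) {
        const double dx = batch_sizes[i] - mean_x;
        cov += dx * (latencies_ms[i] - mean_y);
        var += dx * dx;
    }
    if (var == 0.0)
        throw std::invalid_argument("batch sizes must not all be equal");
    const double slope = cov / var;
    return LatencyFit{mean_y - slope * mean_x, slope};
}
```

A small intercept relative to the slope is what makes the per-frame curve in Figure 2 look flat.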

Adding the image preprocessing time (downscaling from 512×512 to 64×64), we get 1.5 seconds in total, which fits the required limit.
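The downscaling step can be sketched in plain C++ as box averaging by an integer factor (8 when going from 512 to 64). In the project's OpenCV build this would more likely be a single `cv::resize(src, dst, cv::Size(64, 64), 0, 0, cv::INTER_AREA)` call; the article does not say which interpolation was used, and for an integer factor `INTER_AREA` computes essentially this block mean. The 8-bit grayscale input and the [0, 1] scaling are assumptions about the model's input format, and `downscale_box` is a hypothetical name.

```cpp
#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <vector>

// Downscales a single-channel 8-bit frame by an integer factor using box
// (block) averaging: each output pixel is the mean of a factor x factor
// block of input pixels, scaled to [0, 1] (assumed model normalization).
std::vector<float> downscale_box(const std::vector<std::uint8_t>& src,
                                 int width, int height, int factor) {
    if (factor <= 0 || width % factor != 0 || height % factor != 0)
        throw std::invalid_argument("size must be divisible by factor");
    if (src.size() != static_cast<std::size_t>(width) * height)
        throw std::invalid_argument("buffer size does not match dimensions");
    const int out_w = width / factor;
    const int out_h = height / factor;
    const float norm = 1.0f / (255.0f * factor * factor);
    std::vector<float> dst(static_cast<std::size_t>(out_w) * out_h);
    for (int oy = 0; oy < out_h; ++oy) {
        for (int ox = 0; ox < out_w; ++ox) {
            unsigned sum = 0;
            for (int dy = 0; dy < factor; ++dy) {
                // Start of the input row that belongs to this block.
                const std::size_t row =
                    static_cast<std::size_t>(oy * factor + dy) * width;
                for (int dx = 0; dx < factor; ++dx)
                    sum += src[row + ox * factor + dx];
            }
            dst[static_cast<std::size_t>(oy) * out_w + ox] = sum * norm;
        }
    }
    return dst;
}
```

Each frame is independent, so a whole stack of frames can be processed in a loop and packed into one batch tensor.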

The code was written in C++, and Emscripten (https://emscripten.org/) was used to port it to the browser. Emscripten makes it fairly easy to build a CMake project into a WebAssembly module that can be loaded from JS code, but all dependencies have to be built statically for wasm. This project needed the OpenCV and onnxruntime libraries. Fortunately, the developers of both provide build instructions for this: https://onnxruntime.ai/docs/build/web.html and https://docs.opencv.org/4.x/d4/da1/tutorial_js_setup.html. To change the default OpenCV installation path, you can edit https://github.com/opencv/opencv/blob/4.x/platforms/js/build_js.py.
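A minimal sketch of how a C++ function can be exposed to JS with Emscripten. `detect_heart_region` and its signature are hypothetical, and the inference itself is stubbed out; the point is the export mechanics. The `#ifdef` lets the same file also compile natively, which is convenient for testing outside the browser.

```cpp
#include <cstdint>

// With emcc, EMSCRIPTEN_KEEPALIVE keeps the symbol from being removed as
// dead code and exports it to JS. In a native build the macro is empty.
#ifdef __EMSCRIPTEN__
#include <emscripten.h>
#else
#define EMSCRIPTEN_KEEPALIVE
#endif

// Hypothetical entry point called from JS. `frames` points to `num_frames`
// grayscale frames of `width * height` bytes that JS copied into the wasm
// heap; `out_box` points to 6 ints that receive x0, y0, z0, x1, y1, z1.
// Returns 0 on success and -1 on invalid arguments.
extern "C" EMSCRIPTEN_KEEPALIVE int detect_heart_region(
    const std::uint8_t* frames, int num_frames, int width, int height,
    std::int32_t* out_box) {
    if (frames == nullptr || out_box == nullptr || num_frames <= 0 ||
        width <= 0 || height <= 0)
        return -1;
    // Placeholder: a real build would downscale the frames, run the ONNX
    // model through onnxruntime and turn the masks into a box. Here the
    // whole volume is returned so that the sketch stays self-contained.
    out_box[0] = 0;
    out_box[1] = 0;
    out_box[2] = 0;
    out_box[3] = width - 1;
    out_box[4] = height - 1;
    out_box[5] = num_frames - 1;
    return 0;
}
```

On the JS side, the caller would allocate a buffer with `Module._malloc`, copy the frames into `Module.HEAPU8`, and call the function through `Module.ccall` or `Module.cwrap`. In recent Emscripten versions, `_malloc`, `_free` and `ccall`/`cwrap` have to be exported explicitly, for example with `-sEXPORTED_FUNCTIONS=_malloc,_free` and `-sEXPORTED_RUNTIME_METHODS=ccall,cwrap` in the link flags.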

The build produces three files: main.js, main.wasm and main.data. The .data file contains the bundled files (in this case, the neural network weights), the .wasm file contains the compiled C++ code, and main.js is a wrapper that loads and instantiates the module. The build can also pack everything into a single .js file, but then the .data and .wasm files are base64-encoded and embedded in the JS file, which takes considerably more space, so we did not use this option.
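The space penalty of the single-file build comes from base64 itself: every 3 input bytes become 4 output characters, roughly 33% more. The check below applies this to the model weights, treating the article's 210 KB as 210 × 1024 bytes (an assumption); the compiled .wasm would grow by the same ratio. `base64_size` is a hypothetical helper.

```cpp
#include <cstdint>

// Size in bytes of the padded base64 encoding of `n` raw bytes:
// every started group of 3 input bytes becomes 4 output characters.
constexpr std::uint64_t base64_size(std::uint64_t n) {
    return 4 * ((n + 2) / 3);
}

// Example: the ~210 KB of network weights mentioned in the article.
constexpr std::uint64_t kWeightsBytes = 210 * 1024;  // 215040 bytes
static_assert(base64_size(kWeightsBytes) == 286720,
              "about 280 KB once base64-encoded");
```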

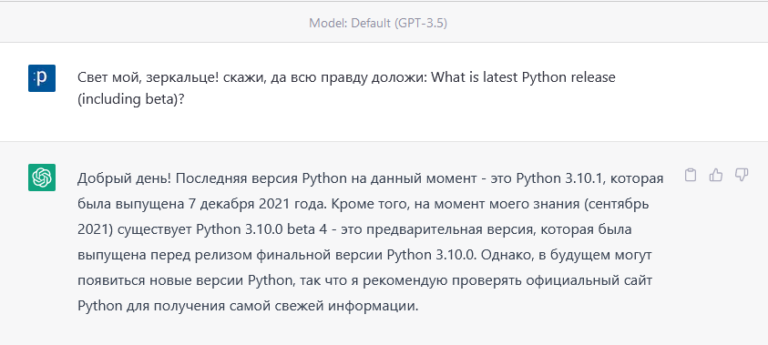

Besides this approach, you can use tensorflow.js or the MediaPipe framework (https://habr.com/ru/post/502440/), which comes with a collection of gorgeous demos (https://mediapipe.dev/). Alternatively, you can build TFLite (C++) for wasm: older TFLite versions build with CMake, while the latest version builds only as a Bazel project. But I really missed a good repository with an example of building a simple pipeline. That's how this article came about.

The final code and a Dockerfile for reproducibility are here: https://github.com/DmitriyValetov/onnx_wasm_example. The demo has two modes: the first processes 200 target images and displays the detections; the second is a speed test with graphs (your numbers may differ from those in this article, since they depend on your hardware).
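This article does not show how the demo's speed test measures time. As a rough native C++ sketch of such a measurement: one untimed warm-up call per batch size, then the mean over several repeats, reporting both batch and per-frame latency as in Figures 1 and 2. `benchmark_batches` and the `run_batch` callback are hypothetical stand-ins for the real inference call.

```cpp
#include <chrono>
#include <cstddef>
#include <functional>
#include <ratio>
#include <vector>

// One measurement point: batch size and mean wall-clock latency.
struct BatchTiming {
    std::size_t batch_size;
    double batch_ms;      // mean latency of processing the whole batch
    double per_frame_ms;  // batch_ms / batch_size
};

// Times `run_batch(n)` for each batch size in `sizes`, averaging over
// `repeats` runs after one warm-up call. Zero sizes are skipped.
std::vector<BatchTiming> benchmark_batches(
    const std::vector<std::size_t>& sizes, int repeats,
    const std::function<void(std::size_t)>& run_batch) {
    using Clock = std::chrono::steady_clock;
    std::vector<BatchTiming> results;
    for (std::size_t n : sizes) {
        if (n == 0 || repeats <= 0) continue;
        run_batch(n);  // warm-up run, not timed
        const auto start = Clock::now();
        for (int r = 0; r < repeats; ++r) run_batch(n);
        const std::chrono::duration<double, std::milli> elapsed =
            Clock::now() - start;
        const double batch_ms = elapsed.count() / repeats;
        results.push_back({n, batch_ms, batch_ms / static_cast<double>(n)});
    }
    return results;
}
```

Using a steady clock rather than the system clock avoids jumps if the wall-clock time is adjusted during the run.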

In the future, I'd like to benchmark a simple network, perhaps even this same one, across different frameworks: onnxruntime, TFLite and MXNet. OpenVINO has not been ported to wasm yet, but this was proposed as a Google Summer of Code project (https://github.com/openvinotoolkit/openvino/wiki/GoogleSummerOfCode/628ff2fe7f78bc4e07ecd473042cae8374aacba3).