How to migrate 50 Oracle databases

Even technologically advanced companies may not just have old databases, but very old ones. They continue to work, but their antiquity can one day create a lot of problems. And it’s good if these problems can be resolved in advance, before the ecosystem of already unsupported software begins to crumble. We will tell you how the Jet Infosystems team updated more than 50 outdated Oracle DBMSs of one of its clients in order to avoid catastrophic consequences.

If you try hard, then in large companies you can find a Paleolithic DBMS. The IT staff is full of other tasks, and the databases … they are up and running, technical debt has been building up over the years. Used “old ladies” DBMS turn out to be without the support of the manufacturer, it is impossible to use modern solutions with them, to connect additional functionality, etc. And one day the update still comes.

A similar scenario played out in our client. The organization had more than 50 Oracle DBMS of different versions. They worked on dedicated servers, long removed from the vendor’s support, in various hypervisors, including frankly outdated versions. Some lived on Oracle VM, others on VMware, and still others on Hyper-V.

The DBs were not too heavily loaded, but they worked closely together with each other, and a significant part of the equipment “under” them worked to the limit of their capabilities. Real business processes cover several areas of the company’s activities at once, and in order to reflect them, dependencies were built between the databases and a constant exchange of information was established. Therefore, in the event (God forbid!) The fall of one base, the work of several more would be disrupted.

Background

This story unfolded in 2019 when Oracle announced an automatic change to the source code. As a result, data exchange between DBMSs of different versions via Database Link could stop. And Database Link was widely used by our client just for interaction between DBMS, linking different data sets. In general, the giant decided to update the DBMS versions to the most optimal and up-to-date at the time to ensure the Database Link will work after the Oracle code update.

Analysis of the current situation added additional tasks to us. First, it turned out that the existing configuration was not fully fault-tolerant. In case of trouble, the problem of restoring the operation of the DBMS and the system as a whole could become an impossible mission. And secondly, in parallel with the database migration, the customer proposed to update the Oracle Forms and Reports software, thereby significantly complicating the overall architecture of the application layer.

We transfer the DBMS to the new infrastructure

However, the DBMS migration was not easy either. The “update to newest version” approach did not work here because it did not take into account many important factors. Together with the client’s specialists, we came to the conclusion that it was necessary to update the DBMS to versions 11.2.0.4 and 12.2.0.1, although at the time of the project, the 18th version was already available. It was versions 11.2.0.4 and 12.2.0.1 that turned out to be the most familiar and understandable to the customer, all the applied software used exactly worked in them.

It was decided to host the DBMS in a single virtual environment to provide the required level of flexibility and fault tolerance. With the transition to a shared hyper-converged infrastructure, we have retired several dozen legacy x86 servers. And since the transactional load on the client’s DBMS was not so high, the databases were placed on a five-node VMware / vSAN virtualization cluster.

The migration turned out to be laborious. We dealt with databases not only of different ages, but also with different functionality (for example, for user authorization). They could not simply be transferred to a new version of the DBMS – they often had to set up everything from scratch, reinventing the wheel.

First, after analyzing all the incoming data, we managed to “sweat and blood” agree on the golden image of the OS, on which the required versions of the Oracle DBMS could run. It was SUSE Linux Enterprise Server 12.3 with preinstalled binaries required for Oracle packages, as well as the Enterprise Manager and NetBackup agents used by the company. Then the process was repeated for each database according to the following scenario:

We created a new virtual machine for each database. Based on the results of preliminary sizing, the required number of virtual processors and the amount of memory were selected for it.

Filled in a copy of the database. Considering that we had to deal with the most different versions of the DBMS (from 8 to 12), they had to be “uploaded” in different ways. In some cases, the “export-import” scheme worked, in others we restored the database from a backup on a new VM, and sometimes even copied it from the product.

After checking the functionality, the developers connected to testing, and we created several clones of the database, updated to the required versions and tested the new DBMS with real data.

Ordinary users were involved in testing, who checked the operation of the DBMS “in battle”, using those functions to which they were accustomed.

As a result, only after going through all the previous steps (sometimes more than once), we finally migrated the productive base – after such a serious preparation, we worked according to a detailed plan.

As a result, the migration time for each DBMS did not exceed 2-4 hours. It is important that all databases were migrated on a schedule that took into account changes to the Oracle source code. Thus, we managed to transfer critical databases from the Database Link point of view in the first place – problems with data exchange were avoided.

Failover configuration

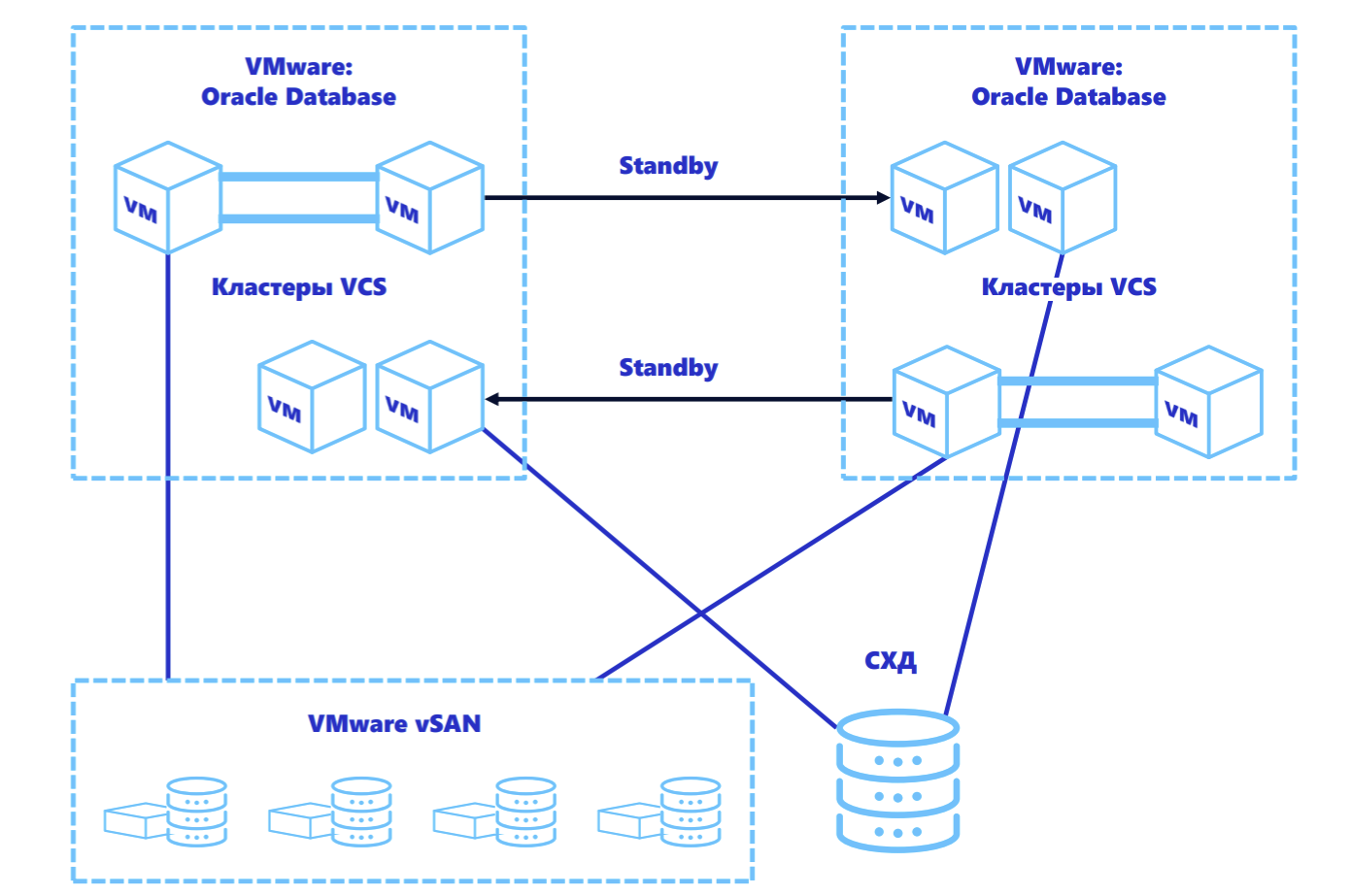

On the new platform for the DBMS, we have reached a new level of fault tolerance:

Business Critical databases were protected using Oracle Data Guard (Physical Standby). And the most critical DBMS received additional protection using Veritas HA Cluster.

The least critical Office Productivity databases have been protected at the backup level with the ability to quickly recover in the event of a failure on a new VM.

A separate array and a VMware / vSAN hyperconverged environment were used as a data storage system. As a result, the working DBMS and backups were divided between vSAN and the array so as to distribute the load, but at the same time exclude the possibility of failure of both copies at the same time.

The only problem at this stage was the use of Veritas HA Cluster on the VMware platform. In order for the cluster to work under VMware, it is necessary to allocate shared disks to virtual machines (in VMware they are called Multi-Writer). This leads to the fact that all the great VMware features that administrators are used to – dynamically changing VM resources, cloning and migrating, even just backing up – stop working. But this story deserves a separate post.

Dealing with Middleware: Expectation / Reality

The project would be much simpler if it weren’t for the Middleware updates. The fact is that the old version of Oracle Forms and Reports used a two-tier architecture with client-server interaction, and the new version of this software required the implementation of a three-tier architecture and the installation of an application server.

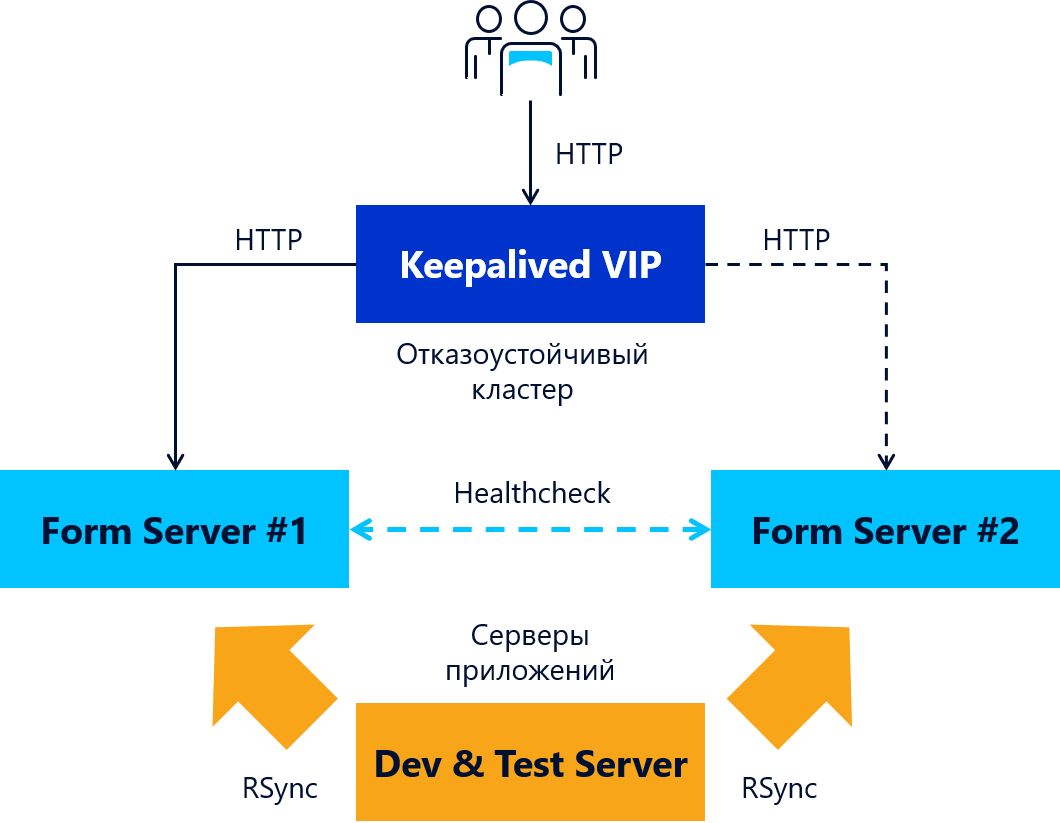

We came up with a “nice” solution: to run the application server in Active-Active mode, using the equipment available to the customer – NetScaler. Having suffered with the settings for some time, we put into practice the idea of such balancing. But at the last moment, they abandoned the plan: although the solution was successfully tested, with an increase in user load, it began to issue “floating” bugs, slowing down some sessions. In general, a more trivial solution had to be used to cluster the application server. For application servers, we provided fault tolerance using the open source keepalived clustering solution (Figure 2).

conclusions

During the project, we once again made sure that updating outdated and unsupported software (in this case Oracle Forms and Reports) can become the most problematic part of the project – the difficulties of database migration were understandable, and we were ready for them, because we regularly do similar projects.

To migrate over 50 (and taking into account all copies and clones – more than a hundred) databases to a single platform and unified version, a huge preparatory work had to be done. Redundancy in a virtual environment became the foundation for the required level of availability for each DBMS, and most importantly, it turned out to return the manageability of critical infrastructure. The “old women” of the DB have safely survived the relocation to the “new body” and are now full of strength: they can boast of fault tolerance and correct backup.

Now, looking at availability rates of more than 99%, it becomes creepy that the previously working DBMS were on the verge of a foul. In the event of any failure, recovery would take several days, and no one could guarantee its completeness. Of course, it is better to launch projects for upgrading versions of infrastructure software in advance, before the sky crashed to earth. In this case, the trigger for this was an update to the Oracle Database source code. And it is very good that the database was transferred to the new platform without any real incidents with data loss.

Authors:

Alexey Struchenko, Head of the DB “Jet Infosystems”

Ksenia Aleksandrova, Project Manager of Jet Infosystems