How Google’s BigQuery democratized data analysis. Part 2

Read the first part

Data management

Strong Data Governance is the main tenet of Twitter Engineering. As we integrate BigQuery into our platform, we are focusing on data discovery, access control, security, and privacy.

To discover and manage data, we have expanded our Data Access Layer – DAL) to provide tools for both local data and Google Cloud data, providing a consistent interface and API for our users. As Google Data Catalog moves towards general availability, we will include it in our projects to provide users with features such as column search.

BigQuery makes it easy to share and access data, but we needed some control to prevent data exfiltration. Among other tools, we have chosen two functions:

- Domain restricted sharing: A beta feature that prevents users from sharing BigQuery datasets with users outside of Twitter.

- VPC service controls: A control that prevents data exfiltration and requires users to access BigQuery from known IP ranges.

We have implemented Authentication, Authorization and Auditing (AAA) security requirements as follows:

- Authentication: We used GCP user accounts for ad hoc requests and service accounts for work requests.

- Authorization: We required each dataset to have an owner service account and a group of readers.

- Auditing: We exported BigQuery stack driver logs, which contained detailed query execution information, to a BigQuery dataset for easy analysis.

To ensure that Twitter users’ personal data is properly handled, we must register all BigQuery datasets, annotate personal data, maintain proper storage, and delete (clean up) data that has been deleted by users.

We reviewed Google Cloud Data Loss Prevention APIwho uses machine learning to classify and edit sensitive data, but chose to manually annotate the dataset due to accuracy. We plan to use the Data Loss Prevention API to complement the custom annotation.

On Twitter, we’ve created four privacy categories for BigQuery datasets, listed here in decreasing order of sensitivity:

- Highly sensitive datasets are available as needed based on the principle of least privilege. Each dataset has a separate group of readers, and we will track the use of individual accounts.

- Medium sensitivity datasets (one-way pseudonyms using salted hashing) do not contain Personally Identifiable Information (PII) and are available to a larger group of employees. This is a good balance between privacy and data usefulness considerations. This allows employees to perform analysis tasks such as calculating the number of users who have used a feature without knowing who the real users are.

- Low sensitivity datasets with all user identifying information. This is a good privacy approach, but it cannot be used for user-level analysis.

- Public datasets (released outside of Twitter) are available to all Twitter employees.

Before registration, we used scheduled tasks to enumerate BigQuery datasets and register them in the Data Access Layer (DAL), Twitter’s metadata repository. Users will annotate datasets with confidentiality information as well as retention periods. As for cleaning, we estimate the performance and cost of two options: 1. Cleaning up datasets in GCS using tools like Scalding and loading them into BigQuery; 2. Using BigQuery DML Operators. We will probably use a combination of both methods to meet the requirements of different groups and data.

System functionality

Because BigQuery is a managed service, there was no need to involve Twitter’s SRE team in systems management or on-duty duties. It was easy to provide more capacity for both storage and computing. We could change slot reservations by creating tickets in Google support. We figured out what could be improved, such as self-service for slot allocation and better monitoring dashboards, and passed those requests to Google.

The cost

Our preliminary analysis showed that the cost of queries for BigQuery and Presto were at the same level. We have purchased slots for fixed price to have a stable monthly cost instead of payment on demand for TB of processed data. This decision was also based on feedback from users who didn’t want to think about costs before making each request.

Storing data in BigQuery brought in costs in addition to GCS costs. Tools like Scalding require datasets in GCS, and to access BigQuery, we had to load the same datasets in BigQuery format Capacitor… We are working on a Scalding connection with BigQuery datasets that will eliminate the need to store datasets in both GCS and BigQuery.

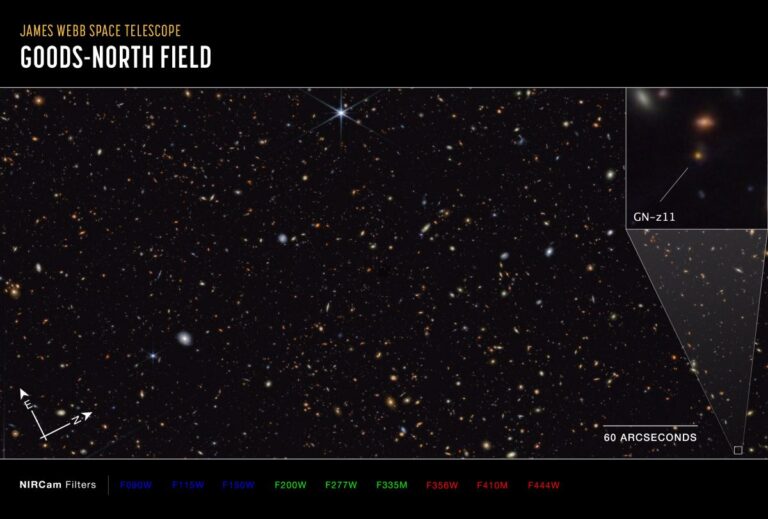

For rare cases that required infrequent requests for tens of petabytes, we decided that storing datasets in BigQuery was not cost effective and used Presto to directly access datasets in GCS. To do this, we are looking at BigQuery External Data Sources.

Next steps

We’ve noticed a lot of interest in BigQuery since the alpha release. We’re adding more datasets and more commands to BigQuery. We are developing connectors for data analysis tools such as Scalding for reading and writing to BigQuery storage. We’re looking at tools like Looker and Apache Zeppelin for generating corporate quality reports and notes using BigQuery datasets.

The partnership with Google has been very productive and we are delighted to continue and develop this partnership. We worked with Google to implement our own Partner Issue Trackerto send queries to Google directly. Some of them, like BigQuery Parquet Downloader, are already implemented by Google.

Here are some of our high-priority feature requests for Google:

- Tools for easy data ingestion and support for LZO-Thrift format.

- Hourly segmentation

- Access control improvements such as table, row, and column permissions.

- BigQuery External Data Sources with Hive Metastore integration and support for LZO-Thrift format.

- Improved data catalog integration in BigQuery UI

- Self-service for slot allocation and monitoring.

Conclusion

Democratizing data analysis, visualization, and machine learning in a safe way is a top priority for the Data Platform team. We identified Google BigQuery and Data Studio as tools that can help us achieve this goal, and we released BigQuery Alpha for the entire company last year.

We found that BigQuery queries were simple and effective. We used Google tools for simple pipelines to receive and transform data, but for complex pipelines we had to create our own Airflow infrastructure. In data management, BigQuery services for authentication, authorization, and auditing meet our needs. We needed a lot of flexibility to manage metadata and maintain confidentiality, and we had to build our own systems. BigQuery, being a managed service, was easy to use. Request costs were similar to existing tools. Storing data in BigQuery has incurred costs in addition to the cost of GCS.

Overall, BigQuery performs well for general SQL analysis. We see a lot of interest in BigQuery, and we are working to migrate more datasets, engage more teams, and build more pipelines with BigQuery. Twitter uses a variety of data, which will require a mix of tools like Scalding, Spark, Presto, and Druid. We intend to continue to build on our data analysis tools and provide clear guidance to our users on how best to use our offerings.

Words of gratitude

I would like to thank my collaborators and teammates, Anjou Jha and Will Pascucci, for their great collaboration and hard work on this project. I would also like to thank the engineers and managers from several teams on Twitter and Google who helped us and BigQuery Twitter users who provided valuable feedback.

If you are interested in working on these tasks, check out our vacancies on the Data Platform team.

DWH Data Quality – Data Warehouse Consistency