How Google’s BigQuery democratized data analysis. Part 1

Every day, over a hundred million people visit Twitter to find out and discuss what’s going on in the world. Every tweet and every other user action generates an event available for internal data analysis on Twitter. Hundreds of employees are analyzing and visualizing this data, and improving their experience is a top priority for the Twitter Data Platform team.

We believe that users with a wide range of technical skills should be able to find data and have access to well-performing SQL-based analysis and visualization tools. This would allow a whole new group of users with less technical bias, including data analysts and product managers, to extract information from the data, allowing them to better understand and use the power of Twitter. This is how we democratize Twitter data analysis.

As our tools and capabilities for internal data analysis have improved, we have seen improvements in the Twitter service. However, there is still room for improvement. Current tools like Scalding require programming experience. SQL-based analysis tools like Presto and Vertica have performance issues on a large scale. We also have the problem of spreading data across multiple systems without constant access to it.

Last year we announced new collaboration with Google, within which we transfer parts of our data infrastructure on Google Cloud Platform (GCP). We have concluded that Google Cloud tools Big Data can help us with our initiatives to democratize analysis, visualization and machine learning on Twitter:

- BigQuery: enterprise data warehouse with SQL engine based Dremel, which is famous for its speed, simplicity and copes with machine learning…

- Data Studio: a big data visualization tool with collaboration features like Google Docs.

In this article, you will learn about our experience with these tools: what we have done, what we have learned, and what we will do next. We will now focus on batch and interactive analytics. We will discuss real-time analytics in the next article.

History of Twitter data storage

Before diving into BigQuery, it’s worth briefly retelling the history of Twitter data storage. In 2011, Twitter data analysis was done in Vertica and Hadoop. To create MapReduce Hadoop jobs, we used Pig. In 2012, we replaced Pig with Scalding, which had a Scala API with advantages such as the ability to create complex pipelines and ease of testing. However, for many data analysts and product managers who were more comfortable working with SQL, it was a steep learning curve. Around 2016, we started using Presto as the SQL interface for Hadoop data. Spark offered a Python interface, which makes it a good choice for ad hoc data mining and machine learning.

Since 2018, we have used the following tools for data analysis and visualization:

- Scalding for production conveyors

- Scalding and Spark for ad hoc data analysis and machine learning

- Vertica and Presto for ad hoc and interactive SQL analysis

- Druid for low interactive, exploration and low latency access to time series metrics

- Tableau, Zeppelin and Pivot for data visualization

We found that while these tools offer very powerful capabilities, we had difficulty making these capabilities available to a wider audience on Twitter. As we expand our platform with Google Cloud, we are focusing on simplifying our analytics tools across Twitter.

Google BigQuery Data Warehouse

Several teams on Twitter have already included BigQuery in some of their production pipelines. Using their experience, we began evaluating BigQuery’s capabilities across all Twitter use cases. Our goal was to offer BigQuery to the entire company, and to standardize and support it within the Data Platform toolbox. This was difficult for many reasons. We needed to develop an infrastructure to reliably receive large amounts of data, support data management throughout the company, ensure proper access control, and ensure customer privacy. We also had to build systems for resource allocation, monitoring, and chargebacks so teams can use BigQuery effectively.

In November 2018, we released an alpha release of BigQuery and Data Studio for the entire company. We have offered Twitter employees some of our most used personal data cleared spreadsheets. BigQuery has been used by over 250 users from a variety of teams including engineering, finance, and marketing. Most recently, they ran about 8,000 requests, processing about 100 PB per month, not counting scheduled requests. With very positive feedback, we decided to move forward and offer BigQuery as our primary resource for interacting with data on Twitter.

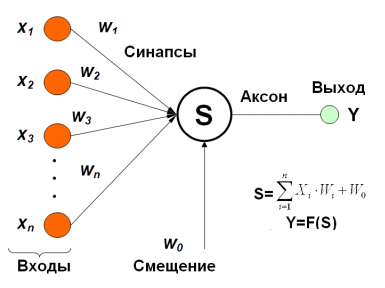

Here is a diagram of the high-level architecture of our Google BigQuery data warehouse.

We copy data from on-premises Hadoop clusters to Google Cloud Storage (GCS) using the internal Cloud Replicator tool. We then use Apache Airflow to create pipelines that use “bq_load»To load data from GCS into BigQuery. We use Presto to query Parquet or Thrift-LZO datasets in GCS. BQ Blaster is an internal Scalding tool for uploading Vertica and Thrift-LZO HDFS datasets to BigQuery.

In the following sections, we will discuss our approach and knowledge in the areas of ease of use, performance, data management, system health, and cost.

Ease of use

We found it easy for users to get started with BigQuery as it did not require software installation and users could access it through an intuitive web interface. However, users needed to become familiar with some of the features and concepts of GCP, including resources such as projects, datasets, and tables. We have developed tutorials and tutorials to help users get started. With this basic understanding, it is easy for users to navigate datasets, view schema and table data, run simple queries, and visualize results in Data Studio.

Our goal with BigQuery data entry was to ensure smooth loading of HDFS or GCS datasets with one click. We considered Cloud Composer (managed by Airflow) but were unable to use it due to our Domain Restricted Sharing security model (more on this in the Data Management section below). We experimented with using the Google Data Transfer Service (DTS) to organize BigQuery load tasks. While DTS was quick to set up, it was not flexible for building dependency pipelines. For our alpha we have created our own Apache Airflow framework in GCE and are preparing it for production and the ability to support more data sources like Vertica.

To transform data into BigQuery, users create simple pipelines of SQL data using scheduled queries. For complex multi-stage pipelines with dependencies, we plan to use either our own Airflow framework or Cloud Composer along with Cloud Dataflow…

Performance

BigQuery is designed for general purpose SQL queries that process large amounts of data. It is not designed for the low latency, high throughput queries required by a transactional database, or for the low latency time series analysis implemented by Apache Druid… For interactive analytic queries, our users expect a response time of less than one minute. We had to design BigQuery usage to meet these expectations. To ensure predictable performance for our users, we used BigQuery, a feature available to customers at a flat rate, which allows project owners to reserve minimum slots for their queries. Slot BigQuery is a unit of computing power required to execute SQL queries.

We analyzed over 800 queries processing about 1 TB of data each and found that the average execution time was 30 seconds. We also learned that performance is highly dependent on the use of our slot in various projects and tasks. We had to clearly distinguish between our production and ad hoc slot reserves to maintain performance for production use cases and interactive analysis. This greatly influenced our design for slot reservations and project hierarchy.

We will talk about data management, functionality and cost of systems in the coming days in the second part of the translation, and now we invite everyone to free live webinar, within which it will be possible to learn in detail about the course, as well as ask questions to our expert – Egor Mateshuk (Senior Data Engineer, MaximaTelecom).

Read more:

- Data Build Tool or what is common between Data Warehouse and Smoothie

- Delta Lake Dive: Enforcement and Circuit Evolution

- High Speed Apache Parquet in Python with Apache Arrow