Critical software testing methodology

“Safety-critical systems” are systems whose failure or degraded operation (for example, as a result of incorrect or unintended operation) can lead to catastrophic or critical consequences.

Examples of safety-critical systems include aircraft flight control systems, automated trading systems, control systems for nuclear power plant units, and medical systems.

The development and testing of safety-critical systems should address the following aspects:

Traceability to regulatory requirements and to the means of ensuring compliance

Rigorous approach to development and testing

Safety analysis

Redundancy and architectural quality

Focus on quality

High level of documentation (breadth and depth of documentation coverage)

A higher degree of control.
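The traceability aspect in the list above can be illustrated with a small check. This is a minimal sketch, not a prescribed format: the `Requirement` record, the identifiers such as `REQ-001` and `TC-10`, and the clause labels are hypothetical names introduced for illustration. It reports every requirement that has no executed test linked to it.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str    # hypothetical identifier, e.g. "REQ-001"
    clause: str    # regulatory clause the requirement is derived from (placeholder text)
    test_ids: list[str] = field(default_factory=list)  # tests claimed to verify it


def untraced(requirements: list[Requirement], executed_tests: set[str]) -> list[str]:
    """Return the IDs of requirements that no executed test traces to."""
    return [
        r.req_id
        for r in requirements
        if not any(t in executed_tests for t in r.test_ids)
    ]


requirements = [
    Requirement("REQ-001", "clause A", ["TC-10", "TC-11"]),
    Requirement("REQ-002", "clause B", ["TC-20"]),
    Requirement("REQ-003", "clause C"),  # no test linked yet
]
executed = {"TC-10", "TC-20"}

gaps = untraced(requirements, executed)
print(gaps)  # ['REQ-003']
```

In a real project the same check would typically run against the requirements management tool's export rather than hand-written records.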

Risk management, which reduces the likelihood and/or impact of a risk, is essential to the development and testing of safety-critical systems. Suppliers of such systems are generally liable for any costs or damages incurred, and testing is used to reduce this liability. Test results provide evidence that the system has been adequately tested to avoid catastrophic or critical consequences. Testing of safety-critical systems usually involves the application of industry (domain) standards.

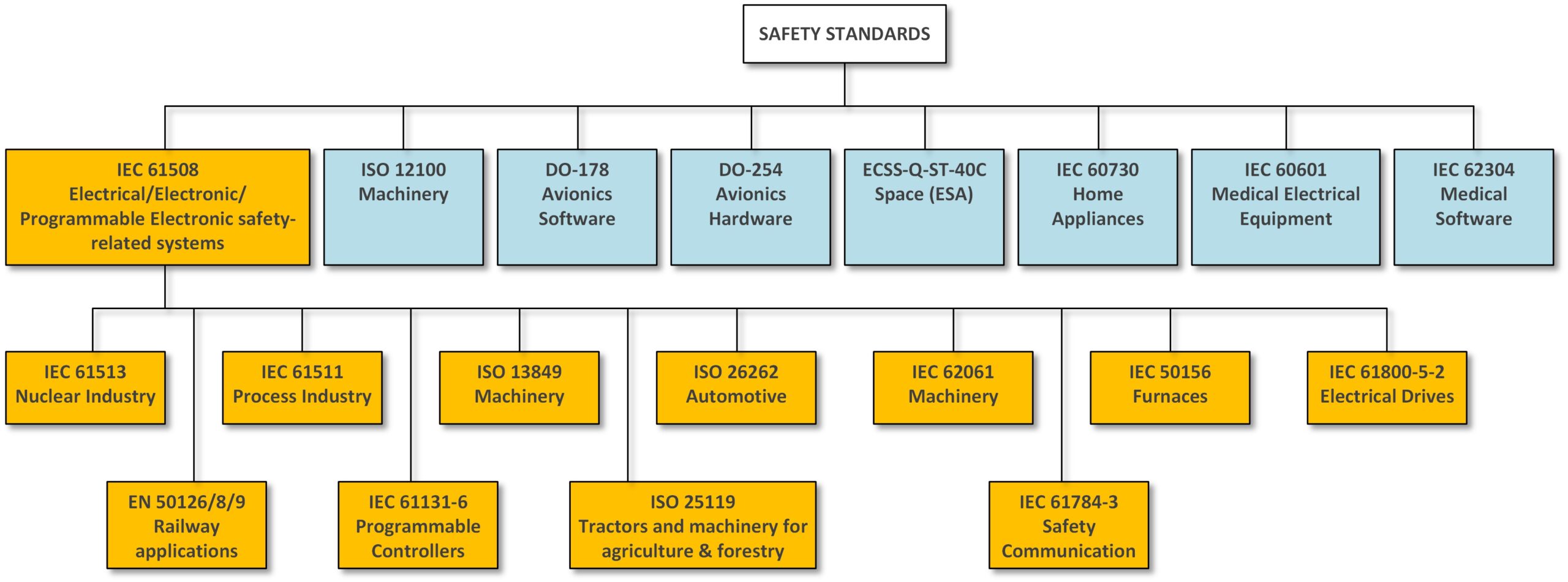

In international practice, organizations such as the FAA, NRC, EUROCONTROL, CENELEC, IEC, and ISO have issued standards for the development of critical systems in various fields. Examples are IEC 61508 for industrial applications; ISO 26262 for the automotive domain; DO-178B and DO-178C for avionics; CENELEC EN 50126, EN 50128, and EN 50129 for railway transport; IEC 62304 for medical device software; IEC 61513 for the nuclear industry; and the ECSS standards for the space domain (Figure 1).

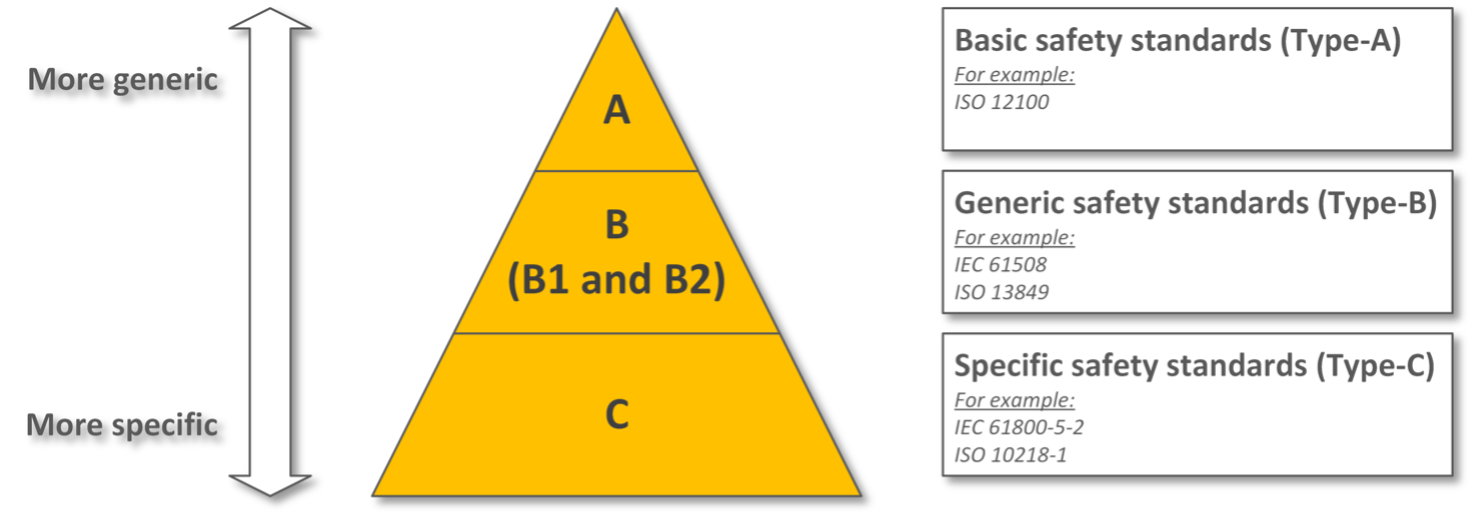

Note that standards can be subdivided into types. Suppose a company intends to develop safety components for machines that will be placed on the European market. In this situation, basic safety standards (e.g. IEC 61508, ISO 12100), generic safety standards (e.g. IEC 62061, ISO 13849), and product-specific safety standards (e.g. IEC 61800-5-2) apply. These are often referred to as Type A, B, and C standards, respectively (Figure 2).

These standards provide guidance on the activities within the software development and testing life cycle. To qualify for final product certification, the development organization must provide evidence for each artifact produced.

There are four main parties involved in the certification process: the standard(s), the applicant, the assessment body, and an authorized body independent of the applicant. The applicant must provide evidence (sometimes referred to as a “certification package”) that the guidelines of the standard have been properly implemented. The assessment body reviews the certification package and the software product (if available at that stage) to determine whether they conform to the standards. Based on the results of the assessment, the authorized body issues the certificate to the applicant or requests additional evidence.

Test Methodology

Critical software testing can be divided into three groups of methods:

reliability, availability, maintainability, safety and security (RAMS) analysis;

verification and validation, including fault injection, formal methods, and methods of safety assessment and qualification;

qualification and certification support.

RAMS analysis covers the analysis of hazards, failure modes, the effects and criticality of failures, common-cause failures, and hardware/software interaction. RAMS usually refers to a set of quality characteristics used in engineering. The need to achieve acceptable levels of RAMS for software, and then to evaluate product quality against them, has given rise to methods that are adapted to software and operate at every stage of the development life cycle.
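The criticality analysis of failure modes can be sketched as a simple ranking. The example below assumes the risk priority number (RPN) convention common in FMEA practice, where severity, occurrence, and detection are each rated on a 1 to 10 scale and multiplied; the source does not prescribe this scheme, and the failure modes and ratings are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    name: str
    severity: int    # 1 (negligible) .. 10 (catastrophic)
    occurrence: int  # 1 (remote) .. 10 (frequent)
    detection: int   # 1 (almost certainly detected) .. 10 (undetectable)

    @property
    def rpn(self) -> int:
        """Risk priority number: product of the three ratings (assumed convention)."""
        return self.severity * self.occurrence * self.detection


def rank_by_criticality(modes):
    """Return the failure modes ordered from highest to lowest RPN."""
    return sorted(modes, key=lambda m: m.rpn, reverse=True)


# Hypothetical software failure modes with illustrative ratings.
modes = [
    FailureMode("stale sensor value used", severity=9, occurrence=3, detection=6),
    FailureMode("watchdog not refreshed", severity=7, occurrence=2, detection=2),
    FailureMode("message counter overflow", severity=5, occurrence=4, detection=7),
]

ranked = rank_by_criticality(modes)
for mode in ranked:
    print(mode.rpn, mode.name)
```

The ranking can then be used to decide which artifacts receive the most verification effort.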

Examples are SFMECA (Software Failure Modes, Effects and Criticality Analysis), SFTA (Software Fault Tree Analysis), ETA (Event Tree Analysis), SCCFA (Software Common Cause Failure Analysis), and HSIA (Hardware/Software Interaction Analysis). These analyses address RAMS (reliability, availability, maintainability, safety and security) at the top level. At a lower level, broader methods are used to ensure efficiency and to provide feedback on the RAMS analysis. They cover all stages of the development process: design principles and methods (for example, reuse, modularity, and partitioning, or supporting methods such as simulation and mathematical modeling), coding standards and conventions, software verification and validation methods (e.g. testing and analysis), evaluation methods (e.g. RAMS estimation based on measurements), and fault tolerance mechanisms.
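The quantitative side of fault tree analysis can be shown with a worked example. The sketch below assumes statistically independent basic events, so an AND gate multiplies probabilities and an OR gate combines them as one minus the product of the complements. The tree structure and the probabilities are hypothetical.

```python
from math import prod


def and_gate(*probs: float) -> float:
    """Output event occurs only if all input events occur (independence assumed)."""
    return prod(probs)


def or_gate(*probs: float) -> float:
    """Output event occurs if at least one input event occurs (independence assumed)."""
    return 1.0 - prod(1.0 - p for p in probs)


# Hypothetical tree: the top event "loss of function" occurs if the primary
# channel AND the backup channel both fail; each channel fails if its
# software OR its sensor fails.
p_primary = or_gate(1e-3, 1e-4)  # software fault, sensor fault
p_backup = or_gate(2e-3, 1e-4)
p_top = and_gate(p_primary, p_backup)

print(f"P(top event) = {p_top:.3e}")
```

The independence assumption is exactly what a common-cause failure violates: if both channels share the same faulty software, the AND gate no longer reduces the top-event probability, which is why common-cause analysis complements the fault tree.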

The key problems in applying these methods in practice concern their cost-effectiveness relative to the required quality; for software, this raises distinctive and hard problems in terms of both cost and quality assurance.

Verification and validation. Verification and validation activities are carried out throughout the system development and testing life cycle, from planning to acceptance. They provide evidence that the product or system operates within its system, hardware, and software specifications, and they help to find and remove faults. They also ensure that the results of the safety and reliability analysis are not invalidated by an incorrect implementation and that the system does what it was designed to do. Verification and validation activities can be applied at all levels of the system, in both hardware and software.

Verification ensures that the system meets its requirements at every stage of the life cycle. This is achieved through analysis, review, and formal evaluation of intermediate and final system artifacts. The artifacts to be analyzed are selected on the basis of a prior criticality analysis, which improves the cost-effectiveness of the activity.

Validation demonstrates that the system fulfills its intended purpose. This is achieved by testing the product under real or simulated conditions. Validation evaluates error-handling behavior, safety and security issues, and areas where a failure could have undesired consequences. It can also combine several methods that examine the system from different perspectives to confirm that it is fit for its intended use, for example fault injection, formal methods, and safety assessment.
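Fault injection, one of the validation methods named above, can be sketched in a few lines. The example is illustrative only: `control_step`, the sensor range of 0 to 100, and the safe output value are hypothetical. Each injected fault replaces the real sensor with a faulty one, and the check is that the controller falls back to its safe state every time.

```python
import math

SAFE_OUTPUT = 0.0  # hypothetical safe state: actuator commanded off


def control_step(read_sensor) -> float:
    """Return an actuator command in [0, 1], or SAFE_OUTPUT on a bad reading."""
    try:
        value = read_sensor()
    except OSError:
        return SAFE_OUTPUT  # sensor unreachable
    if not isinstance(value, float) or math.isnan(value):
        return SAFE_OUTPUT  # reading is not a usable number
    if not 0.0 <= value <= 100.0:
        return SAFE_OUTPUT  # reading outside the assumed physical range
    return value / 100.0


def raising_sensor() -> float:
    raise OSError("injected fault: bus timeout")


# Injected faults: each entry stands in for the real sensor.
injected_faults = {
    "bus timeout": raising_sensor,
    "NaN reading": lambda: float("nan"),
    "out of range": lambda: 250.0,
    "negative value": lambda: -1.0,
}

results = {name: control_step(fault) for name, fault in injected_faults.items()}
nominal = control_step(lambda: 40.0)

for name, output in results.items():
    print(f"{name}: {'safe' if output == SAFE_OUTPUT else 'UNSAFE'}")
```

Injecting faults at the sensor interface keeps the code under test unmodified, so the same controller build can be used for nominal and fault-injection runs.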

Qualification and certification support covers the definition of the necessary processes, methods, and certification approach; support for the analysis, selection, and qualification of tools; and the preparation of safety cases and evidence for certification authorities.

Certification is the confirmation, supported by documentary evidence, that a fact or statement is true, which increases confidence in a product or system. Certification usually combines a rigorous verification and validation program with a RAMS (safety and reliability analysis) program, guided by a set of mandatory standards. During the program, the life-cycle evidence is reviewed by an external regulatory organization that is legally empowered to grant or deny permission to deploy and use the system. Objective certification usually requires a certification body and is mandatory in certain domains, including aviation, nuclear power, railways, and medicine. Certification usually involves:

A process or set of guidelines that defines which objectives must be met in order to achieve certification;

A collection of safety artifacts used as evidence, records, and traceability showing that the objectives were met;

Making these artifacts visible to the certification authority through a liaison process throughout the product life cycle.

Qualification is the formal process of confirming that a given product is suitable for use in a particular environment. The environmental conditions determine the qualification requirements. Qualification can be thought of as a set of objectives that must be met before a product can be considered fit for use in accordance with a specific standard or set of guidelines. In some cases, a qualifying body is involved. Qualification can be considered part of a certification program. Although it conforms to a set of standards and requirements, it is usually limited to an extended verification and validation program that covers non-functional system requirements such as testability or maintainability.

Conclusion

To ensure impartial and reliable test execution, a certain level of independence from the development team is generally recommended. The use of the methods described above increases the reliability of system and software development even when third-party certification is not required. They are important for detecting and fixing existing defects and are in that sense reactive: they respond to defects that are already present in the system. To ensure the high integrity of the system under development, more proactive measures (such as reliability, safety, and RAMS analysis and suitability assessment) can be applied earlier in development to prevent defects from entering the system.
