Devoxx UK conference. Choosing a framework: Docker Swarm, Kubernetes, or Mesos. Part 1

- Local development.

- Deployment features.

- Multicontainer applications.

- Service discovery.

- Scaling a service.

- Run-once tasks.

- Integration with Maven.

- Rolling updates.

- Creating a Couchbase database cluster.

As a result, you will get a clear idea of what each orchestration tool has to offer and learn how to use these platforms effectively.

Arun Gupta is a Principal Open Source Technologist at Amazon Web Services who has been building developer communities at Sun, Oracle, Red Hat, and Couchbase for over 10 years. He has extensive experience leading cross-functional teams that develop and run marketing campaigns and programs. He led engineering teams at Sun, is a founding member of the Java EE team, and founded the US chapter of Devoxx4Kids. Arun Gupta is the author of more than 2,000 blog posts on IT topics and has given talks in more than 40 countries.

Good afternoon. I know this is the last talk before lunch, but I hope you still have some energy left from breakfast to listen to me. My name is Arun Gupta, I work for AWS, which we will talk about today, along with Docker and Kubernetes, so that you can choose the framework that suits you best.

I am a Docker Captain, which I am very proud of; only 70 people around the world are recognized by Docker with this status. I started with Docker back at version 0.3 and I wrote a book about it, so I know something about this technology. In addition, I have been a JavaOne Rock Star for 4 years in a row, so I understand the topic. I always learn from my audience and exchange useful tips with it, so thank you for participating.

Let’s start from the highest level. This is not Docker 101, so don’t expect me to teach you the basics. Raise your hands: who is using Docker in any form today?

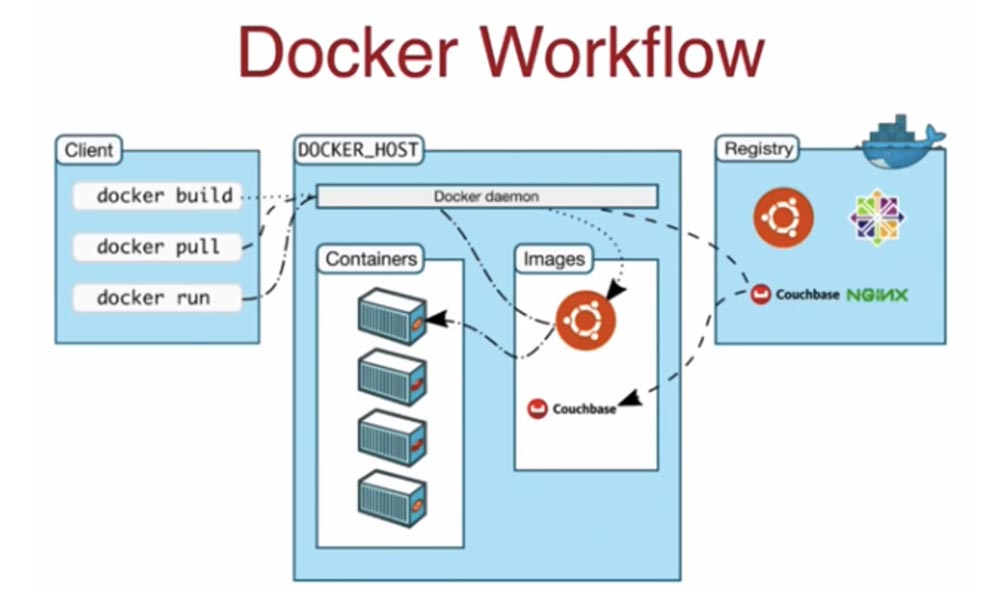

Excellent, almost 90% of those present. So, at a high level, the Docker workflow consists of 3 main components: the client, the Docker host, and the Docker registry. Suppose you want to run a container from a specific image. You tell the client: “Download this image and launch a container!” To communicate with the host, the client uses commands such as `docker build`, `docker pull`, and `docker run`. The client passes the command to the host, and the host says: “Yeah, I don’t have this image!” By default it then goes to the Docker Hub registry at hub.docker.com, downloads the image, saves it, and starts containers from it. In this sense the client is completely stateless: it simply sends the CLI command over the wire, the command reaches the host as a REST API call, and the host, which understands that API, downloads the image from Docker Hub or from another registry such as Amazon ECR and then launches containers based on it. All state is managed on the host only. Here we have one host, but it is easy enough to move to a multi-host setup.
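The client/host/registry flow described above can be sketched as a toy model. This is only an illustration, not Docker's implementation: the `Registry`, `Host`, and `client_run` names and the container ID format are hypothetical, and the real client talks to the host over a REST API rather than a direct function call.

```python
from dataclasses import dataclass, field


@dataclass
class Registry:
    """Stands in for a remote image registry such as Docker Hub."""
    images: set[str]

    def pull(self, image: str) -> str:
        if image not in self.images:
            raise LookupError(f"image not found in registry: {image}")
        return image


@dataclass
class Host:
    """Stands in for the Docker host, which holds all the state."""
    registry: Registry
    local_images: set[str] = field(default_factory=set)
    containers: list[str] = field(default_factory=list)
    pulls: int = 0

    def run(self, image: str) -> str:
        # Pull only when the image is not already cached on the host.
        if image not in self.local_images:
            self.local_images.add(self.registry.pull(image))
            self.pulls += 1
        # Hypothetical container ID: image name plus a running counter.
        container_id = f"{image}#{len(self.containers) + 1}"
        self.containers.append(container_id)
        return container_id


def client_run(host: Host, image: str) -> str:
    """The stateless client: it keeps nothing and just forwards the request."""
    return host.run(image)
```

Calling `client_run` twice for the same image starts two containers but pulls the image only once, because the cache lives on the host and not in the client.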

This is exactly what an orchestration framework is for. You do not want to run a distributed application on a single host, because that host is a single point of failure: if it goes down, it brings down the entire system. You want to spread the work across multiple hosts to avoid a global failure. For example, you want to run a Java application that uses an application server, a database server, a cache, and many other components. Moreover, you often need several instances of the application running at once, so a typical Java application is a multi-container application whose containers run on multiple hosts. That is why you need an orchestration framework: not only to schedule containers, but also to manage your cluster, container life cycles, and other necessary operations.

In this presentation, I will tell you how the two main orchestration tools, Docker and Kubernetes, differ in their capabilities, and what the pros and cons of each are. I will look at these questions from the point of view of a developer who builds an application starting from a few key, fundamental concepts: a cluster, a single container, multiple containers and how they talk to each other, service discovery (which lets services find each other), load balancing, persistent storage for stateful containers, and how local development is done.

From the Dev perspective we will move on to the Ops perspective, the architectural side of the system: how to run multiple master nodes, how the scheduler algorithm works across multiple hosts, what rules and constraints let me run a container on a specific host, how to do monitoring and rolling updates, and how to use cloud support. Today we’ll talk about all of this.

Let’s start with Docker Swarm. It is a feature of the Docker Engine and, starting with version 1.12, its standard clustering tool. It turns a set of Docker hosts into a single, consistent Swarm cluster. If you go to docker.com, you can download one of two editions, Community Edition or Enterprise Edition; Community Edition comes in two builds, Stable and Edge. Stable is the one I use: it is updated every 3 months, unlike Edge, which is updated every month. I am using Docker CE for Mac. So, when I install Docker CE on my computer, Swarm is already included, and I can immediately start creating clusters and orchestrating and scheduling containers.

Please note that Swarm needs an address on the host to communicate on, so on a host with several network interfaces you need to tell Docker which address to listen on, using the command `docker swarm init --listen-addr <ip>:2377`, where `<ip>` is the address of that Docker host.

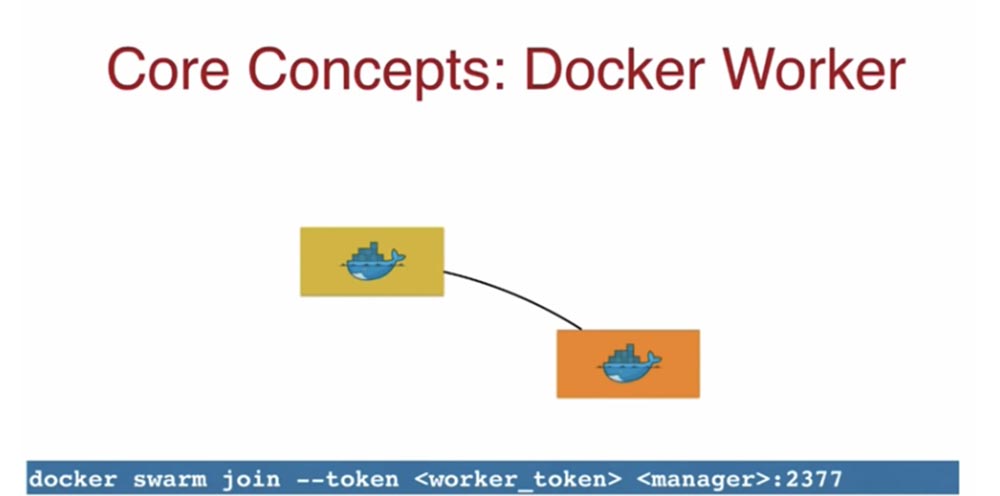

The yellow icon shows the single master node you get by default. The master gives me a worker token; I take it and use it to attach a new worker node, which can be a physical or a virtual machine, it does not matter.

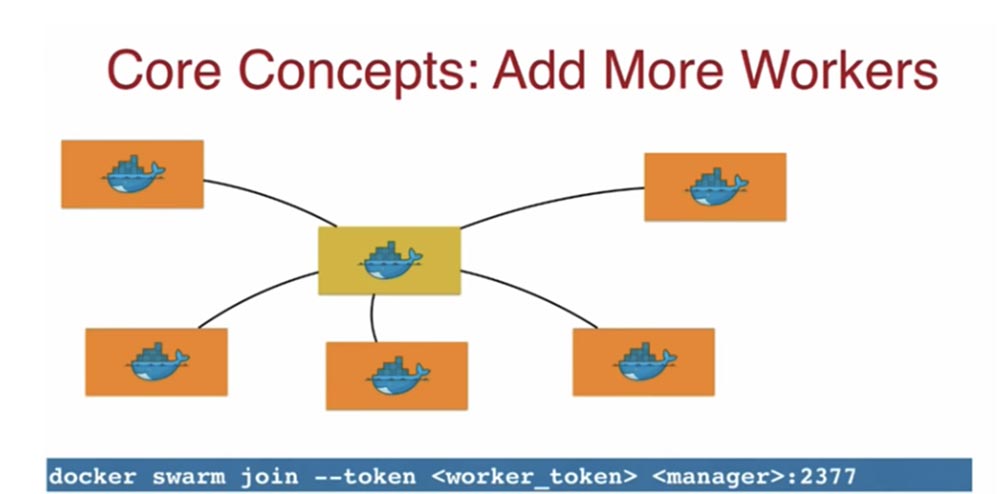

The token tells the new node to join the original master. Using the worker token, I can build my own Docker cluster. So with Docker it is very easy to create a cluster of several nodes. The next slide shows a cluster of one master node and 5 worker nodes.
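The two steps above, initializing the first master and joining workers with its token, can be sketched as a small helper that only builds the command lines and does not execute them. The IP address and token are placeholders chosen for illustration; on a real cluster, `docker swarm join-token worker` prints the actual worker token.

```python
import shlex

SWARM_PORT = 2377  # the port used in the talk's example


def swarm_init_command(manager_ip: str) -> list[str]:
    """Command that turns the current host into the first master node."""
    return ["docker", "swarm", "init",
            "--listen-addr", f"{manager_ip}:{SWARM_PORT}"]


def swarm_join_command(worker_token: str, manager_ip: str) -> list[str]:
    """Command that a new worker runs to join the master's cluster."""
    return ["docker", "swarm", "join",
            "--token", worker_token, f"{manager_ip}:{SWARM_PORT}"]


def render(argv: list[str]) -> str:
    """Render an argument list as a shell-safe command line."""
    return shlex.join(argv)


# Placeholder values, not real credentials or addresses.
EXAMPLE_IP = "192.0.2.10"
EXAMPLE_TOKEN = "SWMTKN-example"
```

Printing `render(swarm_init_command(EXAMPLE_IP))` gives the line to run on the master, and `render(swarm_join_command(EXAMPLE_TOKEN, EXAMPLE_IP))` gives the line to run on each new worker.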

Just as easily, you can create a cluster with several master nodes, a primary master node and a secondary master node, and link them to the worker nodes.

On the client side, when you send a request to the secondary master, it acts as a proxy and forwards the request to the primary master, which executes it and distributes the load across the worker nodes. By default, your containers can also run on a manager node, but when you build a cluster, containers should be placed only on worker nodes, and the managers should only administer the cluster.

The next basic concept, the cluster, is an architecture of several master nodes and several worker nodes on which containers run. The masters keep a strongly consistent state that is replicated using the Raft consensus algorithm, and it is very fast because reads are served from memory.

I highly recommend a setup that relies on the Raft protocol, because if the network is partitioned, you do not have to work out which node now plays the role of master: with Raft, the election happens automatically. At the bottom level are the Swarm worker nodes, which communicate with each other via a gossip protocol. You can see that the red containers run on 3 nodes and the green containers on two nodes, which shows that a Swarm worker can run several different tasks.
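To make the point about network partitions concrete, here is a minimal sketch of the majority arithmetic that Raft relies on. It is not Swarm code: the function names are made up for this example, and it models only the quorum rule (a leader needs a strict majority of the master nodes), not the election protocol itself.

```python
from typing import Optional


def quorum(managers: int) -> int:
    """Smallest number of master nodes that forms a strict majority."""
    if managers < 1:
        raise ValueError("a cluster needs at least one master node")
    return managers // 2 + 1


def tolerated_failures(managers: int) -> int:
    """How many master nodes can be lost while a majority remains."""
    return managers - quorum(managers)


def partition_with_leader(partition_sizes: list[int]) -> Optional[int]:
    """Index of the partition that can still elect a leader, if any.

    partition_sizes lists how many master nodes ended up on each side
    of a network split. Only a side holding a majority of all masters
    can elect a leader; at most one side can.
    """
    needed = quorum(sum(partition_sizes))
    for index, size in enumerate(partition_sizes):
        if size >= needed:
            return index
    return None
```

With 3 masters, a 2/1 split leaves the side with 2 nodes able to elect a leader. With 4 masters, a 2/2 split leaves neither side with a majority, which is why an odd number of master nodes is the usual choice.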

How does a replicated service work in Docker? Since I am a Java developer, I use the WildFly container in this example.

To do this, I enter the command `docker service create --replicas 3 --name web jboss/wildfly` on the Docker command line, specifying the number of replicas I need, the service name web, and the wildfly image. The image is downloaded to the 3 Docker hosts and the containers are started.

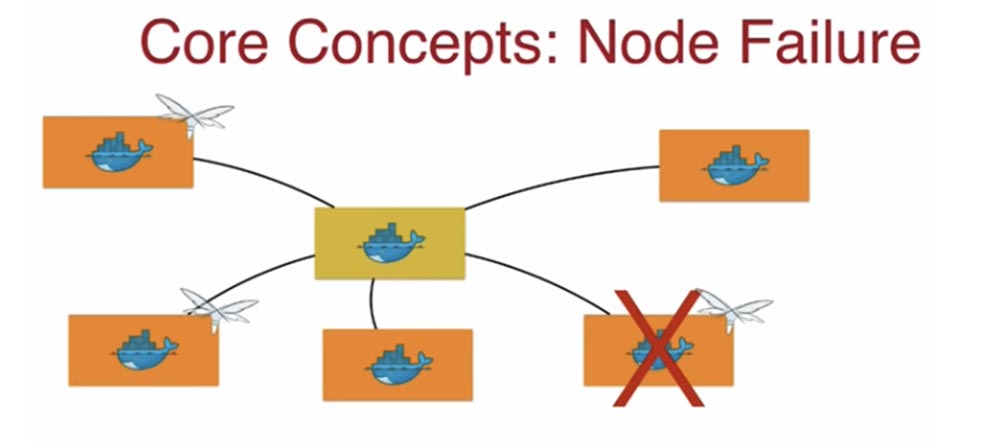
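How three replicas end up on three hosts can be sketched with a simple even-spread rule. This rule is an assumption made for illustration: Swarm's real scheduler also considers resources and constraints, and the `place_replicas` function and node names below are hypothetical.

```python
def place_replicas(nodes: list[str], replicas: int) -> dict[str, int]:
    """Spread replicas as evenly as possible across the given nodes.

    Returns a mapping of node name to the number of replicas placed there.
    """
    if not nodes:
        raise ValueError("at least one node is required")
    placement = {node: 0 for node in nodes}
    for _ in range(replicas):
        # Pick the node that currently runs the fewest replicas;
        # on a tie, the node listed first wins.
        target = min(nodes, key=lambda name: placement[name])
        placement[target] += 1
    return placement
```

For the `web` service with 3 replicas on 3 nodes, each node gets one container; with only 2 nodes, one of them has to run two.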

Another important concept is node failure. What happens then?

Docker sees that the current state of the system does not match the desired state: one node has dropped out of the cluster, so the containers from that node have to be moved to another host. That is, if you declared that three replicas must run, Docker makes sure that these 3 replicas exist under any conditions. It can also happen that a single container fails on a worker node. As in the previous case, Docker keeps track of the specified number of containers, and if it drops, the scheduler kicks in and immediately restores the container, bringing the current state of the cluster back to the desired state. That is, if you declared 3 replicas, all of them should be running at any given time.
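The desired-state idea described above can be sketched as a tiny reconciliation loop. This is a simplified model under stated assumptions, not Swarm's scheduler: the `Cluster` class, its method names, and the "least loaded node first" placement rule are all invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    desired_replicas: int
    # Healthy node name -> number of running containers of the service.
    running: dict[str, int] = field(default_factory=dict)

    def current_replicas(self) -> int:
        return sum(self.running.values())

    def fail_node(self, node: str) -> None:
        """A whole node drops out, taking its containers with it."""
        self.running.pop(node, None)

    def fail_container(self, node: str) -> None:
        """A single container dies on an otherwise healthy node."""
        if self.running.get(node, 0) > 0:
            self.running[node] -= 1

    def reconcile(self) -> int:
        """Start containers until current state matches desired state.

        Returns how many containers had to be started.
        """
        if not self.running:
            raise RuntimeError("no healthy nodes left to schedule on")
        started = 0
        while self.current_replicas() < self.desired_replicas:
            # Assumed rule: place the new container on the least loaded node.
            target = min(self.running, key=self.running.get)
            self.running[target] += 1
            started += 1
        return started
```

Starting from 3 replicas on 3 nodes, calling `fail_node` on one node and then `reconcile` starts one replacement container on a surviving node, so the declared 3 replicas are running again. Calling `reconcile` when nothing has failed starts nothing.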

To be continued very soon …
